Mistral AI, a Paris-based open-source model startup, has challenged norms by releasing its latest large language model (LLM), MoE 8x7B, via a simple torrent link. This contrasts with Google’s traditional approach to its Gemini launch and has sparked conversation and excitement within the AI community.

Mistral AI’s approach to releases has always been unconventional. Often forgoing the usual accompaniments of papers, blogs, or press releases, its strategy has been uniquely effective in capturing the AI community’s attention.

Recently, the company achieved a remarkable $2 billion valuation following a funding round led by Andreessen Horowitz. The company had earlier set a record with a $118 million seed round, the largest in European history. Beyond its funding successes, Mistral AI has been actively involved in discussions around the EU AI Act, advocating for reduced regulation of open-source AI.

Why MoE 8x7B is Drawing Attention

Described as a “scaled-down GPT-4,” Mixtral 8x7B uses a Mixture of Experts (MoE) framework with eight experts. The figures sometimes quoted alongside this description (111B parameters per expert plus 55B shared attention parameters, for a total of 166B parameters per model) match the rumored GPT-4 configuration rather than Mixtral itself; Mistral AI reports about 46.7B total parameters for Mixtral, of which roughly 12.9B are used per token. The design choice is significant because only two experts are involved in the inference of each token, highlighting a shift toward more efficient and focused AI processing.

One of the key highlights of Mixtral is its ability to manage an extensive context of 32,000 tokens, providing ample scope for handling complex tasks. The model’s multilingual capabilities include robust support for English, French, Italian, German, and Spanish, catering to a global developer community.

The pre-training of Mixtral involves data sourced from the open Web, with the experts and routers trained simultaneously. This method ensures that the model is not just vast in its parameter space but also finely tuned to the nuances of the vast data it has been exposed to.

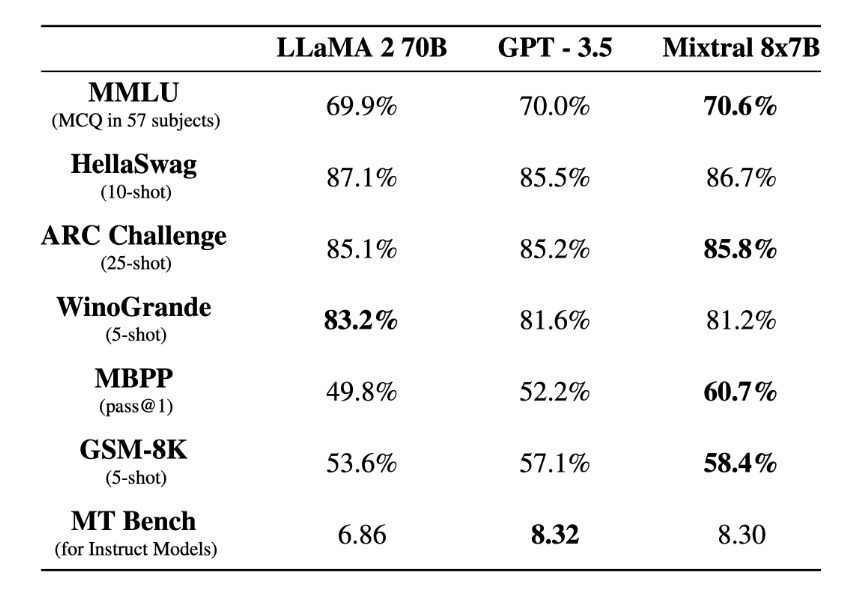

Mixtral 8x7B achieves an impressive score

Mixtral 8x7B outperforms LLaMA 2 70B and rivals GPT-3.5. This is especially notable on the MBPP task, where its 60.7% success rate is significantly higher than that of its counterparts. Even on the rigorous MT-Bench, which is tailored for instruction-following models, Mixtral 8x7B achieves an impressive score, nearly matching GPT-3.5.

Understanding the Mixture of Experts (MoE) Framework

The Mixture of Experts (MoE) model, while gaining recent attention due to its incorporation into state-of-the-art language models like Mistral AI’s MoE 8x7B, is actually rooted in foundational concepts that date back several years. Let’s revisit the origins of this idea through seminal research papers.

The Concept of MoE

Mixture of Experts (MoE) represents a paradigm shift in neural network architecture. Unlike traditional models that use a single, homogeneous network to process all types of data, MoE adopts a more specialized and modular approach. It consists of multiple ‘expert’ networks, each designed to handle specific types of data or tasks, overseen by a ‘gating network’ that dynamically directs input data to the most appropriate expert.

A Mixture of Experts (MoE) layer embedded within a recurrent language model

The above image presents a high-level view of an MoE layer embedded within a language model. At its essence, the MoE layer comprises multiple feed-forward sub-networks, termed ‘experts,’ each with the potential to specialize in processing different aspects of the data. A gating network, highlighted in the diagram, determines which combination of these experts is engaged for a given input. This conditional activation allows the network to significantly increase its capacity without a corresponding surge in computational demand.
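
To make the structure concrete, here is a minimal sketch of such a layer in plain Python. It is an illustration under stated assumptions, not any particular model's implementation: the class names `Expert` and `MoELayer`, the layer sizes, and the random weights are all hypothetical. For clarity it evaluates every expert and mixes their outputs with the gate weights; the sparse variant, which runs only the selected experts, is what delivers the compute savings.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a row-major matrix by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class Expert:
    """A tiny two-layer feed-forward network with a ReLU in between."""

    def __init__(self, dim, hidden, rng):
        self.w_in = random_matrix(hidden, dim, rng)
        self.w_out = random_matrix(dim, hidden, rng)

    def __call__(self, x):
        h = [max(0.0, v) for v in matvec(self.w_in, x)]
        return matvec(self.w_out, h)


class MoELayer:
    """Several experts plus a linear gating network (hypothetical sizes)."""

    def __init__(self, dim, hidden, num_experts, seed=0):
        rng = random.Random(seed)
        self.experts = [Expert(dim, hidden, rng) for _ in range(num_experts)]
        self.w_gate = random_matrix(num_experts, dim, rng)

    def gate(self, x):
        # One weight per expert; the weights sum to 1.
        return softmax(matvec(self.w_gate, x))

    def __call__(self, x):
        weights = self.gate(x)
        outputs = [expert(x) for expert in self.experts]
        # Weighted sum of expert outputs, one output dimension at a time.
        return [
            sum(w * out[i] for w, out in zip(weights, outputs))
            for i in range(len(x))
        ]


layer = MoELayer(dim=4, hidden=8, num_experts=3)
x = [0.5, -1.0, 0.25, 2.0]
gate_weights = layer.gate(x)
y = layer(x)
```

The output `y` has the same dimension as the input, so the layer can stand in wherever a single feed-forward network would otherwise sit.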

Functionality of the MoE Layer

In practice, the gating network evaluates the input x and produces gate values (denoted G(x) in the diagram) that select a sparse set of experts to process it. These gate values also weight the selected experts, effectively determining the ‘vote’ or contribution of each expert to the final output. For example, as shown in the diagram, only two experts may be chosen to compute the output for each input token, which keeps the process efficient by concentrating computational resources where they are most needed.
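
The selection step can be sketched as top-k gating: keep the k largest gate scores, renormalize them with a softmax, and run only those experts. The snippet below is a hedged illustration in plain Python; the function names `top_k_gate` and `sparse_moe`, the toy experts, and the gate scores are assumptions made for the example and are not taken from any specific model.

```python
import math


def top_k_gate(logits, k=2):
    """Return {expert_index: weight} for the k largest logits.

    The weights are a softmax over the selected logits only, so they sum to 1.
    """
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in chosen)
    exps = {i: math.exp(logits[i] - m) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def sparse_moe(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    weights = top_k_gate(gate_logits, k)
    output = [0.0] * len(x)
    for index, weight in weights.items():
        expert_out = experts[index](x)  # unselected experts are never called
        for j, value in enumerate(expert_out):
            output[j] += weight * value
    return output


# Hypothetical toy experts: each one scales the input by a different factor.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
gate_logits = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for one token

weights = top_k_gate(gate_logits, k=2)
result = sparse_moe([1.0, -1.0], experts, gate_logits, k=2)
```

With these made-up scores, experts 1 and 3 tie for the highest score and each receives half the weight, while experts 0 and 2 contribute nothing and cost nothing to evaluate.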

Transformer Encoder with MoE Layers

The second illustration above contrasts a standard Transformer encoder with one augmented by an MoE layer. The Transformer architecture, widely known for its efficacy in language-related tasks, traditionally consists of self-attention and feed-forward layers stacked in sequence. Introducing MoE layers replaces some of these feed-forward layers, enabling the model to scale its capacity more effectively.
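
As a rough structural sketch of that substitution, the snippet below lays out an encoder stack in which some feed-forward sublayers are swapped for MoE sublayers. The function name `build_encoder_layout` and the choice to replace every second feed-forward layer are assumptions made for illustration; the text above only says that some layers are replaced.

```python
def build_encoder_layout(num_layers, moe_every=2):
    """Describe each encoder layer as (attention sublayer, feed-forward sublayer).

    Every `moe_every`-th layer uses an MoE sublayer instead of a dense one.
    The interval is a hypothetical setting, not a value from the source.
    """
    layout = []
    for layer in range(1, num_layers + 1):
        ffn = "moe" if layer % moe_every == 0 else "dense_ffn"
        layout.append(("self_attention", ffn))
    return layout


layout = build_encoder_layout(num_layers=4)
for index, (attention, ffn) in enumerate(layout, start=1):
    print(f"layer {index}: {attention} -> {ffn}")
```

The self-attention sublayers are untouched; only the feed-forward slot changes, which is why MoE can be added to an existing Transformer design with few other modifications.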

In the augmented model, the MoE layers are sharded across multiple devices, showcasing a model-parallel approach. This is critical when scaling to very large models, because it distributes the computational load and memory requirements across a cluster of devices, such as GPUs or TPUs. Sharding is essential for training and deploying models with billions of parameters efficiently, as evidenced by the training of models with hundreds of billions to over a trillion parameters on large-scale compute clusters.
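
The bookkeeping behind expert sharding can be sketched without any real hardware: place each expert on a device, then group tokens by the device that hosts the expert they were routed to. This is a simplified simulation in plain Python; the round-robin placement, the function names `shard_experts` and `dispatch`, and the routing decisions are hypothetical, and a real system would perform the exchange with collective communication between devices.

```python
from collections import defaultdict


def shard_experts(num_experts, num_devices):
    """Map expert index -> device index using round-robin placement."""
    return {expert: expert % num_devices for expert in range(num_experts)}


def dispatch(token_to_expert, placement):
    """Group (token_id, expert) pairs by the device hosting that expert."""
    per_device = defaultdict(list)
    for token_id, expert in enumerate(token_to_expert):
        per_device[placement[expert]].append((token_id, expert))
    return dict(per_device)


placement = shard_experts(num_experts=8, num_devices=4)
routed = [0, 5, 2, 7, 4]  # hypothetical routing decision for five tokens
batches = dispatch(routed, placement)
```

Here device 0 hosts experts 0 and 4 and therefore receives two of the five tokens, which hints at why uneven routing translates directly into uneven device load.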

The Sparse MoE Approach with Instruction Tuning on LLM

The paper titled “Sparse Mixture-of-Experts (MoE) for Scalable Language Modeling” discusses an innovative approach to improving Large Language Models (LLMs) by integrating the Mixture of Experts architecture with instruction tuning techniques.

It highlights a common challenge: MoE models underperform dense models of equal computational capacity when fine-tuned for specific tasks, because of discrepancies between general pre-training and task-specific fine-tuning.

Instruction tuning is a training method in which models are refined to better follow natural language instructions, effectively improving their task performance. The paper suggests that MoE models show a notable improvement when combined with instruction tuning, more so than their dense counterparts. This technique aligns the model’s pre-trained representations to follow instructions more effectively, leading to significant performance gains.

The researchers conducted studies across three experimental setups, revealing that MoE models initially underperform in direct task-specific fine-tuning. However, when instruction tuning is applied, MoE models excel, particularly when further supplemented with task-specific fine-tuning. This suggests that instruction tuning is a vital step for MoE models to outperform dense models on downstream tasks.

The effect of instruction tuning on MoE

The paper also introduces FLAN-MOE32B, a model that demonstrates the successful application of these ideas. Notably, it outperforms FLAN-PALM62B, a dense model, on benchmark tasks while using only one-third of the computational resources. This showcases the potential for sparse MoE models combined with instruction tuning to set new standards for LLM efficiency and performance.

Implementing Mixture of Experts in Real-World Scenarios

The versatility of MoE models makes them well suited to a range of applications:

- Natural Language Processing (NLP): MoE models can handle the nuances and complexities of human language more effectively, making them a strong fit for advanced NLP tasks.

- Image and Video Processing: In tasks requiring high-resolution processing, MoE can handle different aspects of images or video frames, improving both quality and processing speed.

- Customizable AI Solutions: Businesses and researchers can tailor MoE models to specific tasks, leading to more targeted and effective AI solutions.

Challenges and Considerations

While MoE models offer numerous benefits, they also present unique challenges:

- Complexity in Training and Tuning: The distributed nature of MoE models can complicate the training process, requiring careful balancing and tuning of the experts and gating network.

- Resource Management: Efficiently managing computational resources across multiple experts is crucial for maximizing the benefits of MoE models.
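
One common way to address the balancing problem is an auxiliary load-balancing loss added during training. The sketch below follows the form used in the Switch Transformer line of work (number of experts times the sum over experts of the fraction of tokens routed to the expert multiplied by its mean router probability). This technique is brought in here for illustration and is not described in the source above; the function name `load_balance_loss` and the sample values are hypothetical.

```python
def load_balance_loss(router_probs, assignments, num_experts):
    """Auxiliary loss that is smallest when tokens spread evenly over experts.

    router_probs: per-token list of router probabilities, one per expert.
    assignments:  per-token index of the expert the token was sent to.
    """
    num_tokens = len(assignments)

    # Fraction of tokens actually dispatched to each expert.
    fraction = [0.0] * num_experts
    for expert in assignments:
        fraction[expert] += 1.0 / num_tokens

    # Mean router probability assigned to each expert.
    mean_prob = [
        sum(probs[i] for probs in router_probs) / num_tokens
        for i in range(num_experts)
    ]

    return num_experts * sum(f * p for f, p in zip(fraction, mean_prob))


# Evenly spread routing versus routing that collapses onto one expert.
balanced = load_balance_loss([[0.5, 0.5], [0.5, 0.5]], [0, 1], num_experts=2)
skewed = load_balance_loss([[0.9, 0.1], [0.9, 0.1]], [0, 0], num_experts=2)
```

The skewed case yields a larger loss than the balanced one, so scaling this term by a small coefficient and adding it to the training objective nudges the router toward using all experts.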

Incorporating MoE layers into neural networks, especially in the domain of language models, offers a path toward scaling models to sizes previously infeasible due to computational constraints. The conditional computation enabled by MoE layers allows for a more efficient distribution of computational resources, making it possible to train larger, more capable models. As we continue to demand more from our AI systems, architectures like the MoE-equipped Transformer are likely to become the standard for handling complex, large-scale tasks across various domains.