New research from Canada describes a method by which attackers could steal the fruits of expensive machine learning frameworks, even when the only access to a proprietary system is via a highly sanitized and apparently well-defended API (an interface or protocol that processes user queries server-side, and returns only the output response).

As the research sector looks increasingly towards monetizing expensive model training through Machine Learning as a Service (MLaaS) implementations, the new work suggests that Self-Supervised Learning (SSL) models are more vulnerable to this kind of model exfiltration, because they are trained without user labels, which simplifies extraction, and because they usually return results that contain a great deal of useful information for someone wishing to replicate the (hidden) source model.

In ‘black box’ test simulations (where the researchers granted themselves no more access to a local ‘victim’ model than a typical end-user would have via a web API), the researchers were able to replicate the target systems with relatively low resources:

‘[Our] attacks can steal a copy of the victim model that achieves considerable downstream performance in fewer than 1/5 of the queries used to train the victim. Against a victim model trained on 1.2M unlabeled samples from ImageNet, with a 91.9% accuracy on the downstream Fashion-MNIST classification task, our direct extraction attack with the InfoNCE loss stole a copy of the encoder that achieves 90.5% accuracy in 200K queries.

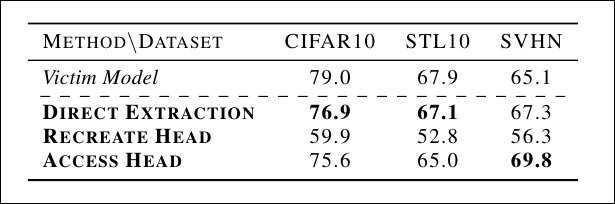

‘Similarly, against a victim trained on 50K unlabeled samples from CIFAR10, with a 79.0% accuracy on the downstream CIFAR10 classification task, our direct extraction attack with the SoftNN loss stole a copy that achieves 76.9% accuracy in 9,000 queries.’

The researchers used three attack methods, finding that ‘Direct Extraction’ was the most effective. These models were stolen from a locally recreated CIFAR10 victim encoder using 9,000 queries from the CIFAR10 test-set. Source: https://arxiv.org/pdf/2205.07890.pdf
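The figures quoted above can be checked with a few lines of arithmetic. The sketch below is only an illustration: it compares the number of attack queries with the size of the victim's training set, which is one possible reading of ‘queries used to train the victim’, and the names `cases` and `summarize` are made up for this example.

```python
# Figures quoted from the paper, as cited above.
cases = {
    "ImageNet victim, InfoNCE loss": {
        "victim_samples": 1_200_000, "attack_queries": 200_000,
        "victim_acc": 91.9, "stolen_acc": 90.5,
    },
    "CIFAR10 victim, SoftNN loss": {
        "victim_samples": 50_000, "attack_queries": 9_000,
        "victim_acc": 79.0, "stolen_acc": 76.9,
    },
}


def summarize(case):
    """Return (query fraction, accuracy gap in percentage points)."""
    # Assumption: the query budget is compared with the training-set size.
    fraction = case["attack_queries"] / case["victim_samples"]
    gap = round(case["victim_acc"] - case["stolen_acc"], 1)
    return fraction, gap


for name, case in cases.items():
    fraction, gap = summarize(case)
    print(f"{name}: {fraction:.1%} of the training-set size, "
          f"{gap} points below the victim")
```

On this reading, both attacks stay under the one-fifth threshold while losing only a couple of percentage points of downstream accuracy.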

The researchers also note that methods suited to protecting supervised models from attack do not adapt well to models trained on an unsupervised basis, even though such models represent some of the most anticipated and celebrated fruits of the image synthesis sector.

The new paper is titled On the Difficulty of Defending Self-Supervised Learning against Model Extraction, and comes from the University of Toronto and the Vector Institute for Artificial Intelligence.

Self-Awareness

In Self-Supervised Learning, a model is trained on unlabeled data. Without labels, an SSL model must learn associations and groups from the implicit structure of the data, seeking out similar facets of the data and gradually corralling these facets into nodes, or representations.

Where an SSL approach is viable, it is highly productive, since it bypasses the need for expensive (often outsourced and controversial) categorization by crowdworkers, and essentially rationalizes the data autonomously.

The three SSL approaches considered by the new paper’s authors are SimCLR, a Siamese Network; SimSiam, another Siamese Network centered on representation learning; and Barlow Twins, an SSL approach that achieved state-of-the-art ImageNet classifier performance on its release in 2021.

Model extraction for labeled data (i.e. a model trained through supervised learning) is a relatively well-documented research area. It is also easier to defend against, since the attacker must obtain the labels from the victim model in order to recreate it.

From a previous paper, a ‘knockoff classifier’ attack model against a supervised learning architecture. Source: https://arxiv.org/pdf/1812.02766.pdf

Without white-box access, this is not a trivial task, since the typical output from an API request to such a model contains less information than the output of a typical SSL API.

From the paper*:

‘Past work on model extraction focused on the Supervised Learning (SL) setting, where the victim model typically returns a label or other low-dimensional outputs like confidence scores or logits.

‘In contrast, SSL encoders return high-dimensional representations; the de facto output for a ResNet-50 Sim-CLR model, a popular architecture in vision, is a 2048-dimensional vector.

‘We hypothesize this significantly higher information leakage from encoders makes them more vulnerable to extraction attacks than SL models.’
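To give a rough sense of the gap the authors describe, the back-of-envelope sketch below compares how much a single query can return in each setting. It rests on assumptions that are not in the paper: a hypothetical 1000-class classifier that returns one hard label, and an encoder that returns 32-bit floats. Raw payload size is only an upper bound, not a measure of the information actually leaked.

```python
import math

# Assumptions for illustration only (not taken from the paper):
NUM_CLASSES = 1000       # hypothetical supervised classifier returning a hard label
BITS_PER_FLOAT = 32      # hypothetical float32 encoding of each embedding value

# From the quoted passage: a ResNet-50 SimCLR encoder returns a 2048-d vector.
EMBEDDING_DIM = 2048

label_bits = math.log2(NUM_CLASSES)               # most a single hard label can carry
embedding_bits = EMBEDDING_DIM * BITS_PER_FLOAT   # raw payload size, an upper bound

print(f"hard label: at most {label_bits:.1f} bits per query")
print(f"embedding:  {embedding_bits} raw bits per query")
print(f"ratio:      roughly {embedding_bits / label_bits:.0f}x")
```

Even allowing for heavy redundancy in the embedding, the difference is several orders of magnitude, which is consistent with the authors' hypothesis.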

Architecture and Data

The researchers tested three approaches to SSL model inference/extraction: Direct Extraction, in which the API output is compared to a recreated encoder’s output via an apposite loss function such as Mean Squared Error (MSE); recreating the projection head, where a crucial analytical functionality of the model, usually discarded before deployment, is reassembled and used in a replica model; and accessing the projection head, which is only possible in cases where the original developers have made the architecture available.

In method #1, Direct Extraction, the output of the victim model is compared to the output of a local model; method #2 involves recreating the projection head used in the original training architecture (and usually not included in a deployed model).

The researchers found that Direct Extraction was the most effective method for obtaining a functional replica of the target model, and that it has the added benefit of being the most difficult to characterize as an ‘attack’ (because it behaves little differently from a typical and valid end user).

The authors trained victim models on three image datasets: CIFAR10, ImageNet, and Stanford’s Street View House Numbers (SVHN). The ImageNet victim used a ResNet50 architecture, while the CIFAR10 and SVHN victims used ResNet18 and ResNet34, trained with a freely available PyTorch implementation of SimCLR.

The models’ downstream (i.e. deployed) performance was tested against CIFAR100, STL10, SVHN, and Fashion-MNIST. The researchers also experimented with more ‘white box’ methods of model appropriation, though it transpired that Direct Extraction, the least privileged approach, yielded the best results.

To evaluate the representations being inferred and replicated in the attacks, the authors added a linear prediction layer to the model, which was fine-tuned on the full labeled training set from the subsequent (downstream) task, with the rest of the network layers frozen. In this way, the test accuracy on the prediction layer can function as a metric for performance. Since it contributes nothing to the inference process, this does not represent ‘white box’ functionality.

Results on the test runs, made possible by the (non-contributing) Linear Evaluation layer. Accuracy scores in bold.
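The linear evaluation protocol described above can be illustrated with a small sketch. It is a toy under stated assumptions rather than the authors' setup: the frozen encoder is a made-up three-unit function, the downstream task is a synthetic two-class problem, and the ‘linear prediction layer’ is a logistic-regression probe. All names are hypothetical.

```python
import math
import random

rng = random.Random(0)

# Hypothetical frozen encoder: these weights are fixed and never updated below.
FROZEN_W = [[1.0, -0.5], [0.3, 0.8], [-0.7, 0.2]]


def frozen_encoder(x):
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]


def make_split(n):
    """Toy labeled downstream data: two Gaussian blobs with labels 0 and 1."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        center = 1.5 if label else -1.5
        data.append(([rng.gauss(center, 1.0), rng.gauss(center, 1.0)], label))
    return data


def predict(probe, rep):
    weights, bias = probe
    z = sum(w * r for w, r in zip(weights, rep)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_probe(train, epochs=20, lr=0.5):
    """Train only the linear layer; the encoder is used as a fixed function."""
    weights, bias = [0.0] * len(FROZEN_W), 0.0
    for _ in range(epochs):
        for x, y in train:
            rep = frozen_encoder(x)
            err = predict((weights, bias), rep) - y  # log-loss gradient w.r.t. the logit
            weights = [w - lr * err * r for w, r in zip(weights, rep)]
            bias -= lr * err
    return weights, bias


def accuracy(probe, split):
    hits = sum((predict(probe, frozen_encoder(x)) >= 0.5) == bool(y) for x, y in split)
    return hits / len(split)


train, test = make_split(400), make_split(200)
probe = train_linear_probe(train)
print("linear-probe test accuracy:", accuracy(probe, test))
```

Because only the probe's weights change, its test accuracy reflects the quality of the encoder's representations, which is what makes it usable as a yardstick for both victim and stolen encoders.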

Commenting on the results, the researchers state:

‘We find that the direct objective of imitating the victim’s representations gives high performance on downstream tasks despite the attack requiring only a fraction (less than 15% in certain cases) of the number of queries needed to train the stolen encoder in the first place.’

And continue:

‘[It] is challenging to defend encoders trained with SSL since the output representations leak a substantial amount of information. The most promising defenses are reactive methods, such as watermarking, that can embed specific augmentations in high-capacity encoders.’

* My conversion of the paper’s inline citations to hyperlinks.

First published 18th May 2022.