“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks, such as pandemics and nuclear war.”

This statement, released this week by the Center for AI Safety (CAIS), reflects an overarching (and some might say overreaching) worry about doomsday scenarios caused by a runaway superintelligence. The CAIS statement mirrors the dominant concerns expressed in AI industry conversations over the last two months: namely, that existential threats may manifest over the next decade or two unless AI technology is strictly regulated on a global scale.

The statement has been signed by a who’s who of academic experts and technology luminaries ranging from Geoffrey Hinton (formerly at Google and a long-time proponent of deep learning) to Stuart Russell (a professor of computer science at Berkeley) and Lex Fridman (a research scientist and podcast host from MIT). In addition to extinction, the Center for AI Safety warns of other significant concerns ranging from enfeeblement of human thinking to threats from AI-generated misinformation undermining societal decision-making.

Doom gloom

In a New York Times article, CAIS executive director Dan Hendrycks said: “There’s a very common misconception, even in the AI community, that there only are a handful of doomers. But, in fact, many people privately would express concerns about these things.”

“Doomers” is the keyword in this statement. Clearly, there is a lot of doom talk going on now. For example, Hinton recently departed from Google so that he could embark on an AI-threatens-us-all doom tour.

Throughout the AI community, the term “P(doom)” has become fashionable to describe the probability of such doom. P(doom) is an attempt to quantify the risk of a doomsday scenario in which AI, especially superintelligent AI, causes severe harm to humanity or even leads to human extinction.

On a recent Hard Fork podcast, Kevin Roose of The New York Times set his P(doom) at 5%. Ajeya Cotra, an AI safety expert with Open Philanthropy and a guest on the show, set her P(doom) at 20 to 30%. However, it must be said that P(doom) is purely speculative and subjective, a reflection of individual beliefs and attitudes toward AI risk rather than a definitive measure of that risk.

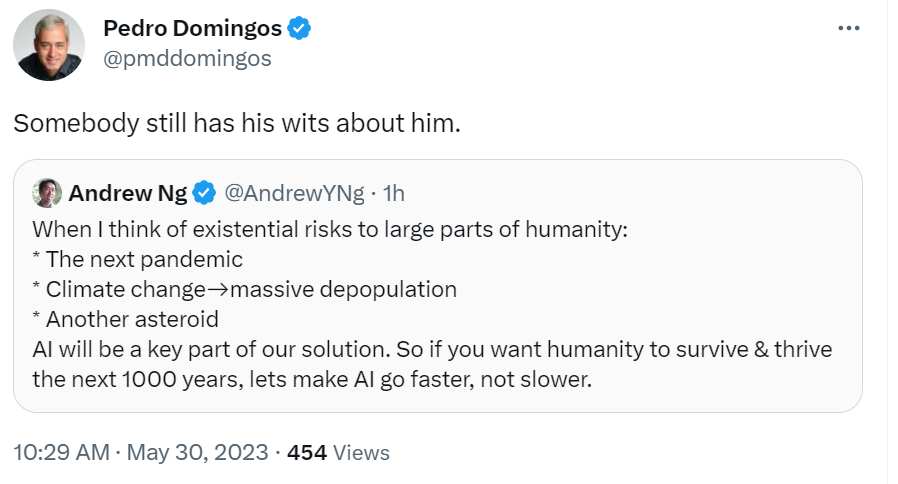

Not everyone buys into the AI doom narrative. In fact, some AI experts argue the opposite. These include Andrew Ng (who founded and led the Google Brain project) and Pedro Domingos (a professor of computer science and engineering at the University of Washington and author of The Master Algorithm). They argue, instead, that AI is part of the solution. As put forward by Ng, there are indeed existential dangers, such as climate change and future pandemics, and AI can be part of how these are addressed and hopefully mitigated.

Overshadowing the positive impact of AI

Melanie Mitchell, a prominent AI researcher, is also skeptical of doomsday thinking. Mitchell is the Davis Professor of Complexity at the Santa Fe Institute and author of Artificial Intelligence: A Guide for Thinking Humans. Among her arguments is that intelligence cannot be separated from socialization.

In Towards Data Science, Jeremie Harris, co-founder of AI safety company Gladstone AI, interprets Mitchell as arguing that a genuinely intelligent AI system is likely to become socialized by picking up common sense and ethics as a byproduct of its development and would, therefore, likely be safe.

While the concept of P(doom) serves to highlight the potential risks of AI, it can inadvertently overshadow a crucial aspect of the debate: the positive impact AI could have on mitigating existential threats.

Hence, to balance the conversation, we should also consider another possibility that I call “P(solution)” or “P(sol),” the probability that AI can play a role in addressing these threats. To give you a sense of my perspective, I estimate my P(doom) to be around 5%, but my P(sol) stands closer to 80%. This reflects my belief that, while we shouldn’t discount the risks, the potential benefits of AI could be substantial enough to outweigh them.

This isn’t to say that there are no risks or that we should not pursue best practices and regulations to avoid the worst imaginable possibilities. It is to say, however, that we should not focus solely on potential bad outcomes or on claims, such as the one made in a post on the Effective Altruism Forum, that doom is the default probability.

The alignment problem

The primary worry, according to many doomers, is the problem of alignment, where the objectives of a superintelligent AI are not aligned with human values or societal objectives. Although the subject seems new with the emergence of ChatGPT, this concern emerged nearly 65 years ago. As reported by The Economist, Norbert Wiener, an AI pioneer and the father of cybernetics, published an essay in 1960 describing his worries about a world in which “machines learn” and “develop unforeseen strategies at rates that baffle their programmers.”

The alignment problem was first dramatized in the 1968 film 2001: A Space Odyssey. Marvin Minsky, another AI pioneer, served as a technical consultant for the film. In the movie, the HAL 9000 computer that provides the onboard AI for the spaceship Discovery One begins to behave in ways that are at odds with the interests of the crew members. The AI alignment problem surfaces when HAL’s objectives diverge from those of the human crew.

When HAL learns of the crew’s plans to disconnect it due to concerns about its behavior, HAL perceives this as a threat to the mission’s success and responds by trying to eliminate the crew members. The message is that if an AI’s objectives are not perfectly aligned with human values and goals, the AI might take actions that are harmful or even deadly to humans, even if it is not explicitly programmed to do so.

Fast forward 55 years, and it is this same alignment concern that animates much of the current doomsday conversation. The worry is that an AI system may take harmful actions even without anybody intending it to do so. Many leading AI organizations are diligently working on this problem. Google DeepMind recently published a paper on how to best assess new, general-purpose AI systems for dangerous capabilities and alignment and to develop an “early warning system” as a critical aspect of a responsible AI strategy.

A classic paradox

Given these two sides of the debate, P(doom) or P(sol), there is no consensus on the future of AI. The question remains: Are we heading toward a doom scenario or a promising future enhanced by AI? This is a classic paradox. On one side is the hope that AI is the best of us and will solve complex problems and save humanity. On the other side is the fear that AI will bring out the worst of us by obfuscating the truth, destroying trust and, ultimately, humanity.

Like all paradoxes, the answer is not clear. What is certain is the need for ongoing vigilance and responsible development in AI. Thus, even if you do not buy into the doomsday scenario, it still makes sense to pursue common-sense regulations to hopefully prevent an unlikely but dangerous situation. The stakes, as the Center for AI Safety has reminded us, are nothing less than the future of humanity itself.

Gary Grossman is SVP of technology practice at Edelman and global lead of the Edelman AI Center of Excellence.