Introduction to Random Forest Algorithm

In data analytics, every algorithm has its place. In practice, though, most business problems involve a classification task, and it can be difficult to know intuitively which method to adopt given the nature of the data. Random Forests have numerous applications across domains such as finance, healthcare, marketing, and more. They are widely used for tasks like fraud detection, customer churn prediction, image classification, and stock market forecasting.

Today we will discuss one of the top classification techniques, and one of the most trusted by data specialists: the Random Forest classifier. Random Forest also has a regression variant, which is covered here as well.

If you want to learn in depth, do check out our free random forest course at Great Learning Academy. Recognising the importance of tree-based classifiers, this course has been curated to help you understand decision trees, random forests, and how to implement them in Python.

The word 'Forest' in the term suggests that it will contain a lot of trees. The algorithm combines a bundle of decision trees to make a classification, and it is also considered a remedy for the overfitting of a single decision tree model. A decision tree model has high variance and low bias, which can give quite unstable output, unlike the commonly adopted logistic regression, which has high bias and low variance. That is where Random Forest comes to the rescue. But before discussing Random Forest in detail, let's take a quick look at the tree concept.

"A decision tree is a classification as well as a regression technique. It works well when making decisions on data by creating branches from a root, which are essentially the conditions present in the data, and providing an output known as a leaf."

For more details, we have a comprehensive article on Decision Trees for you to read.

In the real world, a forest is a combination of trees, and in the machine learning world, a Random Forest is a combination (ensemble) of Decision Trees.

So, let us understand what a decision tree is before we combine trees to create a forest.

Imagine you are about to make a major purchase, say buying a car. Assuming you want the best model that fits your budget, you wouldn't just walk into a showroom and walk out, or rather drive out, with your car, would you?

So, let's assume you want to buy a car for 4 adults and 2 children, you prefer an SUV with maximum fuel efficiency, you would like a little luxury such as good speakers, a sunroof, and cosy seating, and you have shortlisted models A and B.

Model A is recommended by your friend X because the speakers are good and the fuel efficiency is the best.

Model B is recommended by your friend Y because it has 6 comfortable seats, the speakers are good, and the sunroof is good. The fuel efficiency is low, but she feels the other features make it the best.

Model B is recommended by your friend Z as well because it has 6 comfortable seats, the speakers are better, and the sunroof is good. The fuel efficiency is good in her rating.

It is very likely that you would go with Model B, as it has the majority vote from your friends. Each friend has voted considering the features of their choice, using a decision model based on their own logic.

Think of your friends X, Y, and Z as decision trees: you created a random forest with a few decision trees and, based on the results, chose the option recommended by the majority.

This is how a Random Forest classifier works.
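The friend analogy maps directly onto majority voting. Here is a minimal sketch in plain Python; the recommendations come from the example above, while the helper name `majority_vote` is a hypothetical one introduced only for illustration.

```python
from collections import Counter


def majority_vote(votes):
    """Return the option that received the most votes."""
    # most_common(1) returns a list holding the single (option, count) pair
    # with the highest count.
    return Counter(votes).most_common(1)[0][0]


# Each friend plays the role of one decision tree in the forest.
recommendations = {"X": "Model A", "Y": "Model B", "Z": "Model B"}
choice = majority_vote(recommendations.values())
print(choice)  # Model B
```

A real forest does the same thing with many more voters, each trained on its own sample of the data.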

What is Random Forest?

Definition from Wikipedia

Random forests or random decision forests are an ensemble learning method for classification, regression and other tasks that operates by constructing a multitude of decision trees at training time. For classification tasks, the output of the random forest is the class selected by most trees. For regression tasks, the mean or average prediction of the individual trees is returned.

Random Forest Features

Some interesting facts about Random Forests

- The accuracy of Random Forest is generally very high

- Its efficiency is particularly notable on large data sets

- It provides an estimate of the important variables in classification

- Forests that have been generated can be saved and reused

- Unlike many other models, it is less prone to overfitting as more features are added
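Two of the points above, variable importance and saving a forest for reuse, can be sketched with scikit-learn. The synthetic dataset from `make_classification` and the use of Python's `pickle` module are assumptions made for illustration, not part of the case studies later in this article.

```python
import pickle

import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data (an assumption for illustration): 6 features, 3 informative.
X, y = make_classification(n_samples=300, n_features=6, n_informative=3,
                           n_redundant=0, random_state=0)

forest = RandomForestClassifier(n_estimators=50, random_state=0).fit(X, y)

# Impurity-based importances: one value per feature, normalised to sum to 1.
importances = forest.feature_importances_
print(np.round(importances, 3))

# Serialise the fitted forest to bytes and load it back for reuse.
restored = pickle.loads(pickle.dumps(forest))
same_predictions = np.array_equal(forest.predict(X), restored.predict(X))
print(same_predictions)
```

In practice the bytes would be written to a file, and the restored forest should be loaded with the same library version that saved it.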

How does random forest work?

Let's get it working

A random forest is a collection of Decision Trees. Each tree independently makes a prediction, and the values are then averaged (regression) or max voted (classification) to arrive at the final value.
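To see the averaging step concretely, the sketch below fits a small scikit-learn regressor and checks that averaging the individual trees' predictions by hand gives the same answer as the forest. The synthetic data from `make_regression` is an assumption for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor

# Synthetic regression data (an assumption for illustration).
X, y = make_regression(n_samples=200, n_features=4, noise=10.0, random_state=0)
forest = RandomForestRegressor(n_estimators=20, random_state=0).fit(X, y)

# Predictions of every individual tree: shape (n_trees, n_samples).
per_tree = np.array([tree.predict(X[:5]) for tree in forest.estimators_])

# Averaging over the trees reproduces the forest's own prediction.
manual_average = per_tree.mean(axis=0)
matches = np.allclose(manual_average, forest.predict(X[:5]))
print(matches)
```

For classification, scikit-learn's forest averages the class probabilities predicted by the trees rather than counting hard votes, which usually, but not always, gives the same class as a simple majority vote.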

The strength of this model lies in creating different trees from different subsets of the features. The features selected for each tree are random, so the trees do not get deep and each one focuses only on its own set of features.

Finally, when they are put together, we create an ensemble of Decision Trees that provides a well-learned prediction.

An Illustration of Building a Random Forest

Let us now build a Random Forest model for, say, buying a car.

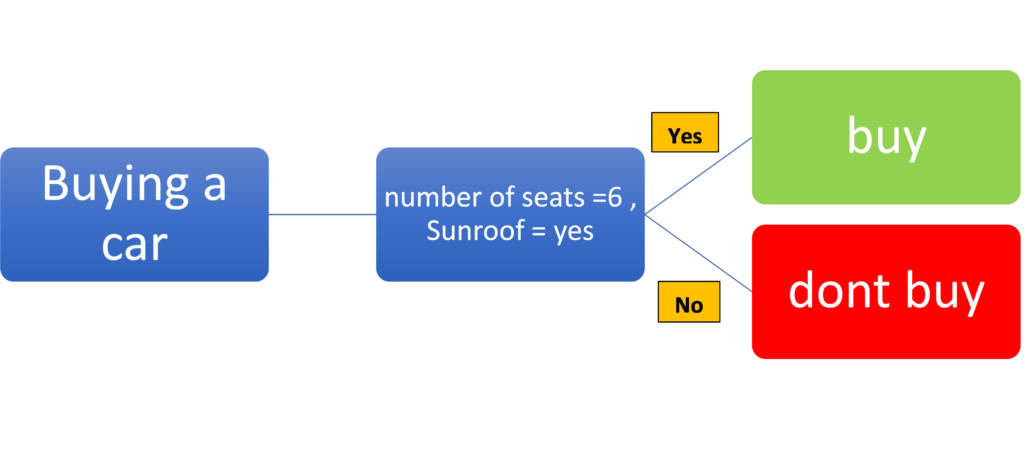

One of the decision trees could be checking for features such as the number of seats and sunroof availability and deciding yes or no.

Here the decision tree considers the number of seats parameter to be greater than 6, as the customer prefers an SUV, and prefers a car with a sunroof. The tree would give the highest value to the model that satisfies both criteria, rate it lower if either of the parameters is not met, and rate it lowest if both parameters are No. Let us see an illustration of the same below:

Another decision tree could be checking for features such as quality of stereo, comfort of seats, and sunroof availability and decide yes or no. This would also rate the model based on the outcome of these parameters and decide yes or no depending upon the criteria met. The same has been illustrated below.

Another decision tree could be checking for features such as number of seats, comfort of seats, fuel efficiency, and sunroof availability and decide yes or no. The decision tree for the same is given below.

Each decision tree may give you a Yes or No based on the data set. The trees are independent, and a decision made using a single tree depends purely on the features that particular tree looks at. If a decision tree considers all the features, the depth of the tree keeps increasing, causing an overfit model.

A more efficient way would be to combine these decision trees and create an ultimate decision maker based on the output from each tree. That is a random forest.

Once we receive the output from every decision tree, we use the majority vote to arrive at the decision. To use this as a regression model, we would take an average of the values.

Let us see how a random forest would look for the above scenario.

The data for each tree is selected using a method called bagging, which selects a random set of data points from the data set for each tree. The data selected can be used again (with replacement) or kept aside (without replacement). Each tree also randomly picks its features based on the subset of data provided. This randomness makes it possible to estimate feature importance: the feature that influences the decision in the majority of the trees would be the feature of maximum importance.
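The row and feature sampling described above can be sketched with NumPy alone. The sizes used here (1000 rows, 9 features, 3 features per tree) are arbitrary assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_rows = 1000
row_ids = np.arange(n_rows)

# Bagging: draw n_rows row indices with replacement for one tree,
# so some rows appear several times and others not at all.
bootstrap_ids = rng.choice(row_ids, size=n_rows, replace=True)

# Rows that were never drawn are "out of bag" for this tree.
oob_ids = np.setdiff1d(row_ids, bootstrap_ids)
oob_fraction = len(oob_ids) / n_rows
print(round(oob_fraction, 3))  # typically close to 1/e, about 0.37

# A random subset of feature columns for the same tree (no repeats).
n_features = 9
feature_ids = rng.choice(n_features, size=3, replace=False)
print(sorted(feature_ids))
```

The out-of-bag rows are useful later: because the tree never saw them, they can act as a built-in validation set.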

Once the trees are built with a subset of data and their own set of features, each tree independently executes to provide its decision. This decision will be a Yes or No in the case of classification.

The trees are then aggregated into an ensemble, which helps reduce classification errors. The final output is determined by the max vote method for classification.

Let us see an illustration of the same below.

Each decision tree decides independently based on its own subset of data and features, so the results will not all be the same. Assuming Decision Tree 1 suggests 'Buy', Decision Tree 2 suggests 'Don't Buy', and Decision Tree 3 suggests 'Buy', the max vote would be for Buy, and the result from the Random Forest would be 'Buy'.

Each tree has 3 main types of node:

- Root Node

- Leaf Node

- Decision Node

The node where the final decision is made is called the 'Leaf Node', the test that decides which branch to follow is made in the 'Decision Node', and the 'Root Node' is the topmost node, where the data enters the tree.

Please note that the features selected are random and may repeat across trees; this increases efficiency and helps compensate for missing data. While splitting a node, only a subset of features is taken into consideration and the best feature among this subset is used for splitting; this diversity results in better efficiency.

When we create a Random Forest machine learning model, the decision trees are created based on random subsets of features, and the trees are split further and further. The entropy, or the information gained, is an important parameter used to decide the tree split. When branches are created, the total (size-weighted) entropy of the sub-branches should be less than the entropy of the parent node. If a split no longer reduces the entropy, the information gained is close to zero, which is a criterion used to stop further splitting of the tree. You can learn more with the help of a random forest machine learning course.
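As a worked example of entropy and information gain, here is a small sketch in plain Python. The function names `entropy` and `information_gain` and the toy Buy / Don't Buy labels are assumptions introduced for illustration.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((count / total) * math.log2(count / total)
                for count in Counter(labels).values())


def information_gain(parent, children):
    """Parent entropy minus the size-weighted entropy of the child nodes."""
    total = len(parent)
    weighted = sum(len(child) / total * entropy(child) for child in children)
    return entropy(parent) - weighted


# A parent node with an even class mix, split into two purer children.
parent = ["Buy"] * 5 + ["Don't Buy"] * 5
left = ["Buy"] * 4 + ["Don't Buy"] * 1
right = ["Buy"] * 1 + ["Don't Buy"] * 4

print(entropy(parent))                                     # 1.0
print(round(information_gain(parent, [left, right]), 3))   # 0.278
```

A split that leaves the children as mixed as the parent would give a gain of zero, which is the stopping signal described above.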

How does it differ from the Decision Tree?

A decision tree offers a single path and considers all the features at once. This may create deeper trees, making the model overfit. A Random Forest creates multiple trees with random features, so the trees are not very deep.

Combining the decision trees into an ensemble also improves efficiency, as it averages the results and so provides more generalised outcomes.

While a decision tree's structure depends largely on the training data and may change drastically even for a slight change in that data, the random selection of features means the structure deviates little when the data changes. With the addition of techniques such as bagging for the selection of data, this can be reduced further.

Having said that, the storage and computational capacity required is greater for a Random Forest than for a decision tree.

In summary, Random Forest generally provides better accuracy and efficiency than a decision tree, at a cost in storage and computational power.

Let's Regularize through Hyperparameters

Hyperparameters give us a certain degree of control over the model to ensure better efficiency. Some of the commonly tuned hyperparameters are below.

n_estimators = This parameter determines the number of trees in the forest. The higher the number, the more robust the aggregate model, but that costs more computational power.

max_depth = This parameter restricts the number of levels of each tree. Creating more levels increases the possibility of considering more features in each tree. A deep tree would create an overfit model, but in Random Forest this is mitigated because we ensemble at the end.

max_features = This parameter restricts the maximum number of features to be considered (in scikit-learn, at each split rather than once per tree). This is one of the vital parameters in deciding efficiency. Generally, a grid search with cross-validation is performed over various values of this parameter to arrive at the ideal value.

bootstrap = This decides the method used for sampling data points: with or without replacement. In scikit-learn, setting it to False means the whole dataset is used to build each tree.

max_samples = This decides the fraction of the training data that is drawn to train each tree. This parameter is generally not touched, as the samples that are not used for training (out-of-bag data) can be used for evaluating the forest, and it is preferred to use the entire training data set for training the forest.
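The hyperparameters listed above can be set directly on scikit-learn's `RandomForestClassifier`. The sketch below is one possible setup under stated assumptions: the synthetic data, the specific values, and the small `max_features` grid are illustrative choices rather than recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

# Synthetic data (an assumption for illustration).
X, y = make_classification(n_samples=300, n_features=8, n_informative=4,
                           random_state=0)

forest = RandomForestClassifier(
    n_estimators=50,   # number of trees in the forest
    max_depth=5,       # maximum number of levels per tree
    bootstrap=True,    # sample rows with replacement for each tree
    max_samples=0.8,   # fraction of rows drawn for each tree
    oob_score=True,    # score the forest on the out-of-bag rows
    random_state=0,
)

# Grid search with 3-fold cross-validation over max_features.
search = GridSearchCV(forest, {"max_features": [2, "sqrt", None]}, cv=3)
search.fit(X, y)

print(search.best_params_)
print(round(search.best_estimator_.oob_score_, 3))
```

Setting `oob_score=True` is what turns the unused (out-of-bag) rows into a free validation estimate, which is why `max_samples` is often left alone.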

Real-World Random Forests

Being a machine learning model that can be used for both classification and prediction, combined with good efficiency, this is a popular model in various arenas.

Random Forest can be applied to any multi-dimensional data set, so it is a popular choice when it comes to determining customer loyalty in retail, predicting stock prices in finance, recommending products to customers, and even determining the right composition of chemicals in the manufacturing industry.

With its ability to do both prediction and classification, it produces better efficiency than most of the classical models in most of these arenas.

Real-Time Use Cases

Random Forest has been the go-to model for price prediction and for fraud detection in financial statements. Various research papers published in these areas recommend Random Forest as the most accurate model. (Ref1, 2)

The Random Forest model has proved to give good accuracy in predicting disease based on the features (Ref-3)

The Random Forest model has been used to detect Parkinson-related lesions within the midbrain in 3D transcranial ultrasound. This was developed by training the model to understand the organ arrangement, size, and shape from prior knowledge, with the leaf nodes predicting the organ class and spatial location. With this, it provides improved class predictability (Ref 4)

Moreover, a random forest works on both the observations and the variables of the training data when growing individual decision trees, and takes the majority vote for classification and the overall average for regression problems. Plain bagging draws observations at random but selects all columns, so the same significant variables tend to appear at the root of every decision tree. Trees built this way depend on each other, which penalises accuracy, so a random forest samples the columns as well. We have a rule of thumb for choosing the number of columns when using random forest. If we take 2/3 of the observations as training data and p is the number of columns, then

- For classification, we take sqrt(p) columns

- For regression, we take p/3 columns.

The above rule of thumb can be tuned if you want to increase the accuracy of the model.
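The rule of thumb is simple enough to write down as a function. The name `default_feature_count` and the rounding choices (nearest integer for the square root, integer division for p/3, never fewer than one column) are assumptions made for this sketch.

```python
import math


def default_feature_count(p, task):
    """Rule-of-thumb number of columns to sample, given p columns in total."""
    if task == "classification":
        return max(1, round(math.sqrt(p)))
    if task == "regression":
        return max(1, p // 3)
    raise ValueError("task must be 'classification' or 'regression'")


print(default_feature_count(16, "classification"))  # 4
print(default_feature_count(12, "regression"))      # 4
```

These values are starting points; treating the count as a tunable hyperparameter is the usual next step.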

Let us interpret both the bagging and random forest techniques, where we draw two samples, one in blue and another in pink.

From the above diagram, we can see that the bagging technique has selected a few observations but all columns. On the other hand, Random Forest selected a few observations and a few columns to create uncorrelated individual trees.

A sample idea of a random forest classifier is given below

The above diagram gives us an idea of how each tree has grown and how the depth of the trees varies with the sample selected; at the end of the process, voting is performed for the final classification. Averaging is performed instead when we deal with a regression problem.

Classifier Vs. Regressor

A random forest classifier works with data having discrete labels, better known as classes.

Example: whether a patient is suffering from cancer or not, whether a person is eligible for a loan or not, etc.

A random forest regressor works with data having a numeric or continuous output, which cannot be defined by classes.

Example: the price of houses, the milk production of cows, the gross income of companies, etc.

Advantages and Disadvantages of Random Forest

- It reduces overfitting in decision trees and helps to improve the accuracy

- It is flexible enough for both classification and regression problems

- It works well with both categorical and continuous values

- It can handle missing values present in the data, depending on the implementation

- Normalising the data is not required, as it uses a rule-based approach.

However, despite these advantages, a random forest algorithm also has some drawbacks.

- It requires a lot of computational power and resources, as it builds numerous trees and combines their outputs.

- It also requires a lot of training time, as it combines many decision trees to determine the class.

- Because it is an ensemble of decision trees, it is harder to interpret, and it is difficult to determine the exact significance of each variable.

Applications of Random Forest

Banking Sector

Banking analysis requires a lot of effort, as it carries a high risk of profit and loss. Customer analysis is one of the most common studies in the banking sector. For problems such as estimating the chance that a customer defaults on a loan, or detecting a fraudulent transaction, random forest can be a great choice.

The above illustration is a tree which decides whether a customer is eligible for loan credit based on conditions such as account balance, duration of credit, payment status, etc.

Healthcare Sectors

In the pharmaceutical industry, random forest can be used to identify the potential of a certain medicine or the composition of chemicals required for medicines. It can also be used in hospitals to identify the diseases suffered by a patient, the risk of cancer in a patient, and many other diseases where early analysis and diagnosis play a crucial role.

Credit Card Fraud Detection

Applying Random Forest with Python and R

We will perform case studies in Python and R for both Random Forest regression and classification techniques.

Random Forest Regression in Python

For regression, we will be dealing with data that contains the salaries of employees based on their position. We will use this to predict the salary of an employee based on their position.

Let us take care of the libraries and the data:

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

df = pd.read_csv('Salaries.csv')

df.head()

X = df.iloc[:, 1:2].values

y = df.iloc[:, 2].values

As the dataset is very small, we won't perform any splitting. We will proceed directly to fitting the data.

from sklearn.ensemble import RandomForestRegressor

model = RandomForestRegressor(n_estimators = 10, random_state = 0)

model.fit(X, y)

Did you notice that we have made just 10 trees by setting n_estimators=10? It is up to you to play around with the number of trees. As it is a small dataset, 10 trees are enough.

Now we will predict the salary of a person who has a level of 6.5

y_pred = model.predict([[6.5]])

After prediction, we can see that the employee should get a salary of 167000 after reaching a level of 6.5. Let us visualise it to interpret it in a better way.

X_grid_data = np.arange(X.min(), X.max(), 0.01)

X_grid_data = X_grid_data.reshape((len(X_grid_data), 1))

plt.scatter(X, y, color="red")

plt.plot(X_grid_data, model.predict(X_grid_data), color="blue")

plt.title('Random Forest Regression')

plt.xlabel('Position')

plt.ylabel('Salary')

plt.show()

Random Forest Regression in R

Now we will build the same model in R and see how it affects the prediction

We will first import the dataset:

df = read.csv('Position_Salaries.csv')

df = df[2:3]

In R too, we won't perform splitting as the data is too small. We will use the entire data for training and make an individual prediction as we did in Python

We will use the 'randomForest' library. In case you haven't installed the package, the code below will help you out.

install.packages('randomForest')

library(randomForest)

set.seed(1234)

The seed function will help you get the same result that we got during training and testing.

model = randomForest(x = df[-2], y = df$Salary, ntree = 500)

Now we will predict the salary of a level 6.5 employee and see how much it differs from the one predicted using Python.

y_prediction = predict(model, data.frame(Level = 6.5))

As we see, the prediction gives a salary of 160908, but in Python we got a prediction of 167000. It is up to the data analyst to decide which algorithm works better. We are done with the prediction. Now it's time to visualise the data

install.packages('ggplot2')

library(ggplot2)

x_grid_data = seq(min(df$Level), max(df$Level), 0.01)

ggplot() + geom_point(aes(x = df$Level, y = df$Salary), color = "red") + geom_line(aes(x = x_grid_data, y = predict(model, newdata = data.frame(Level = x_grid_data))), color = "blue") + ggtitle('Truth or Bluff (Random Forest Regression)') + xlab('Level') + ylab('Salary')

So that is regression using R. Now let us quickly move to the classification part to see how Random Forest works.

Random Forest Classifier in Python

For classification, we will use the Social Networking Ads data, which contains information about whether a product was purchased based on the age and salary of a person. Let us import the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

Now let us see the dataset:

df = pd.read_csv('Social_Network_Ads.csv')

df

For your information, the dataset contains 400 rows and 5 columns.

X = df.iloc[:, [2, 3]].values

y = df.iloc[:, 4].values

Now we will split the data for training and testing. We will take 75% for training and the rest for testing.

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

Now we will standardise the data using StandardScaler from the sklearn library.

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

After scaling, let us see the head of the data now.

Now it's time to fit our model.

from sklearn.ensemble import RandomForestClassifier

model = RandomForestClassifier(n_estimators = 10, criterion = 'entropy', random_state = 0)

model.fit(X_train, y_train)

We have built 10 trees and set criterion to 'entropy', so splits are chosen to reduce the impurity in the data as measured by entropy. You can increase the number of trees if you wish, but we are keeping it limited to 10 for now.

Now the fitting is over. We will predict the test data.

y_prediction = model.predict(X_test)

After prediction, we can evaluate with a confusion matrix and see how well our model performs.

from sklearn.metrics import confusion_matrix

conf_mat = confusion_matrix(y_test, y_prediction)

Great. As we see, our model is doing well, as the rate of misclassification is very low, which is desirable. Now let us visualise our training result.

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01), np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, model.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape), alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):
    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1], color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Random Forest Classification (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

Now let us visualise the test result in the same way.

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01), np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, model.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape), alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):
    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1], color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Random Forest Classification (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()
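To put a number on a confusion matrix like the one computed during evaluation, the accuracy and misclassification rate can be read straight off its cells. The labels below are toy values made up for illustration; they are not the results of the model in this article.

```python
import numpy as np
from sklearn.metrics import confusion_matrix

# Toy labels (made up for illustration, not this article's results).
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 0, 1, 0])
y_hat = np.array([0, 0, 1, 0, 1, 1, 0, 0, 1, 0])

# Rows are actual classes, columns are predicted classes.
cm = confusion_matrix(y_true, y_hat)
tn, fp, fn, tp = cm.ravel()

accuracy = (tp + tn) / cm.sum()
misclassification_rate = (fp + fn) / cm.sum()

print(cm)
print(accuracy, misclassification_rate)
```

The same arithmetic applies to the `conf_mat` computed from `y_test` and `y_prediction`.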

So that's it for now. We will move on to build the same model in R.

Random Forest Classifier in R

Let us import the dataset and check the head of the data

df = read.csv('Social_Network_Ads.csv')

df = df[3:5]

Now in R, we need to convert the class to a factor, so we need further encoding.

df$Purchased = factor(df$Purchased, levels = c(0, 1))

Now we will split the data and see the result. The splitting ratio will be the same as we used in Python.

install.packages('caTools')

library(caTools)

set.seed(123)

split_data = sample.split(df$Purchased, SplitRatio = 0.75)

training_set = subset(df, split_data == TRUE)

test_set = subset(df, split_data == FALSE)

Also, we will standardise the data and see how it performs while testing.

training_set[-3] = scale(training_set[-3])

test_set[-3] = scale(test_set[-3])

Now we fit the model using the 'randomForest' package for R.

install.packages('randomForest')

library(randomForest)

set.seed(123)

model = randomForest(x = training_set[-3], y = training_set$Purchased, ntree = 10)

We set the number of trees to 10 to see how it performs. We can set any number of trees to improve accuracy.

y_prediction = predict(model, newdata = test_set[-3])

Now the prediction is over and we will evaluate using a confusion matrix.

conf_mat = table(test_set[, 3], y_prediction)

conf_mat

As we see, the model underperforms compared to Python, as the rate of misclassification is high.

Now let us interpret our result using visualisation. We will be using the ElemStatLearn method for smooth visualisation.

library(ElemStatLearn)

train_set = training_set

X1 = seq(min(train_set[, 1]) - 1, max(train_set[, 1]) + 1, by = 0.01)

X2 = seq(min(train_set[, 2]) - 1, max(train_set[, 2]) + 1, by = 0.01)

grid_set = expand.grid(X1, X2)

colnames(grid_set) = c('Age', 'EstimatedSalary')

y_grid = predict(model, grid_set)

plot(train_set[, -3], main = 'Random Forest Classification (Training set)', xlab = 'Age', ylab = 'Estimated Salary', xlim = range(X1), ylim = range(X2))

contour(X1, X2, matrix(as.numeric(y_grid), length(X1), length(X2)), add = TRUE)

points(grid_set, pch = '.', col = ifelse(y_grid == 1, 'springgreen3', 'tomato'))

points(train_set, pch = 21, bg = ifelse(train_set[, 3] == 1, 'green4', 'red3'))

The model works fine, as is evident from the visualisation of the training data. Now let us see how it performs with the test data.

library(ElemStatLearn)

testset = test_set

X1 = seq(min(testset[, 1]) - 1, max(testset[, 1]) + 1, by = 0.01)

X2 = seq(min(testset[, 2]) - 1, max(testset[, 2]) + 1, by = 0.01)

grid_set = expand.grid(X1, X2)

colnames(grid_set) = c('Age', 'EstimatedSalary')

y_grid = predict(model, grid_set)

plot(testset[, -3], main = 'Random Forest Classification (Test set)', xlab = 'Age', ylab = 'Estimated Salary', xlim = range(X1), ylim = range(X2))

contour(X1, X2, matrix(as.numeric(y_grid), length(X1), length(X2)), add = TRUE)

points(grid_set, pch = '.', col = ifelse(y_grid == 1, 'springgreen3', 'tomato'))

points(testset, pch = 21, bg = ifelse(testset[, 3] == 1, 'green4', 'red3'))

That's it for now. The test data worked fine, as expected.

Inference

Random Forest works well when we are trying to avoid the overfitting that comes from building a single decision tree. It also works fine when the data mostly contains categorical variables. Other algorithms like logistic regression can outperform it when it comes to numeric variables, but when it comes to making a decision based on conditions, the random forest is often the best choice. It is up to the analyst to play around with the parameters to improve accuracy. There is generally less chance of overfitting, as it uses a rule-based approach. But yet again, it depends on the data and the analyst to choose the best algorithm. Random Forest is a very popular machine learning model because it provides good efficiency and its decision making is similar to human thinking. The ability to know the feature importance helps us explain the model, even though it is more of a black-box model. The efficiency it provides and its resistance to overfitting are the great advantages of this model. It can be used in virtually any industry, and the research papers published are evidence of the efficacy of this simple yet great model.

If you wish to learn more about Random Forest or other machine learning algorithms, upskill with Great Learning's PG Program in Machine Learning.