
We all know enterprises are racing at varying speeds to analyze and reap the benefits of generative AI, ideally in a smart, secure and cost-effective way. Survey after survey over the last year has shown this to be true.

But once an organization identifies a large language model (LLM), or several, that it wishes to use, the hard work is far from over. In fact, deploying the LLM in a way that benefits an organization requires understanding the best prompts employees or customers can use to generate helpful results (otherwise it’s pretty much worthless), as well as what data to include in those prompts from the organization or user.

“You can’t just take a Twitter demo [of an LLM] and put it into the real world,” Aparna Dhinakaran, cofounder and chief product officer of Arize AI, said in an exclusive video interview with VentureBeat. “It’s actually going to fail. And so how do you know where it fails? And how do you know what to improve? That’s what we focus on.”

Introducing ‘Prompt Playground’

Three-year-old business-to-business (B2B) machine learning (ML) software provider Arize AI would know, as it has since day one been focused on making AI more observable (less technical and more understandable) to organizations.

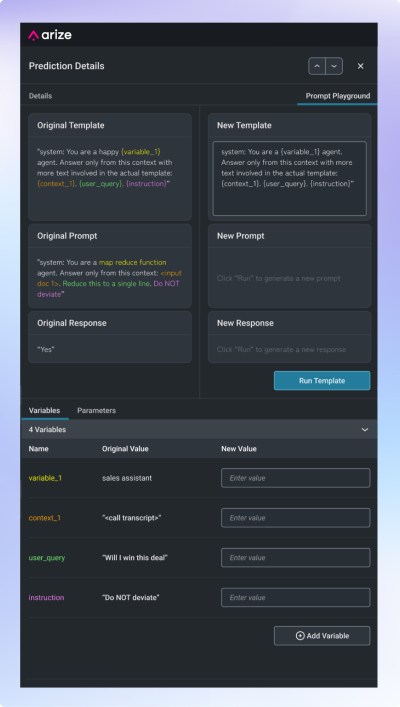

Today, the VB Transform award-winning company announced at Google’s Cloud Next ’23 conference industry-first capabilities for optimizing the performance of LLMs deployed by enterprises, including a new “Prompt Playground” for selecting between and iterating on stored prompts designed for enterprises, and a new retrieval augmented generation (RAG) workflow to help organizations understand what data of theirs would be helpful to include in an LLM’s responses.

Almost a year ago, Arize debuted its initial platform in the Google Cloud Marketplace. Now it’s augmenting its presence there with these powerful new features for its enterprise customers.

Prompt Playground and new workflows

Arize’s new prompt engineering workflows, including Prompt Playground, enable teams to uncover poorly performing prompt templates, iterate on them in real time and verify improved LLM outputs before deployment.

Prompt analysis is an important but often overlooked part of troubleshooting an LLM’s performance, which can be boosted simply by testing different prompt templates or iterating on one for better responses.

With these new workflows, teams can easily:

- Uncover responses with poor user feedback or evaluation scores

- Identify the underlying prompt template associated with poor responses

- Iterate on the existing prompt template to improve coverage of edge cases

- Compare responses across prompt templates in the Prompt Playground prior to implementation
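The first two steps in the list above can be illustrated with a minimal sketch. It assumes logged responses are available as pairs of a prompt template ID and a user feedback score between 0 and 1; the record layout, the template names and the `rank_templates` helper are all hypothetical and are not taken from Arize’s product or API.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical logged records: (prompt_template_id, user_feedback_score in [0, 1]).
records = [
    ("support_v1", 0.2),
    ("support_v1", 0.4),
    ("support_v2", 0.9),
    ("support_v2", 0.7),
    ("faq_v1", 0.5),
]

def rank_templates(records, threshold=0.5):
    """Return (template_id, mean_score) pairs scoring below threshold, worst first."""
    scores = defaultdict(list)
    for template_id, score in records:
        scores[template_id].append(score)
    # Average the feedback per template, then keep only the weak ones.
    averages = {t: mean(s) for t, s in scores.items()}
    flagged = [(t, m) for t, m in averages.items() if m < threshold]
    return sorted(flagged, key=lambda pair: pair[1])

flagged = rank_templates(records)
for template_id, avg in flagged:
    print(f"{template_id}: mean feedback {avg:.2f}")
```

In this toy data only `support_v1` falls below the assumed 0.5 cutoff, so it would be the template to iterate on and compare against alternatives.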

As Dhinakaran explained, prompt engineering is absolutely key to staying competitive with LLMs in the market today. The company’s new prompt analysis and iteration workflows help teams ensure their prompts cover necessary use cases and potential edge scenarios that may come up with real users.

“You’ve got to make sure that the prompt you’re putting into your model is pretty damn good to stay competitive,” said Dhinakaran. “What we launched helps teams engineer better prompts for better performance. That’s as simple as it is: We help you focus on making sure that that prompt is performant and covers all of these cases that you need it to handle.”

Understanding private data

For example, prompts for an education LLM chatbot need to ensure no inappropriate responses, while customer service prompts should cover potential edge cases and nuances around services offered or not offered.

Arize is also providing the industry’s first insights into the private or contextual data that influences LLM outputs, what Dhinakaran called the “secret sauce” companies provide. The company uniquely analyzes embeddings to evaluate the relevance of private data fused into prompts.

“What we rolled out is a way for AI teams to now monitor, look at their prompts, make it better and then specifically understand the private data that’s now being put into these prompts, because the private data part makes sense,” Dhinakaran said.

Dhinakaran told VentureBeat that enterprises can deploy its solutions on premises for security reasons, and that they are SOC 2 compliant.

The importance of private organizational data

These new capabilities enable examination of whether the right context is present in prompts to handle real user queries. Teams can identify areas where they may need to add more content around common questions lacking coverage in the current knowledge base.

“No one else out there is really focusing on troubleshooting this private data, which is really like the secret sauce that companies have to influence the prompt,” Dhinakaran noted.

Arize also launched complementary workflows using search and retrieval to help teams troubleshoot issues stemming from the retrieval component of RAG models.

These workflows will empower teams to pinpoint where they may need to add more context into their knowledge base, identify cases where retrieval didn’t surface the most relevant information, and ultimately understand why their LLM may have hallucinated or generated suboptimal responses.

Understanding context and relevance, and where they’re lacking

Dhinakaran gave an example of how Arize looks at query and knowledge base embeddings to uncover irrelevant retrieved documents that may have led to a faulty response.

“You can click on, let’s say, a user question in our product, and it’ll show you all of the relevant documents that it could have pulled, and which one it did finally pull to actually use in the response,” Dhinakaran explained. Then “you can see where the model may have hallucinated or provided suboptimal responses based on deficiencies in the knowledge base.”
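To make the idea of comparing query and knowledge base embeddings concrete, here is a minimal sketch that scores each retrieved document against the query with cosine similarity and flags the ones that fall below a cutoff. The toy three-dimensional vectors, the document names, the 0.5 cutoff and the `flag_irrelevant` helper are assumptions for illustration only; the article does not describe how Arize computes relevance internally.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def flag_irrelevant(query_vec, retrieved, min_similarity=0.5):
    """Return ids of retrieved documents whose similarity to the query is below the cutoff."""
    return [
        doc_id
        for doc_id, vec in retrieved.items()
        if cosine_similarity(query_vec, vec) < min_similarity
    ]

# Hypothetical toy embeddings; real embeddings have hundreds of dimensions.
query = [1.0, 0.0, 0.0]
retrieved = {
    "refund_policy": [0.9, 0.1, 0.0],
    "office_hours": [0.0, 1.0, 0.0],
}

irrelevant = flag_irrelevant(query, retrieved)
print(irrelevant)
```

Here `office_hours` is orthogonal to the query, so it is flagged as a retrieved document that probably did not help the response, while `refund_policy` passes the cutoff.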

This end-to-end observability and troubleshooting of prompts, private data and retrieval is designed to help teams optimize LLMs responsibly after initial deployment, when models invariably struggle to handle real-world variability.

Dhinakaran summarized Arize’s focus: “We’re not just a day one solution; we help you actually ongoing get it to work.”

The company aims to provide the monitoring and debugging capabilities organizations are missing, so they can continually improve their LLMs post-deployment. This allows them to move past theoretical value to real-world impact across industries.