Large Language Models (LLMs) like GPT-3 and ChatGPT have revolutionized AI by providing Natural Language Understanding and content generation capabilities. But their development comes at a hefty price, limiting accessibility and further research. Researchers estimate that training GPT-3 cost OpenAI around $5 million. Nevertheless, Microsoft recognized the potential and invested $1 billion in 2019 and $10 billion in 2023 in OpenAI’s GPT-3 and ChatGPT venture.

LLMs are machine learning models trained on extensive textual data for NLP applications. They are based on the transformer architecture and use attention mechanisms for NLP tasks such as question answering, machine translation, and sentiment analysis.

The question arises: can the efficiency of these large models be increased while simultaneously reducing computational cost and training time?

Several approaches, such as Progressive Neural Networks, Network Morphism, intra-layer model parallelism, and knowledge inheritance, have been developed to reduce the computational cost of training neural networks. The novel LiGO (Linear Growth Operator) approach discussed here sets a new benchmark: it roughly halves the computational cost of training LLMs.

Before discussing this technique, it is essential to examine the factors that contribute to the high cost of building LLMs.

Cost of Building Large Language Models

The three major expenses in developing LLMs are as follows:

1. Computational Resources

Building LLMs requires massive computational resources to train on large datasets. The models must process billions of parameters and learn complex patterns from massive textual data.

Investment in specialized hardware such as Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) is required for building and training LLMs to achieve state-of-the-art performance.

For instance, GPT-3 was trained on a supercomputer with 10,000 enterprise-grade V100 GPUs and 285,000 CPU cores.

2. Energy Consumption

The extensive computational resources required for building LLMs result in significant energy consumption. For instance, training the 175-billion-parameter GPT-3 took 14.8 days using 10,000 V100 GPUs, equivalent to 3.55 million GPU hours. Such a high level of energy consumption has significant environmental effects as well.
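The GPU-hour figure follows directly from the training duration and the GPU count. As a quick check of that arithmetic (the helper name `gpu_hours` is made up for this illustration):

```python
def gpu_hours(days: float, num_gpus: int) -> float:
    """Total GPU hours: wall-clock hours multiplied by the number of GPUs."""
    return days * 24 * num_gpus


# Figures quoted in the article: 14.8 days on 10,000 V100 GPUs.
total = gpu_hours(days=14.8, num_gpus=10_000)
print(f"{total / 1e6:.2f} million GPU hours")  # prints "3.55 million GPU hours"
```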

3. Data Storage & Management

LLMs are trained on large datasets. For instance, GPT-3 was trained on a vast corpus of textual data, including Common Crawl, WebText2, Books1, Books2, and Wikipedia, among other sources. Significant infrastructure investment is required to collect, curate, and store these datasets.

Cloud storage is also required to hold the data, along with human expertise for data preprocessing and version control. Moreover, ensuring that your data strategy complies with regulations like GDPR adds to the cost.

LiGO Technique: Reduce the Cost of Building Large Language Models by Half

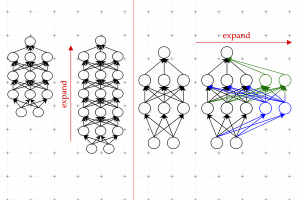

LiGO (Linear Growth Operator) is a novel technique developed by researchers at MIT to reduce the computational cost of training LLMs by roughly 50%. The method initializes the weights of larger models from those of smaller pre-trained models, enabling efficient scaling of neural networks.

Image from the paper: Learning to Grow Pretrained Models For Efficient Transformer Training

Yoon Kim, the senior author of the paper, says:

“It’s been estimated that training models at the scale of what ChatGPT is hypothesized to run on could take millions of dollars just for a single training run. Can we improve the efficiency of these training methods, so we can still get good models in less time and for less money? We propose to do this by leveraging smaller language models that have previously been trained.”

This method maintains the performance benefits of larger models while reducing computational cost and training time compared to training a large model from scratch. LiGO uses a data-driven linear growth operator that combines depth and width operators for optimal performance.
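To make the idea of combining width and depth operators more concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's implementation: the function names (`grow_width`, `grow_depth`), the toy weights, and the hand-picked expansion and mixing matrices are all hypothetical. In LiGO the operator is data-driven, meaning these matrices would be learned rather than fixed by hand.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    """Return the transpose of a matrix given as a list of rows."""
    return [list(col) for col in zip(*m)]


def grow_width(w_small, expand_out, expand_in):
    """Width growth: map a small weight matrix to B @ W @ A^T."""
    return matmul(matmul(expand_out, w_small), transpose(expand_in))


def grow_depth(layers, mix):
    """Depth growth: each new layer is a linear combination of existing layers."""
    rows, cols = len(layers[0]), len(layers[0][0])
    return [
        [[sum(c * layer[i][j] for c, layer in zip(coeffs, layers))
          for j in range(cols)] for i in range(rows)]
        for coeffs in mix
    ]


# Toy "small model": two layers with 2x2 weights (assumed values).
small_layers = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]

# Hand-picked 4x2 expansion that duplicates neurons (assumption; learned in LiGO).
expand = [[1, 0], [0, 1], [1, 0], [0, 1]]

# Hand-picked 3x2 depth mixing: the third layer copies the second (assumption).
mix = [[1, 0], [0, 1], [0, 1]]

widened = [grow_width(w, expand, expand) for w in small_layers]
large_layers = grow_depth(widened, mix)
print(len(large_layers), "layers of shape",
      len(large_layers[0]), "x", len(large_layers[0][0]))
```

With these fixed matrices the sketch reduces to neuron duplication and layer stacking. The point of a learned operator is that better combinations of the small model's weights can be found from data before regular training of the larger model begins.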

The paper used various datasets to conduct text-based experiments, including the English Wikipedia corpus for training BERT and RoBERTa models and the C4 dataset for training GPT2.

The LiGO experiments included growing BERT-Small to BERT-Base, BERT-Base to BERT-Large, RoBERTa-Small to RoBERTa-Base, GPT2-Base to GPT2-Medium, and CaiT-XS to CaiT-S.

The researchers compared their approach with several baselines, including training from scratch, progressive training, bert2BERT, and KI.

The LiGO technique offered 44.7% savings in FLOPs (floating-point operations) and 40.7% savings in wall time compared to training BERT-Base from scratch, by reusing the BERT-Small model. The LiGO growth operator outperforms StackBERT, MSLT, bert2BERT, and KI in efficient training.

Benefits of Using a Training Optimization Technique Like LiGO

LiGO is an efficient neural network training method with several benefits, listed as follows:

1. Faster Training

As stated earlier, faster training is the main advantage of the LiGO technique. It trains LLMs in roughly half the time, increasing productivity and reducing costs.

2. Resource Efficient

LiGO is resource-efficient because it reduces wall time and FLOPs, leading to a more cost-effective and eco-friendly approach to training large transformer models.

3. Generalization

The LiGO technique has improved the performance of both language and vision transformers, suggesting that it is a generalizable technique that can be applied to various tasks.

Building commercial AI products is just one aspect of the overall expenses associated with AI systems. Another significant component of costs comes from daily operations. For instance, it costs OpenAI about $700,000 daily to answer queries using ChatGPT. Researchers are expected to continue exploring approaches that make LLMs cost-effective during training and more accessible at runtime.

For more AI-related content, visit unite.ai.