Machine Learning problems deal with a great amount of data and depend heavily on the algorithms that are used to train the model. There are various approaches and algorithms to train a machine learning model based on the problem at hand. Supervised and unsupervised learning are the two most prominent of these approaches. An important real-life problem, marketing a product or service to a specific target audience, can be easily resolved with the help of a form of unsupervised learning known as Clustering. This article will explain clustering algorithms along with real-life problems and examples. Let us start with understanding what clustering is.

What are Clusters?

The word cluster is derived from an Old English word, 'clyster,' meaning a bunch. A cluster is a group of similar things or people positioned or occurring closely together. Usually, all points in a cluster depict similar characteristics; therefore, machine learning can be used to identify these traits and segregate the clusters. This forms the basis of many machine learning applications that solve data problems across industries.

What’s Clustering?

As the name suggests, clustering involves dividing data points into multiple clusters of similar values. In other words, the objective of clustering is to segregate groups with similar traits and bundle them together into different clusters. It is ideally the implementation of human cognitive capability in machines, enabling them to recognize different objects and differentiate between them based on their natural properties. Unlike humans, a machine finds it very difficult to identify an apple or an orange unless it is properly trained on a huge relevant dataset. Unsupervised learning algorithms, clustering in particular, help achieve this training.

Simply put, clusters are collections of data points that have similar values or attributes, and clustering algorithms are the methods used to group similar data points into different clusters based on their values or attributes.

For example, the data points clustered together can be considered as one group or cluster. Hence the diagram below has two clusters (differentiated by colour for illustration).

Why Clustering?

When you are working with large datasets, an efficient way to analyze them is to first divide the data into logical groupings, aka clusters. This way, you can extract value from a large set of unstructured data. It allows you to look through the data to pull out some patterns or structures before going deeper into analyzing the data for specific findings.

Organizing data into clusters helps identify the data's underlying structure and finds applications across industries. For example, clustering can be used to classify diseases in the field of medical science and can also be used for customer classification in marketing research.

In some applications, data partitioning is the final goal. On the other hand, clustering is also a preparatory step for other artificial intelligence or machine learning problems. It is an efficient technique for knowledge discovery in data in the form of recurring patterns, underlying rules, and more. Try to learn more about clustering in this free course: Customer Segmentation using Clustering

Types of Clustering Methods/Algorithms

Given the subjective nature of clustering tasks, there are various algorithms that suit different types of clustering problems. Each problem has a different set of rules that define similarity between two data points, hence it requires an algorithm that best fits the objective of clustering. Today, there are more than 100 known machine learning algorithms for clustering.

A Few Types of Clustering Algorithms

As the name indicates, connectivity models classify data points based on how close they are to one another. They rest on the notion that data points closer to each other depict more similar characteristics than those located farther away. These algorithms build an extensive hierarchy of clusters that merge with each other at certain distances, so they are not limited to a single partitioning of the dataset.

The choice of distance function is subjective and may vary with each clustering application. There are also two different approaches to addressing a clustering problem with connectivity models. The first starts with every data point in its own cluster and merges the closest clusters step by step (bottom-up). The second starts with the whole dataset as one cluster and partitions it into more and more clusters step by step (top-down). Even though the model is easily interpretable, it lacks the scalability to process bigger datasets.
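A minimal sketch of the bottom-up variant, assuming SciPy is available: `linkage` builds the full merge hierarchy and `fcluster` cuts it at a distance threshold, so the same hierarchy yields different partitions. The toy points and the helper name `connectivity_clusters` are hypothetical, chosen only for illustration.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage


def connectivity_clusters(points, max_distance, method="single"):
    """Hypothetical helper: cut an agglomerative hierarchy at a distance threshold."""
    # Each row of Z records one merge of two clusters and the distance at which it happened
    Z = linkage(points, method=method, metric="euclidean")
    # Keep only the merges that happened at a distance <= max_distance
    return fcluster(Z, t=max_distance, criterion="distance")


points = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
print(connectivity_clusters(points, max_distance=2.0))   # two groups
print(connectivity_clusters(points, max_distance=20.0))  # one group
```

Raising the threshold merges more points, which is the hierarchy of clusters described above. The pairwise distance computation behind `linkage` is also part of why these models scale poorly.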

Distribution models are based on the probability that all data points in a cluster belong to the same distribution, such as the Normal (Gaussian) distribution. The drawback is that these models are prone to overfitting. A well-known example of this model is the expectation-maximization algorithm.
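As one illustration, scikit-learn's `GaussianMixture` fits a mixture of Gaussian distributions with the expectation-maximization algorithm. The sketch below assumes two synthetic, well-separated groups; the data and the choice of two components are assumptions made for the example.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(0)
# Two synthetic groups drawn from different Gaussian distributions
X = np.vstack([
    rng.normal(loc=0.0, scale=0.5, size=(50, 2)),
    rng.normal(loc=5.0, scale=0.5, size=(50, 2)),
])

# EM alternates soft assignments (E-step) with parameter updates (M-step)
gmm = GaussianMixture(n_components=2, covariance_type="full", random_state=0)
gmm.fit(X)

labels = gmm.predict(X)       # most likely component for each point
probs = gmm.predict_proba(X)  # membership probability for every component
print(gmm.means_)
print(probs[:3].round(3))
```

Unlike hard assignments, each point gets a probability of belonging to every cluster. Keeping `n_components` small or choosing a simpler `covariance_type` such as `"diag"` is one way to limit the overfitting risk mentioned above.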

Density models search the data space for regions with different densities of data points and isolate those regions. They then assign the data points within the same dense region to the same cluster. DBSCAN and OPTICS are the two most common examples of density models.
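DBSCAN is covered in detail below, so here is a short sketch of OPTICS using scikit-learn's `OPTICS` class on made-up data. Passing `cluster_method="dbscan"` extracts clusters at a fixed `eps`, which keeps the result easy to compare with DBSCAN; the default `"xi"` method instead detects clusters from changes in reachability.

```python
import numpy as np
from sklearn.cluster import OPTICS

rng = np.random.default_rng(1)
X = np.vstack([
    rng.normal(loc=(0.0, 0.0), scale=0.1, size=(30, 2)),  # dense region A
    rng.normal(loc=(5.0, 5.0), scale=0.1, size=(30, 2)),  # dense region B
    [[10.0, -10.0]],                                      # isolated point
])

# OPTICS orders points by reachability; clusters are then cut out at eps=0.5
optics = OPTICS(min_samples=5, cluster_method="dbscan", eps=0.5)
labels = optics.fit_predict(X)
print(labels)  # -1 marks points that lie in no dense region
```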

Centroid models are iterative clustering algorithms in which the similarity between data points is derived from their closeness to the cluster's centroid. The centroid (the center of the cluster) is placed so that the distance of the data points from the center is minimal. The solution to such clustering problems is usually approximated over multiple trials. An example of a centroid model is the K-means algorithm.
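With squared Euclidean distance, the point that minimizes the total distance to a cluster's members is their mean, which is why K-means uses the mean as the centroid. A small worked example on three made-up points:

```python
import numpy as np


def sum_sq_dist(points, center):
    """Total squared Euclidean distance from every point to one center."""
    return float(((points - center) ** 2).sum())


points = np.array([[1.0, 2.0], [2.0, 1.0], [3.0, 3.0]])
centroid = points.mean(axis=0)  # mean of the cluster: [2.0, 2.0]

print(centroid, sum_sq_dist(points, centroid))    # squared-distance total: 4.0
print(sum_sq_dist(points, np.array([2.5, 2.0])))  # 4.75, a worse center
```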

Common Clustering Algorithms

K-Means Clustering

K-Means is by far the most popular clustering algorithm, given that it is very easy to understand and apply to a wide range of data science and machine learning problems. Here's how you can apply the K-Means algorithm to your clustering problem.

The first step is to choose the number of clusters, represented by the variable 'k'. Next, each cluster is given an initial centroid, i.e., the center of that particular cluster; these starting centroids are often picked at random. It helps to place the initial centroids as far from each other as possible, which tends to give better and more consistent results. After all the centroids are defined, each data point is assigned to the cluster whose centroid is closest.

Once all data points are assigned to their clusters, the centroid of each cluster is recomputed as the mean of the points assigned to it. Then all data points are reassigned to clusters based on their distance from the newly defined centroids. This process is repeated until the centroids stop moving.
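The assign-and-update loop described above (often called Lloyd's algorithm) can be sketched in a few lines of NumPy. This is a teaching sketch, not the R `kmeans()` implementation used below; initializing from randomly chosen data points and the helper name `simple_kmeans` are assumptions.

```python
import numpy as np


def simple_kmeans(X, k, max_iter=100, seed=0):
    """Hypothetical helper: plain K-means (Lloyd's algorithm) on an (n, d) array."""
    rng = np.random.default_rng(seed)
    # Initial centroids: k distinct data points chosen at random
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(max_iter):
        # Assignment step: send every point to its nearest centroid
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: move each centroid to the mean of its points (keep it if empty)
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        # Stop once the centroids no longer move
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids


rng = np.random.default_rng(42)
X = np.vstack([rng.normal(0.0, 0.5, (40, 2)), rng.normal(5.0, 0.5, (40, 2))])
labels, centroids = simple_kmeans(X, k=2)
print(centroids.round(2))
```

Because a single random start can land in a poor solution, real implementations usually rerun from several starting points and keep the best result, which is what `nstart` does in the R code below.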

The K-Means algorithm works well for grouping new data: once the centroids are learned, a new point is simply assigned to the nearest one. Some practical applications of this algorithm are in sensor measurements, audio detection, and image segmentation.
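For comparison, scikit-learn's `KMeans` stores the learned centroids in `cluster_centers_`, and its `predict` method assigns new points to the nearest one. A brief sketch on the iris measurements; the two new flower measurements are made up for illustration.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import load_iris

X = load_iris().data  # 150 flowers x 4 numeric measurements

# n_init restarts from different initial centroids and keeps the best run
km = KMeans(n_clusters=3, n_init=10, random_state=0).fit(X)

# Hypothetical new measurements (sepal length, sepal width, petal length, petal width)
new_flowers = np.array([[5.0, 3.4, 1.5, 0.2], [6.7, 3.0, 5.5, 2.1]])
print(km.predict(new_flowers))  # index of the nearest learned centroid
```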

Let us take a look at the R implementation of K-Means Clustering.

K-Means clustering with 'R'

- Taking a look at the first few records of the dataset using the head() function

head(iris)

## Sepal.Length Sepal.Width Petal.Length Petal.Width Species

## 1 5.1 3.5 1.4 0.2 setosa

## 2 4.9 3.0 1.4 0.2 setosa

## 3 4.7 3.2 1.3 0.2 setosa

## 4 4.6 3.1 1.5 0.2 setosa

## 5 5.0 3.6 1.4 0.2 setosa

## 6 5.4 3.9 1.7 0.4 setosa

- Removing the categorical column 'Species', because k-means can be applied only to numerical columns

iris.new<- iris[,c(1,2,3,4)]

head(iris.new)

## Sepal.Length Sepal.Width Petal.Length Petal.Width

## 1 5.1 3.5 1.4 0.2

## 2 4.9 3.0 1.4 0.2

## 3 4.7 3.2 1.3 0.2

## 4 4.6 3.1 1.5 0.2

## 5 5.0 3.6 1.4 0.2

## 6 5.4 3.9 1.7 0.4

- Creating a scree plot to identify the ideal number of clusters

totWss=rep(0,5)

for(k in 1:5){

set.seed(100)

clust=kmeans(x=iris.new, centers=k, nstart=5)

totWss[k]=clust$tot.withinss

}

plot(c(1:5), totWss, type="b", xlab="Number of Clusters",

ylab="sum of 'Within groups sum of squares'")

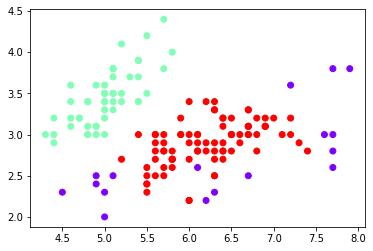

- Visualizing the clustering

library(cluster)

library(fpc)

## Warning: package 'fpc' was built under R version 3.6.2

clus <- kmeans(iris.new, centers=3)

plotcluster(iris.new, clus$cluster)

clusplot(iris.new, clus$cluster, color=TRUE, shade=TRUE)

- Adding the clusters to the original dataset

iris.new<-cbind(iris.new,cluster=clus$cluster)

head(iris.new)

## Sepal.Length Sepal.Width Petal.Length Petal.Width cluster

## 1 5.1 3.5 1.4 0.2 1

## 2 4.9 3.0 1.4 0.2 1

## 3 4.7 3.2 1.3 0.2 1

## 4 4.6 3.1 1.5 0.2 1

## 5 5.0 3.6 1.4 0.2 1

## 6 5.4 3.9 1.7 0.4 1

Density-Based Spatial Clustering of Applications with Noise (DBSCAN)

DBSCAN is the most common density-based clustering algorithm and is widely used. The algorithm picks an arbitrary starting point, and the neighborhood of this point is extracted using a distance epsilon 'ε'. All the points that are within the distance epsilon are the neighborhood points. If these points are sufficient in number (at least a chosen minimum count), then the clustering process starts, and we get our first cluster. If there are not enough neighboring data points, then the first point is labeled as noise.

For each point in this first cluster, the neighboring data points (the ones within epsilon distance of that point) are also added to the same cluster, and the cluster keeps growing from every added point that itself has enough neighbors. The process is repeated until there are no more data points that can be added.

Once we are done with the current cluster, an unvisited point is taken as the first data point of the next cluster, and all its neighboring points are grouped into this cluster. This process is repeated until all points are marked 'visited'.
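The steps above can be sketched directly in NumPy. The helper `simple_dbscan` is hypothetical and uses brute-force pairwise distances, so it only suits small datasets. Here `min_samples` plays the role of the "sufficient in number" threshold and counts the point itself, the same convention scikit-learn's `DBSCAN` uses.

```python
import numpy as np

NOISE = -1


def simple_dbscan(X, eps=0.5, min_samples=5):
    """Hypothetical helper: brute-force DBSCAN returning one label per point (-1 = noise)."""
    n = len(X)
    labels = np.full(n, NOISE)
    visited = np.zeros(n, dtype=bool)
    # Epsilon-neighbourhood of every point (each point counts as its own neighbour)
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbours = [np.flatnonzero(dists[i] <= eps) for i in range(n)]
    cluster_id = 0
    for i in range(n):
        if visited[i]:
            continue
        visited[i] = True
        if len(neighbours[i]) < min_samples:
            continue  # noise for now; a later cluster may still claim it as a border point
        labels[i] = cluster_id
        queue = list(neighbours[i])
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster_id  # reachable point joins the current cluster
            if visited[j]:
                continue
            visited[j] = True
            if len(neighbours[j]) >= min_samples:
                queue.extend(neighbours[j])  # only dense (core) points keep expanding
        cluster_id += 1
    return labels


rng = np.random.default_rng(3)
X = np.vstack([
    rng.normal((0.0, 0.0), 0.1, (30, 2)),
    rng.normal((5.0, 5.0), 0.1, (30, 2)),
    [[10.0, -10.0]],  # isolated point
])
labels = simple_dbscan(X, eps=0.5, min_samples=5)
print(labels)
```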

DBSCAN has some advantages compared to other clustering algorithms:

- It does not require a pre-set number of clusters

- It identifies outliers as noise

- It can easily find arbitrarily shaped and sized clusters

Implementing DBSCAN with Python

from sklearn import datasets

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from sklearn.cluster import DBSCAN

iris = datasets.load_iris()

x = iris.data[:, :4] # use all four features

DBSC = DBSCAN() # default settings: eps=0.5, min_samples=5

cluster_D = DBSC.fit_predict(x)

print(cluster_D)

plt.scatter(x[:,0],x[:,1],c=cluster_D,cmap='rainbow')

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 -1 0 0 0 0 0 0 0 0 1 1 1 1 1 1 1 -1 1 1 -1 1 1 1 1 1 1 1 -1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 -1 1 1 1 1 1 -1 1 1 1 1 -1 1 1 1 1 1 1 -1 -1 1 -1 -1 1 1 1 1 1 1 1 -1 -1 1 1 1 -1 1 1 1 1 1 1 1 1 -1 1 1 -1 -1 1 1 1 1 1 1 1 1 1 1 1 1 1 1]


Hierarchical Clustering

Hierarchical clustering is categorized into divisive and agglomerative clustering. Basically, these algorithms arrange clusters in a hierarchy based on the similarity between observations.

Divisive clustering, or the top-down approach, starts by grouping all the data points into a single cluster. It then divides this cluster into the two clusters that are least similar to each other. The process is repeated, and clusters are divided until there is no more scope for doing so.
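One common way to implement top-down splitting is bisecting K-means: repeatedly take the cluster with the largest within-cluster sum of squared errors and split it in two with 2-means. This is only one divisive strategy among several, used here as an assumption; `divisive_clustering` is a hypothetical helper. Recent scikit-learn versions (1.1 and later) also ship a `BisectingKMeans` estimator.

```python
import numpy as np
from sklearn.cluster import KMeans


def divisive_clustering(X, n_clusters, seed=0):
    """Hypothetical helper: top-down clustering by repeated two-way splits."""
    labels = np.zeros(len(X), dtype=int)  # start with everything in one cluster
    for new_label in range(1, n_clusters):
        # Choose the loosest cluster: the one with the largest sum of squared errors
        sse = [((X[labels == c] - X[labels == c].mean(axis=0)) ** 2).sum()
               for c in range(new_label)]
        target = int(np.argmax(sse))
        members = np.flatnonzero(labels == target)  # assumes at least two members
        # Split it in two; points in the second half get the new label
        halves = KMeans(n_clusters=2, n_init=10, random_state=seed).fit_predict(X[members])
        labels[members[halves == 1]] = new_label
    return labels


rng = np.random.default_rng(7)
centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
X = np.vstack([rng.normal(c, 0.3, (30, 2)) for c in centers])
print(divisive_clustering(X, n_clusters=3))
```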

Agglomerative clustering, or the bottom-up approach, treats each data point as its own cluster and repeatedly merges the most similar clusters. This essentially means bringing similar data together into a cluster.
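The bottom-up loop can be sketched naively as below. It measures cluster similarity with single linkage (the distance between the closest pair of points), an assumption made for simplicity; scikit-learn's `AgglomerativeClustering`, used in the example that follows, defaults to Ward linkage instead. The helper `naive_agglomerative` is hypothetical and roughly cubic in time, so it only suits tiny inputs.

```python
import numpy as np


def naive_agglomerative(X, n_clusters):
    """Hypothetical helper: bottom-up clustering with single linkage."""
    clusters = [[i] for i in range(len(X))]  # every point starts as its own cluster
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    while len(clusters) > n_clusters:
        best = None
        # Find the most similar pair: the smallest distance between any two members
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = dists[np.ix_(clusters[a], clusters[b])].min()
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a].extend(clusters[b])  # merge cluster b into cluster a
        del clusters[b]                  # b > a, so index a stays valid
    labels = np.empty(len(X), dtype=int)
    for label, members in enumerate(clusters):
        labels[members] = label
    return labels


X = np.array([[0.0, 0.0], [0.5, 0.0], [0.0, 0.5],
              [5.0, 5.0], [5.5, 5.0], [5.0, 5.5]])
print(naive_agglomerative(X, n_clusters=2))  # [0 0 0 1 1 1]
```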

Of the two approaches, divisive clustering is often considered more accurate. But then again, which approach to apply to a particular clustering problem in Machine Learning depends on the type of problem and the nature of the available dataset.

Implementing Hierarchical Clustering with Python

#Import libraries

from sklearn import datasets

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from sklearn.cluster import AgglomerativeClustering

#Import the dataset

iris = datasets.load_iris()

x = iris.data[:, :4] # use all four features

hier_clustering = AgglomerativeClustering(n_clusters=3) # default linkage is 'ward'

clusters_h = hier_clustering.fit_predict(x)

print(clusters_h)

plt.scatter(x[:,0],x[:,1],c=clusters_h,cmap='rainbow')

[1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 2 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 2 0 2 2 2 2 0 2 2 2 2 2 2 0 0 2 2 2 2 0 2 0 2 0 2 2 0 0 2 2 2 2 2 0 0 2 2 2 0 2 2 2 0 2 2 2 0 2 2 0]


Applications of Clustering

Clustering has diverse applications across industries and is an effective solution to a plethora of machine learning problems.

- It is used in market research to characterize and discover relevant customer bases and audiences.

- Classifying different species of plants and animals with the help of image recognition techniques

- It helps in deriving plant and animal taxonomies and classifies genes with similar functionalities to gain insight into structures inherent to populations.

- It is applicable in city planning to identify groups of houses and other facilities according to their type, value, and geographic coordinates.

- It also identifies areas of similar land use and classifies them as agricultural, commercial, industrial, residential, and so on.

- Classifies documents on the web for information discovery

- Applies well as a data mining function to gain insights into data distribution and observe characteristics of different clusters

- Identifies credit and insurance frauds when used in outlier detection applications

- Helpful in identifying high-risk zones by studying earthquake-affected areas (applicable for other natural hazards too)

- A simple application could be in libraries, to cluster books based on topics, genre, and other characteristics

- An important application is in identifying cancer cells by classifying them against healthy cells

- Search engines provide search results based on the nearest similar object to a search query using clustering techniques

- Wireless networks use various clustering algorithms to reduce energy consumption and optimise data transmission

- Hashtags on social media also use clustering techniques to classify all posts with the same hashtag under one stream

In this article, we discussed different clustering algorithms in Machine Learning. While there is much more to unsupervised learning and machine learning as a whole, this article specifically draws attention to clustering algorithms in Machine Learning and their applications. If you want to learn more about machine learning concepts, head to our blog. Also, if you wish to pursue a career in Machine Learning, then upskill with Great Learning's PG program in Machine Learning.