VentureBeat presents AI Unleashed, an exclusive executive event for enterprise data leaders. Network and learn with industry peers.

Mistral AI, the six-month-old Paris-based startup that made headlines with its distinctive Word Art logo and a record-setting $118 million seed round (reportedly the largest seed round in European history), today released its first large language AI model, Mistral 7B.

The 7.3-billion-parameter model outperforms bigger models, including Meta’s Llama 2 13B (one of the smaller of Meta’s newer models), and is said to be the most powerful language model for its size (to date).

It can handle English-language tasks while also delivering natural coding capabilities, making it an option for a range of enterprise-centric use cases.

Mistral said it is open-sourcing the new model under the Apache 2.0 license, allowing anyone to fine-tune and use it anywhere (from local machines to the cloud) without restriction, including for enterprise use cases.

Meet Mistral 7B

Founded earlier this year by alums from Google’s DeepMind and Meta, Mistral AI is on a mission to “make AI useful” for enterprises by tapping only publicly available data and data contributed by customers.

Now, with the release of Mistral 7B, the company is starting this journey, providing teams with a small model capable of low-latency text summarisation, classification, text completion and code completion.

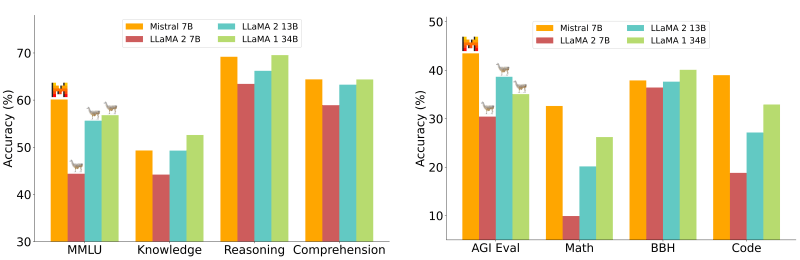

Although the model has only just been announced, Mistral AI claims it already beats its open-source competition. In benchmarks covering a range of tasks, the model outperformed Llama 2 7B and 13B quite easily.

For instance, in the Massive Multitask Language Understanding (MMLU) test, which covers 57 subjects across mathematics, US history, computer science, law and more, the new model delivered an accuracy of 60.1%, while Llama 2 7B and 13B delivered a little over 44% and 55%, respectively.

Similarly, in tests covering commonsense reasoning and reading comprehension, Mistral 7B outperformed both Llama models, with accuracies of 69% and 64%, respectively. The only area where Llama 2 13B matched Mistral 7B was the world knowledge test, which Mistral says may be due to the model’s limited parameter count, which restricts the amount of knowledge it can compress.

“For all metrics, all models were re-evaluated with our evaluation pipeline for accurate comparison. Mistral 7B significantly outperforms Llama 2 13B on all metrics, and is on par with Llama 34B (on many benchmarks),” the company wrote in a blog post.

As for coding tasks, while Mistral calls the new model “vastly superior,” benchmark results show it still does not outperform the fine-tuned CodeLlama 7B. The Meta model delivered accuracies of 31.1% and 52.5% on the 0-shot HumanEval and 3-shot MBPP (hand-verified subset) tests, while Mistral 7B sat close behind at 30.5% and 47.5%, respectively.

High-performing small model could benefit businesses

While this is just the start, Mistral’s demonstration of a small model delivering high performance across a range of tasks could mean major benefits for businesses.

For example, on MMLU, Mistral 7B delivers the performance that a Llama 2 model more than three times its size (23 billion parameters) would be expected to reach. That could directly save memory and cut costs without giving up benchmark performance.
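To put the memory argument in rough numbers, the sketch below estimates the memory needed just to hold each model's weights. It assumes 16-bit weights (2 bytes per parameter) and ignores the KV cache, activations and runtime overhead, so it is a back-of-the-envelope figure rather than a deployment requirement. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(n_params, bytes_per_param=2.0):
    """Rough memory for model weights only, in GB (1 GB = 1e9 bytes).

    Assumption: 16-bit (2-byte) weights by default. The KV cache,
    activations and framework overhead are deliberately ignored.
    """
    return n_params * bytes_per_param / 1e9

mistral_7b = weight_memory_gb(7.3e9)       # Mistral 7B's 7.3B parameters
llama_equivalent = weight_memory_gb(23e9)  # the ~23B-parameter Llama 2 equivalent

print(f"{mistral_7b:.1f} GB vs {llama_equivalent:.1f} GB "
      f"({llama_equivalent / mistral_7b:.2f}x)")
```

Passing `bytes_per_param=1.0` or `0.5` gives the corresponding estimate for 8-bit or 4-bit quantized weights; the ratio between the two models stays the same either way.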

The company says it achieves faster inference using grouped-query attention (GQA) and handles longer sequences at a lower cost using sliding window attention (SWA).
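The article only names grouped-query attention, so the following is a minimal pure-Python sketch of the general technique, not Mistral's reference implementation. It assumes the usual formulation: the query heads are split into groups, and each group shares a single key/value head, so fewer K/V tensors have to be cached during generation. The function names and the tiny head counts in the usage example are illustrative only.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def grouped_query_attention(q_heads, k_heads, v_heads):
    """Causal grouped-query attention over plain Python lists.

    q_heads: n_q heads, each a list of seq_len query vectors.
    k_heads, v_heads: n_kv heads (n_q must be a multiple of n_kv),
    each a list of seq_len key / value vectors.
    Query head h reads key/value head h // group_size, so every group of
    group_size query heads shares one K/V head.
    """
    n_q, n_kv = len(q_heads), len(k_heads)
    if n_q % n_kv != 0:
        raise ValueError("number of query heads must be a multiple of KV heads")
    group_size = n_q // n_kv
    outputs = []
    for h, queries in enumerate(q_heads):
        keys = k_heads[h // group_size]
        values = v_heads[h // group_size]
        scale = 1.0 / math.sqrt(len(queries[0]))
        dim_v = len(values[0])
        head_out = []
        for i, q in enumerate(queries):
            # Causal: position i attends to positions 0..i only.
            weights = softmax([dot(q, keys[j]) * scale for j in range(i + 1)])
            head_out.append(
                [sum(w * values[j][c] for j, w in enumerate(weights)) for c in range(dim_v)]
            )
        outputs.append(head_out)
    return outputs

# Usage: 4 query heads share 2 KV heads (group size 2); seq_len 3, head dim 2.
q = [[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]] for _ in range(4)]
k = [[[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]] for _ in range(2)]
v = [[[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]],
     [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]]
out = grouped_query_attention(q, k, v)
```

With `n_q` query heads and `n_kv` shared K/V heads, the KV cache shrinks by a factor of `n_q / n_kv` compared with standard multi-head attention. That reduction is where the inference-speed benefit of GQA is generally understood to come from.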

“Mistral 7B uses a sliding window attention (SWA) mechanism, in which each layer attends to the previous 4,096 hidden states. The main improvement, and reason for which this was initially investigated, is a linear compute cost of O(sliding_window × seq_len). In practice, changes made to FlashAttention and xFormers yield a 2x speed improvement for a sequence length of 16k with a window of 4k,” the company wrote.
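To show why a fixed window makes the cost linear in sequence length, the sketch below builds a sliding-window attention mask and counts how many query/key scores one layer computes. It assumes the window covers the `window` most recent positions including the current one (i − window < j ≤ i); Mistral's exact boundary convention may differ by one. The pair count is only a proxy for compute. It is not a model of the FlashAttention/xFormers kernels behind the quoted 2x speedup.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when position i may attend to position j.

    Assumption: causal and windowed, i - window < j <= i, so each
    position sees at most `window` positions, itself included.
    """
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]

def attended_pairs(seq_len, window=None):
    """Count (query, key) scores computed per layer as a rough cost proxy."""
    if window is None:
        # Full causal attention: position i scores i + 1 keys, quadratic in total.
        return seq_len * (seq_len + 1) // 2
    # Sliding window: each position scores at most `window` keys, linear in total.
    return sum(min(i + 1, window) for i in range(seq_len))

full = attended_pairs(16_384)
swa = attended_pairs(16_384, 4_096)
print(full, swa, round(full / swa, 2))
```

For a 16k sequence with a 4k window, this count gives roughly 2.3x fewer scores than full causal attention. As sequences grow longer with the window fixed, the saving keeps increasing.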

The company plans to build on this work by releasing a bigger model capable of better reasoning and of working in multiple languages, expected to debut sometime in 2024.

For now, Mistral 7B can be deployed anywhere (from local machines to the AWS, GCP or Azure clouds) using the company’s reference implementation, the vLLM inference server and SkyPilot.