Within the realm of computer vision, face detection stands as a fundamental and fascinating task. Detecting and locating faces within images or video streams forms the cornerstone of numerous applications, from facial recognition systems to digital image processing. Among the many algorithms developed to tackle this challenge, the Viola-Jones algorithm has emerged as a groundbreaking approach renowned for its speed and accuracy.

The Viola-Jones algorithm, pioneered by Paul Viola and Michael Jones in 2001, revolutionized the field of face detection. Its efficient and robust methodology opened doors to a wide range of applications that rely on accurately identifying and analyzing human faces. By harnessing the power of Haar-like features, integral images, machine learning, and cascades of classifiers, the Viola-Jones algorithm showcases the synergy between computer science and image processing.

In this blog, we will delve into the intricacies of the Viola-Jones algorithm, unraveling its underlying mechanisms and exploring its applications. From its training process to its implementation in real-world scenarios, we will unlock the power of face detection and witness firsthand the transformative capabilities of the Viola-Jones algorithm.

- What is face detection?

- What is the Viola-Jones algorithm?

- Using a Viola-Jones classifier to detect faces in a live webcam feed

What is face detection?

Object detection is one of the computer technologies connected to image processing and computer vision. It is concerned with detecting instances of an object such as human faces, buildings, trees, cars, and so on. The primary aim of face detection algorithms is to determine whether there is any face in an image or not.

In recent years, we have seen significant advancement of technologies that can detect and recognise faces. Our mobile cameras are often equipped with such technology, where we can see a box around the faces. Although there are quite advanced face detection algorithms, especially with the introduction of deep learning, the introduction of the Viola-Jones algorithm in 2001 was a breakthrough in this field. Now let us explore the Viola-Jones algorithm in detail.

What is the Viola-Jones algorithm?

The Viola-Jones algorithm is named after two computer vision researchers who proposed the method in 2001, Paul Viola and Michael Jones, in their paper “Rapid Object Detection using a Boosted Cascade of Simple Features”. Despite being an outdated framework, Viola-Jones is quite powerful, and its application has proven to be exceptionally notable in real-time face detection. This algorithm is painfully slow to train but can detect faces in real-time with impressive speed.

Given an image (this algorithm works on grayscale images), the algorithm looks at many smaller subregions and tries to find a face by looking for specific features in each subregion. It needs to check many different positions and scales because an image can contain many faces of various sizes. Viola and Jones used Haar-like features to detect faces in this algorithm.

The Viola-Jones algorithm has four main steps, which we shall discuss in the sections to follow:

- Selecting Haar-like features

- Creating an integral image

- Running AdaBoost training

- Creating classifier cascades

What are Haar-Like Features?

In the early 20th century, a Hungarian mathematician, Alfred Haar, introduced the concept of Haar wavelets, which are a sequence of rescaled “square-shaped” functions that together form a wavelet family or basis. Viola and Jones adapted the idea of using Haar wavelets and developed the so-called Haar-like features.

Haar-like features are digital image features used in object recognition. All human faces share some universal properties: for example, the eye region is darker than its neighbouring pixels, and the nose region is brighter than the eye region.

A simple way to find out which region is lighter or darker is to sum up the pixel values of both regions and compare them. The sum of pixel values in the darker region will be smaller than the sum of pixels in the lighter region. If one side is lighter than the other, it may be the edge of an eyebrow; sometimes the middle portion is shinier than the surrounding boxes, which can be interpreted as a nose. This can be accomplished using Haar-like features, and with their help we can interpret the different parts of a face.

There are three types of Haar-like features that Viola and Jones identified in their research:

- Edge features

- Line features

- Four-sided features

Edge features and line features are useful for detecting edges and lines respectively. The four-sided features are used for finding diagonal features.

The value of the feature is calculated as a single number: the sum of pixel values in the black area minus the sum of pixel values in the white area. The value is zero for a plain surface in which all the pixels have the same value, and thus provides no useful information.

Since our faces have complex shapes with darker and brighter spots, a Haar-like feature gives you a value that is large in magnitude when the areas under the black and white rectangles are very different. Using this value, we get a piece of valid information out of the image.

To be useful, then, a Haar-like feature needs to respond strongly to the image regions we care about. There are known features that perform very well at detecting human faces:

For example, when we apply this specific Haar-like feature to the bridge of the nose, we get a good response. Similarly, we combine many of these features to understand whether an image region contains a human face.
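As a rough illustration of the calculation described above, here is a minimal sketch of a two-rectangle edge feature in Python with NumPy. The function name `edge_feature_value` and the toy arrays are made up for this example; the convention (black area on top, white area below) is just one possible orientation of an edge feature.

```python
import numpy as np

def edge_feature_value(window, x, y, w, h):
    """Two-rectangle (edge) feature: black top half minus white bottom half.

    (x, y) is the top-left corner, w the width, and h the total height
    (assumed even) of the feature inside a grayscale window.
    """
    half = h // 2
    black = window[y:y + half, x:x + w].sum()
    white = window[y + half:y + h, x:x + w].sum()
    return int(black) - int(white)

# Toy 4x4 "image": a dark band (low values) above a bright band (high values).
window = np.array([
    [10, 10, 10, 10],
    [10, 10, 10, 10],
    [200, 200, 200, 200],
    [200, 200, 200, 200],
], dtype=np.uint8)

# A plain surface where every pixel has the same value.
flat = np.full((4, 4), 128, dtype=np.uint8)

contrast_value = edge_feature_value(window, 0, 0, 4, 4)
flat_value = edge_feature_value(flat, 0, 0, 4, 4)
print(contrast_value, flat_value)  # -1520 0
```

The strongly contrasting window produces a value that is large in magnitude, while the flat window produces zero, matching the behaviour described in the text.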

What are Integral Images?

In the previous section, we saw that to calculate a value for each feature, we need to perform computations on all the pixels inside that particular feature. In reality, these calculations can be very intensive, since the number of pixels is much greater when we are dealing with a large feature.

The integral image plays its part by allowing us to perform these intensive calculations quickly, so we can check whether a feature, or a number of features, fit the criteria.

An integral image (also known as a summed-area table) is the name of both a data structure and an algorithm used to obtain this data structure. It is used as a quick and efficient way to calculate the sum of pixel values in an image or in a rectangular part of an image.
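To make the idea concrete, here is a small sketch of a summed-area table built with NumPy. The helper names `integral_image` and `rect_sum` are illustrative, and padding the table with a leading row and column of zeros is one common convention, not necessarily the one used in the original paper.

```python
import numpy as np

def integral_image(img):
    """Summed-area table with an extra zero row and column on the top/left."""
    ii = np.zeros((img.shape[0] + 1, img.shape[1] + 1), dtype=np.int64)
    ii[1:, 1:] = img.astype(np.int64).cumsum(axis=0).cumsum(axis=1)
    return ii

def rect_sum(ii, x, y, w, h):
    """Sum of pixels in the rectangle with top-left (x, y), width w, height h.

    Only four table lookups are needed, regardless of the rectangle size.
    """
    return int(ii[y + h, x + w] - ii[y, x + w] - ii[y + h, x] + ii[y, x])

# 4x4 toy image holding the values 1..16.
img = np.arange(1, 17, dtype=np.uint8).reshape(4, 4)
ii = integral_image(img)

fast = rect_sum(ii, 1, 1, 2, 2)    # four lookups
slow = int(img[1:3, 1:3].sum())    # direct summation over the pixels
print(fast, slow)  # 34 34
```

Once the table is built, every rectangle in every Haar-like feature costs the same four lookups, which is why large features are no more expensive than small ones.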

How is AdaBoost used in the Viola-Jones algorithm?

Next, we use a machine learning algorithm known as AdaBoost. But why do we even need an algorithm?

The number of features present in the 24×24 detector window is nearly 160,000, but only a few of these features are important for identifying a face. So we use the AdaBoost algorithm to identify the best features among the 160,000.
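The figure of roughly 160,000 can be reproduced with a short counting sketch. It assumes five base shapes (edge features in two orientations, line features in two orientations, and one four-rectangle feature), each placed at every position and every integer scale that fits in the window; this is a common way of arriving at the number, not something spelled out in this article.

```python
def count_haar_features(window=24):
    # Base shapes as (width, height) in cells: two edge features,
    # two line features, and one four-rectangle feature (assumed set).
    base_shapes = [(2, 1), (1, 2), (3, 1), (1, 3), (2, 2)]
    total = 0
    for base_w, base_h in base_shapes:
        # Scale each shape in whole multiples of its base size.
        for w in range(base_w, window + 1, base_w):
            for h in range(base_h, window + 1, base_h):
                # Number of positions where a w x h feature fits in the window.
                total += (window - w + 1) * (window - h + 1)
    return total

feature_count = count_haar_features(24)
print(feature_count)  # 162336
```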

In the Viola-Jones algorithm, each Haar-like feature represents a weak learner. To decide the type and size of a feature that goes into the final classifier, AdaBoost checks the performance of all classifiers that you supply to it.

To calculate the performance of a classifier, you evaluate it on all subregions of all the images used for training. Some subregions will produce a strong response in the classifier. These will be classified as positives, meaning the classifier thinks they contain a human face. Subregions that don’t produce a strong response don’t contain a human face, in the classifier’s opinion. They will be classified as negatives.

The classifiers that performed well are given higher importance, or weight. The final result is a strong classifier, also called a boosted classifier, that contains the best-performing weak classifiers.

So when we train AdaBoost to identify important features, we feed it information in the form of training data and let it learn from that data to make predictions. Ultimately, the algorithm sets a minimum threshold to determine whether something can be classified as a useful feature or not.
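The selection process can be sketched with a tiny AdaBoost loop over threshold classifiers ("decision stumps"). This is the common textbook formulation with labels of +1 and -1, not necessarily the exact variant used by Viola and Jones; the function names and the toy feature values are invented for illustration, and each column of `values` stands in for one precomputed Haar-like feature.

```python
import numpy as np

def best_stump(values, labels, weights):
    """Find the (feature, threshold, polarity) with the lowest weighted error."""
    best = (np.inf, 0, 0.0, 1)  # (error, feature index, threshold, polarity)
    for j in range(values.shape[1]):
        for threshold in np.unique(values[:, j]):
            for polarity in (1, -1):
                # Predict +1 (face) when polarity * value >= polarity * threshold.
                pred = np.where(polarity * values[:, j] >= polarity * threshold, 1, -1)
                error = weights[pred != labels].sum()
                if error < best[0]:
                    best = (error, j, threshold, polarity)
    return best

def adaboost(values, labels, rounds):
    """Return a list of (alpha, feature, threshold, polarity) weak classifiers."""
    n = len(labels)
    weights = np.full(n, 1.0 / n)
    strong = []
    for _ in range(rounds):
        error, j, threshold, polarity = best_stump(values, labels, weights)
        error = min(max(error, 1e-10), 1 - 1e-10)  # avoid division by zero
        alpha = 0.5 * np.log((1 - error) / error)  # weight of this weak classifier
        pred = np.where(polarity * values[:, j] >= polarity * threshold, 1, -1)
        # Raise the weight of misclassified samples, lower the rest.
        weights *= np.exp(-alpha * labels * pred)
        weights /= weights.sum()
        strong.append((alpha, j, threshold, polarity))
    return strong

def predict(strong, values):
    """Weighted vote of all selected weak classifiers."""
    score = np.zeros(values.shape[0])
    for alpha, j, threshold, polarity in strong:
        score += alpha * np.where(polarity * values[:, j] >= polarity * threshold, 1, -1)
    return np.where(score >= 0, 1, -1)

# Toy data: rows are subregions, columns are precomputed feature values.
# Column 1 separates the classes; column 0 is uninformative.
values = np.array([[5.0, 1.0], [3.0, 2.0], [4.0, 8.0],
                   [5.0, 9.0], [3.0, 7.0], [4.0, 0.0]])
labels = np.array([-1, -1, 1, 1, 1, -1])

strong = adaboost(values, labels, rounds=3)
selected_first = strong[0][1]
predictions = predict(strong, values)
print(selected_first, predictions)
```

On this toy data the informative column is picked first, which mirrors how AdaBoost narrows a huge feature pool down to the few features that actually help.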

What are Cascading Classifiers?

AdaBoost may finally select the best features, say around 2,500, but it is still time-consuming to calculate all of these features for each region. We have a 24×24 window which we slide over the input image, and we need to find out whether any of those regions contains a face. The job of the cascade is to quickly discard non-faces and avoid wasting precious time and computation, thus achieving the speed necessary for real-time face detection.

We set up a cascaded system in which we divide the process of identifying a face into multiple stages. In the first stage, we have a classifier made up of our best features. In other words, in the first stage the subregion passes through the best features, such as the feature which identifies the nose bridge or the one which identifies the eyes. In the next stages, we have all the remaining features.

When an image subregion enters the cascade, it is evaluated by the first stage. If that stage evaluates the subregion as positive, meaning that it thinks it is a face, the output of the stage is "maybe".

When a subregion gets a "maybe", it is sent to the next stage of the cascade, and the process continues in this way until we reach the last stage.

If all classifiers approve the subregion, it is finally classified as a human face and is presented to the user as a detection.

Now, how does this help us increase our speed? If the first stage gives a negative evaluation, the subregion is immediately discarded as not containing a human face. If it passes the first stage but fails the second stage, it is discarded as well. In short, the subregion can be discarded at any stage of the cascade.
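The early-rejection behaviour can be sketched in a few lines of plain Python. The stages here are hypothetical stand-ins that compare a made-up "score" with a threshold; in a real cascade each stage would be a boosted classifier over Haar-like features.

```python
def run_cascade(stages, subregion):
    """Return True only if every stage accepts the subregion.

    Each stage is a function returning True ("maybe a face") or False.
    Evaluation stops at the first rejection, so later stages are never run.
    """
    for stage in stages:
        if not stage(subregion):
            return False
    return True

calls = []  # records which stages were actually evaluated

def make_stage(name, min_score):
    # Hypothetical stage: accepts a subregion whose "score" reaches min_score.
    def stage(subregion):
        calls.append(name)
        return subregion["score"] >= min_score
    return stage

stages = [make_stage("stage1", 0.2),
          make_stage("stage2", 0.5),
          make_stage("stage3", 0.8)]

rejected = run_cascade(stages, {"score": 0.1})
calls_for_rejected = list(calls)

calls.clear()
accepted = run_cascade(stages, {"score": 0.9})
calls_for_accepted = list(calls)

print(rejected, calls_for_rejected)  # False ['stage1']
print(accepted, calls_for_accepted)  # True ['stage1', 'stage2', 'stage3']
```

Because most subregions of a typical image are not faces, most of them exit after the first cheap stage, and only promising candidates pay for the full set of features.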

Using a Viola-Jones Classifier to detect faces in a live webcam feed

In this section, we are going to implement the Viola-Jones algorithm using OpenCV and detect faces in our webcam feed in real-time. We will also use the same algorithm to detect the eyes of a person. This is quite simple, and all you need is to install OpenCV and Python on your PC. You can refer to this article to learn about OpenCV and how to install it.

In OpenCV, we have several trained Haar cascade models which are saved as XML files. Instead of creating and training a model from scratch, we use these files. We are going to use the “haarcascade_frontalface_alt2.xml” file in this project. Now let us start coding.

The first step is to find the paths to the “haarcascade_frontalface_alt2.xml” and “haarcascade_eye_tree_eyeglasses.xml” files. We do this by using the os module of the Python language.

import cv2
import os

# The cascade XML files ship inside the "data" folder of the cv2 package.
cascPathface = os.path.dirname(
    cv2.__file__) + "/data/haarcascade_frontalface_alt2.xml"
cascPatheyes = os.path.dirname(
    cv2.__file__) + "/data/haarcascade_eye_tree_eyeglasses.xml"

The next step is to load our classifiers. We are using two classifiers, one for detecting the face and the other for detecting eyes. The path to each of the above XML files goes as an argument to the CascadeClassifier() method of OpenCV.

faceCascade = cv2.CascadeClassifier(cascPathface)
eyeCascade = cv2.CascadeClassifier(cascPatheyes)

After loading the classifiers, let us open the webcam using this simple OpenCV one-liner:

video_capture = cv2.VideoCapture(0)

Next, we need to get the frames from the webcam stream. We do this using the read() function, inside an infinite loop that keeps fetching frames until we want to close the stream.

while True:
    # Capture frame-by-frame
    ret, frame = video_capture.read()

The read() function returns:

- The actual video frame read (one frame on each loop)

- A return code

The return code tells us if we have run out of frames, which will happen if we are reading from a file. This doesn’t matter when reading from the webcam, since we can record forever, so we will ignore it.

For this specific classifier to work, we need to convert the frame into greyscale.

    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

The faceCascade object has a method detectMultiScale(), which receives a frame (image) as an argument and runs the classifier cascade over the image. The term MultiScale indicates that the algorithm looks at subregions of the image at multiple scales, to detect faces of varying sizes.

    faces = faceCascade.detectMultiScale(gray,
                                         scaleFactor=1.1,
                                         minNeighbors=5,
                                         minSize=(60, 60),
                                         flags=cv2.CASCADE_SCALE_IMAGE)

Let us go through the arguments of this function:

- scaleFactor – Parameter specifying how much the image size is reduced at each image scale. By rescaling the input image, you can resize a larger face to a smaller one, making it detectable by the algorithm. 1.05 is a good possible value for this: it means you use a small step for resizing, i.e. reduce the size by 5% at each step, which increases the chance that a size matching the model is found. The code above uses 1.1, a slightly coarser and faster step.

- minNeighbors – Parameter specifying how many neighbours each candidate rectangle should have in order to be retained. This parameter affects the quality of the detected faces. A higher value results in fewer detections but with higher quality. 3~6 is a good value for it.

- flags – Mode of operation.

- minSize – Minimum possible object size. Objects smaller than this are ignored.
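To get a feel for how scaleFactor and minSize interact, here is a simplified model of a multi-scale scan in plain Python. It is only a sketch under stated assumptions: the function `window_sizes` is invented for this example, the 24-pixel base window is taken from the discussion earlier in this article (the actual model file may use a different size), and OpenCV's internal scale selection may differ in its details.

```python
def window_sizes(image_size, scale_factor, base_window=24, min_size=0):
    """List the effective window sizes searched in a simplified multi-scale scan.

    The window starts at base_window pixels and grows by scale_factor at each
    step until it no longer fits in the image; sizes below min_size are skipped.
    """
    sizes = []
    size = float(base_window)
    while size <= image_size:
        if size >= min_size:
            sizes.append(round(size))
        size *= scale_factor
    return sizes

# Assumed 480-pixel-high frame and the minSize of 60 used in this article.
coarse = window_sizes(480, 1.1, min_size=60)
fine = window_sizes(480, 1.05, min_size=60)
print(len(coarse), len(fine))
```

A smaller scaleFactor yields more intermediate sizes, so a face is more likely to match one of them closely, at the cost of more work per frame.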

The variable faces now contains all the detections for the target image. Detections are saved as pixel coordinates. Each detection is defined by its top-left corner coordinates and the width and height of the rectangle that encompasses the detected face.

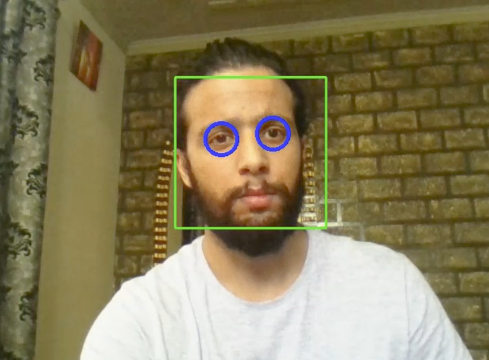

To show the detected face, we will draw a rectangle over it. OpenCV’s rectangle() draws rectangles over images, and it needs to know the pixel coordinates of the top-left and bottom-right corners. The coordinates indicate the row and column of pixels in the image. We can easily get these coordinates from the variable faces.
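The relationship between a detection and the coordinates used for drawing and cropping can be sketched with NumPy alone. The helper `to_corners`, the blank frame, and the detection values are all made up for illustration.

```python
import numpy as np

def to_corners(detection):
    """Convert (x, y, w, h) into top-left and bottom-right (x, y) points."""
    x, y, w, h = detection
    return (x, y), (x + w, y + h)

# Hypothetical 480x640 BGR frame and one made-up detection.
frame = np.zeros((480, 640, 3), dtype=np.uint8)
detection = (100, 50, 80, 120)  # x, y, width, height

top_left, bottom_right = to_corners(detection)

# NumPy indexes rows (y) first, then columns (x).
x, y, w, h = detection
face_roi = frame[y:y + h, x:x + w]
print(top_left, bottom_right, face_roi.shape)  # (100, 50) (180, 170) (120, 80, 3)
```

Note the different orderings: drawing functions take points as (x, y), while array slicing takes the row range (y) before the column range (x).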

Also, since we now know the location of the face, we define a new area which contains just the face of a person and name it faceROI. In faceROI we detect the eyes and encircle them using the circle function.

    for (x, y, w, h) in faces:
        # Green box around the detected face
        cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        # Region of interest containing only the face
        faceROI = frame[y:y+h, x:x+w]
        eyes = eyeCascade.detectMultiScale(faceROI)
        for (x2, y2, w2, h2) in eyes:
            # Eye coordinates are relative to faceROI, so offset them by (x, y)
            eye_center = (x + x2 + w2 // 2, y + y2 + h2 // 2)
            radius = int(round((w2 + h2) * 0.25))
            frame = cv2.circle(frame, eye_center, radius, (255, 0, 0), 4)

The function rectangle() accepts the following arguments:

- The original image

- The coordinates of the top-left point of the detection

- The coordinates of the bottom-right point of the detection

- The colour of the rectangle (a tuple that defines the amounts of blue, green, and red, each 0-255, since OpenCV uses BGR order). In our case, we set it to green by keeping the green component at 255 and the rest at zero.

- The thickness of the rectangle lines

Next, we just display the resulting frame and also set up a way to exit this infinite loop and close the video feed. By pressing the ‘q’ key, we can exit the script:

    cv2.imshow('Video', frame)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

The next two lines, placed after the loop, just clean up and release the capture.

video_capture.release()
cv2.destroyAllWindows()

Here are the full code and output.

import cv2
import os

# Paths to the pretrained cascade files shipped with the cv2 package
cascPathface = os.path.dirname(
    cv2.__file__) + "/data/haarcascade_frontalface_alt2.xml"
cascPatheyes = os.path.dirname(
    cv2.__file__) + "/data/haarcascade_eye_tree_eyeglasses.xml"

faceCascade = cv2.CascadeClassifier(cascPathface)
eyeCascade = cv2.CascadeClassifier(cascPatheyes)

video_capture = cv2.VideoCapture(0)
while True:
    # Capture frame-by-frame
    ret, frame = video_capture.read()
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
    faces = faceCascade.detectMultiScale(gray,
                                         scaleFactor=1.1,
                                         minNeighbors=5,
                                         minSize=(60, 60),
                                         flags=cv2.CASCADE_SCALE_IMAGE)
    for (x, y, w, h) in faces:
        # Green box around the detected face
        cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        faceROI = frame[y:y+h, x:x+w]
        eyes = eyeCascade.detectMultiScale(faceROI)
        for (x2, y2, w2, h2) in eyes:
            # Eye coordinates are relative to faceROI, so offset them by (x, y)
            eye_center = (x + x2 + w2 // 2, y + y2 + h2 // 2)
            radius = int(round((w2 + h2) * 0.25))
            frame = cv2.circle(frame, eye_center, radius, (255, 0, 0), 4)

    # Display the resulting frame
    cv2.imshow('Video', frame)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

# Release the webcam and close the display window
video_capture.release()
cv2.destroyAllWindows()

Output:

This brings us to the end of this article, where we learned about the Viola-Jones algorithm and its implementation in OpenCV.