Large Action Models (LAMs) are deep learning models that aim to understand instructions and then execute complex tasks and actions accordingly. LAMs also combine language understanding with reasoning and software agents.

Although they are still under research and development, these models could become a transformative force in Artificial Intelligence (AI). LAMs represent a significant leap beyond text generation and understanding. They have the potential to change how we work and to automate tasks across many industries.

We’ll explore how Large Action Models work and what they can do in real-world applications, and we’ll look at some open-source models. Get ready for a journey into Large Action Models, where AI is not just talking but taking action.

What Are Large Action Models and How Do They Work?

Large Action Models (LAMs) are AI software designed to take action using a hierarchical approach, in which tasks are broken down into smaller subtasks. Agents then carry out these actions based on the user’s instructions.
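The hierarchical breakdown can be pictured as a tree in which composite tasks contain subtasks, and only the leaves are directly executable. The sketch below is illustrative only: the `Task` class and the dinner-booking plan are hypothetical and do not come from any particular LAM. It flattens such a tree into the ordered list of steps an agent would run.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """A task that is either directly executable (no subtasks) or composite."""
    name: str
    subtasks: list["Task"] = field(default_factory=list)


def flatten(task: Task) -> list[str]:
    """Depth-first walk that returns the executable (leaf) steps in order."""
    if not task.subtasks:
        return [task.name]
    steps: list[str] = []
    for sub in task.subtasks:
        steps.extend(flatten(sub))
    return steps


# Hypothetical plan for the instruction "Book a dinner for two on Friday".
plan = Task("book dinner", [
    Task("find restaurant", [Task("search restaurants"), Task("filter by rating")]),
    Task("reserve table", [Task("check availability"), Task("submit booking")]),
    Task("notify user"),
])

print(flatten(plan))
# ['search restaurants', 'filter by rating', 'check availability', 'submit booking', 'notify user']
```

In a real system the tree would be generated by the model's planner rather than written by hand. The flattened steps are what the agent executes one by one.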

Unlike large language models, a Large Action Model combines language understanding with logic and reasoning to execute a variety of tasks. This approach can often learn from feedback and interactions, although this should not be confused with reinforcement learning.

Neuro-symbolic programming has been an important technique in developing more capable Large Action Models. It combines the learning capabilities of neural networks with the logical reasoning of symbolic AI. By combining the best of both worlds, LAMs can understand language, reason about possible actions, and act on instructions.

The architecture of a Large Action Model can vary depending on the range of tasks it is designed to perform. However, before diving into its components, it helps to understand how LAMs differ from LLMs.

LLMs vs. LAMs

| Feature | Large Language Models (LLMs) | Large Action Models (LAMs) |
|---|---|---|
| What it can do | Language generation | Task execution and completion |
| Input | Textual data | Text, images, instructions, etc. |
| Output | Textual data | Actions, text |
| Training data | Large text corpora | Text, code, images, actions |
| Application areas | Content creation, translation, chatbots | Automation, decision-making, complex interactions |
| Strengths | Language understanding, text generation | Reasoning, planning, decision-making, real-time interaction |
| Weaknesses | Limited reasoning, no ability to take actions | Still under development, ethical concerns |

Now we can look more closely at the specific components of a Large Action Model. These components usually are:

- Pattern recognition: neural networks

- Symbolic AI: logical reasoning

- Action model: task execution (agents)

Neuro-Symbolic Programming

Neuro-symbolic AI combines the ability of neural networks to learn patterns with the reasoning methods of symbolic AI. The result is a powerful synergy that addresses the limitations of each approach.

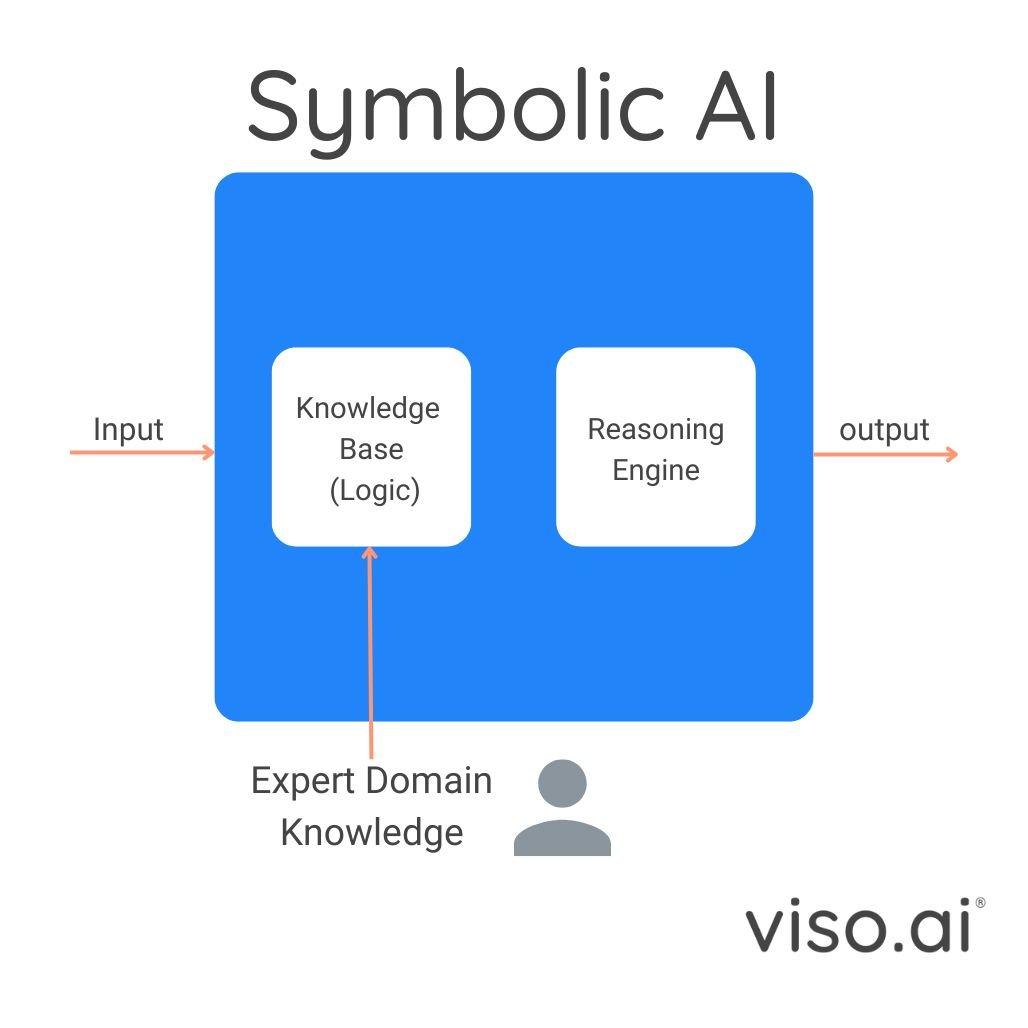

Symbolic AI is often based on logic programming, which is essentially a set of if-then statements. It excels at reasoning and at explaining its decisions. It uses formal languages, such as first-order logic, to represent knowledge, and it uses an inference engine to draw logical conclusions in response to user queries.
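A minimal inference engine can be written as forward chaining over if-then rules. This sketch simplifies first-order logic to propositional facts (plain strings), and the alarm rules are hypothetical. It also records which premises produced each conclusion, which is what allows an output to be traced back to the rules behind it.

```python
# Each rule is (premises, conclusion): IF all premises hold THEN conclude.
Rule = tuple[frozenset[str], str]


def forward_chain(facts: set[str], rules: list[Rule]) -> tuple[set[str], dict[str, frozenset[str]]]:
    """Apply rules until nothing new can be derived; record why each fact was derived."""
    known = set(facts)
    why: dict[str, frozenset[str]] = {}
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                why[conclusion] = premises  # the trace that makes the result explainable
                changed = True
    return known, why


rules: list[Rule] = [
    (frozenset({"is_weekday", "meeting_at_9"}), "set_alarm_7am"),
    (frozenset({"set_alarm_7am"}), "notify_user"),
]
known, why = forward_chain({"is_weekday", "meeting_at_9"}, rules)
print(sorted(known))       # ['is_weekday', 'meeting_at_9', 'notify_user', 'set_alarm_7am']
print(why["notify_user"])  # frozenset({'set_alarm_7am'})
```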

Because outputs can be traced back to the rules and knowledge in the program, symbolic AI models are highly interpretable and explainable. They also let us expand the system’s knowledge as new information becomes available. On its own, however, this approach has limitations:

- New rules do not retract old knowledge, so conclusions that no longer hold stay in the knowledge base.

- Symbols are not linked to learned representations or raw data.
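The first limitation follows from the fact that classical logic is monotonic: adding knowledge can only add conclusions. The sketch below uses a minimal forward chainer and the textbook "Tweety" example, with illustrative facts. Learning that Tweety is a penguin does not retract the earlier conclusion that Tweety flies.

```python
def forward_chain(facts: set[str], rules: list[tuple[set[str], str]]) -> set[str]:
    """Monotonic forward chaining: derived facts are only ever added, never removed."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


rules = [({"bird(tweety)"}, "flies(tweety)")]
before = forward_chain({"bird(tweety)"}, rules)

# New knowledge arrives: Tweety is a penguin, and penguins cannot fly.
rules.append(({"penguin(tweety)"}, "cannot_fly(tweety)"))
after = forward_chain({"bird(tweety)", "penguin(tweety)"}, rules)

# The old conclusion is still derived alongside the new, contradictory one.
print("flies(tweety)" in after, "cannot_fly(tweety)" in after)  # True True
```

The second limitation is also visible here. Nothing connects the string `bird(tweety)` to an image, a sound, or any other raw data; the symbol means something only to the person who wrote it.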

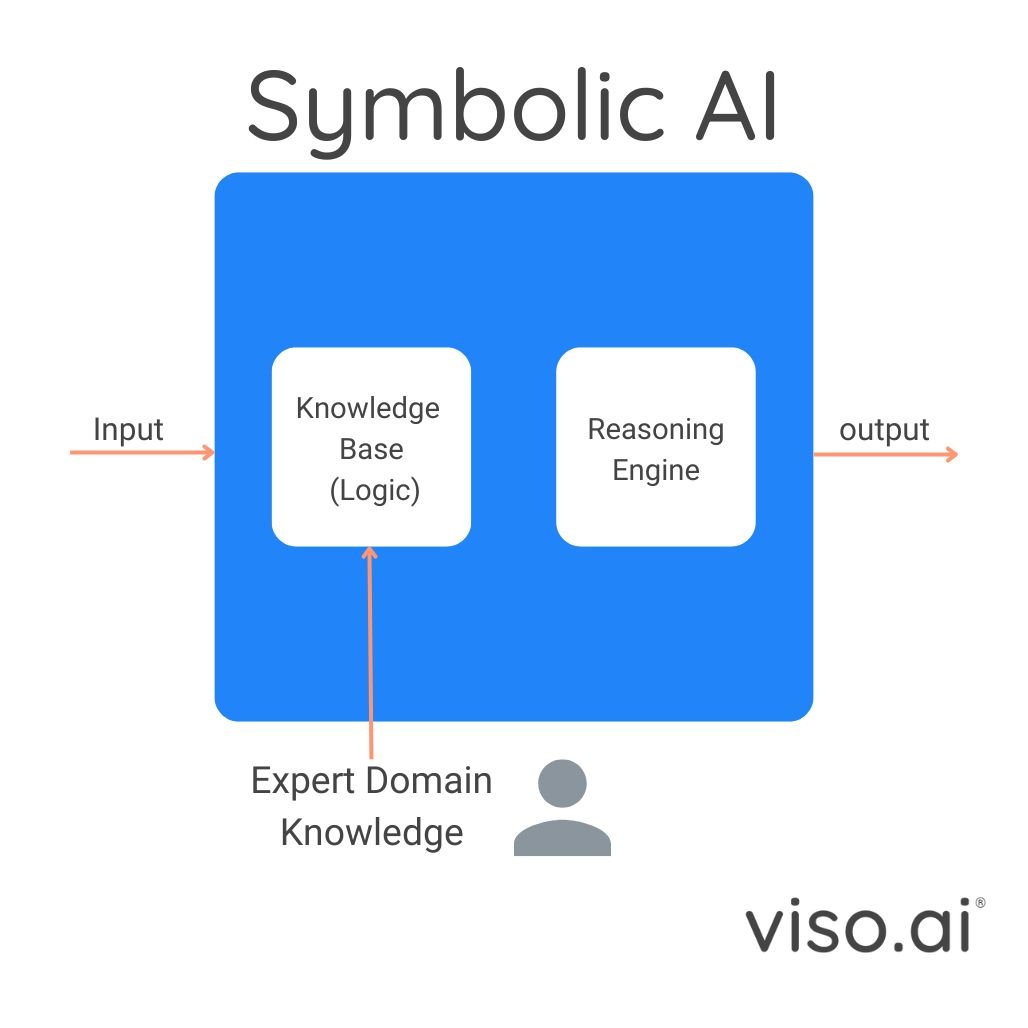

In contrast, the neural side of neuro-symbolic programming involves deep neural networks such as LLMs and vision models. These networks thrive on learning from massive datasets and excel at recognizing patterns in them.

This pattern-recognition capability lets neural networks perform tasks such as image classification, object detection, and next-word prediction in NLP. However, they lack the explicit reasoning, logic, and explainability that symbolic AI offers.

Neuro-symbolic AI aims to merge these two approaches, giving rise to technologies such as Large Action Models (LAMs). These systems combine the pattern-recognition abilities of neural networks with the reasoning capabilities of symbolic AI, which allows them to reason about abstract concepts and produce explainable results.

Neuro-symbolic AI approaches can be broadly divided into two main types:

- Compressing structured symbolic knowledge into a format that can be integrated with the patterns in a neural network. This allows the model to reason using the combined knowledge.

- Extracting information from the patterns a neural network has learned. This extracted information is then mapped to structured symbolic knowledge (a process called lifting) and used for symbolic reasoning.
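Both directions can be shown in miniature. In this sketch, the detector scores are hypothetical, and the 0.8 threshold and the spill-risk rule are illustrative assumptions. Lifting turns neural confidence scores into discrete facts that a rule can reason over. The reverse direction is reduced to a one-hot encoding of facts; real systems would use learned embeddings instead.

```python
def lift(detections: dict[str, float], threshold: float = 0.8) -> set[str]:
    """Lifting: turn a neural model's confidence scores into discrete symbolic facts."""
    return {f"present({label})" for label, score in detections.items() if score >= threshold}


def encode(facts: set[str], vocabulary: list[str]) -> list[int]:
    """Very simplified compression of symbolic facts into a vector a network could consume."""
    return [1 if symbol in facts else 0 for symbol in vocabulary]


detections = {"cup": 0.93, "laptop": 0.88, "cat": 0.41}  # hypothetical object-detector output
facts = lift(detections)

# A symbolic rule applied to the lifted facts.
if {"present(cup)", "present(laptop)"} <= facts:
    facts.add("warn(spill_risk)")

vocab = ["present(cup)", "present(laptop)", "present(cat)", "warn(spill_risk)"]
print(encode(facts, vocab))  # [1, 1, 0, 1]
```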

Action Engine

In Large Action Models (LAMs), neuro-symbolic programming gives neural models such as LLMs the reasoning and planning abilities of symbolic AI methods.

AI agents are then used to execute the generated plans and, in some cases, adapt to new challenges. Open-source LAMs often integrate logic programming with vision and language models, and they connect the software to the tools and APIs of useful apps and services to perform tasks.

Let’s see how these AI agents work.

An AI agent is software that can perceive its environment and take action. Its actions depend on the current state of the environment and on the conditions or knowledge it has been given. Some AI agents can also adapt to changes and learn from their interactions.
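The classic perceive, decide, act loop makes this definition concrete. The thermostat below is a deliberately simple, hypothetical example. The agent reads the environment's state, chooses an action from that state and a given condition (the target temperature), and changes the environment.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Toy environment: a room whose temperature the agent can change."""
    temperature: float

    def apply(self, action: str) -> None:
        if action == "heat":
            self.temperature += 1.0
        elif action == "cool":
            self.temperature -= 1.0


def agent_policy(temperature: float, target: float = 21.0) -> str:
    """Choose an action from the current state and a given condition (the target)."""
    if temperature < target - 0.5:
        return "heat"
    if temperature > target + 0.5:
        return "cool"
    return "idle"


env = Environment(temperature=18.0)
for step in range(5):
    percept = env.temperature       # perceive
    action = agent_policy(percept)  # decide
    env.apply(action)               # act
    print(step, percept, action)
```

This agent follows fixed rules and never learns. An adaptive agent would also update its policy, for example its target, based on feedback from each interaction.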

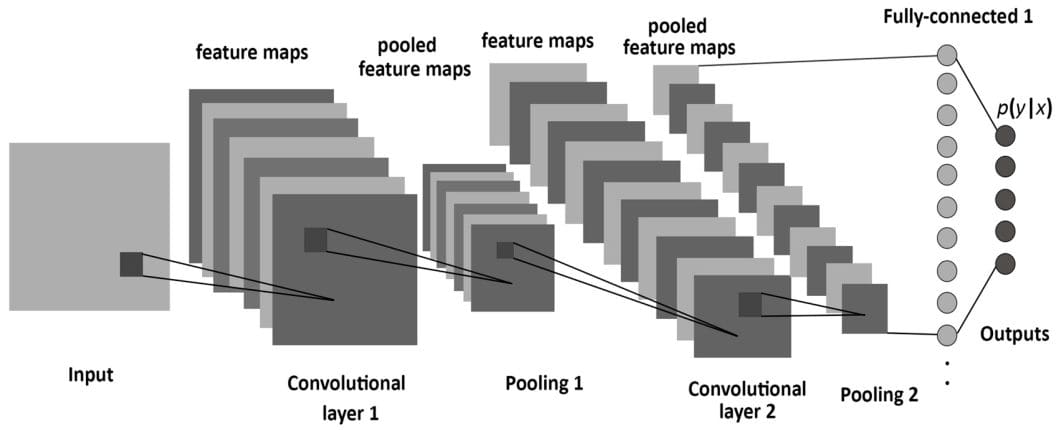

Using the visualization above, let’s walk through how a Large Action Model turns our requests into action:

- Perception: The LAM receives input as voice, text, or visuals, together with a task request.

- Brain: This is the neuro-symbolic AI of the Large Action Model. It can plan and reason, and it can also memorize, learn, or retrieve knowledge.

- Agent: This is how the Large Action Model takes action, whether through a user interface or a device. It analyzes the input task using the brain and then acts on the result.

- Action: This is where the work gets done. The model outputs a combination of text, visuals, and actions. For example, the model might answer a query by using an LLM to generate text and then take action based on the reasoning capabilities of symbolic AI. Acting involves breaking the task down into subtasks and performing each one through the agent software, for example by calling APIs or using apps, tools, and services.
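The four stages above can be wired together in a short sketch. Everything here is hypothetical and heavily simplified. The tools return canned strings instead of calling real weather or messaging APIs. The "brain" is a hard-coded planner standing in for neuro-symbolic planning. A `{previous}` placeholder passes one subtask's output to the next.

```python
from typing import Callable


# Hypothetical tools; a real LAM would wrap app or web APIs here.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"


def send_message(text: str) -> str:
    return f"Sent: {text}"


TOOLS: dict[str, Callable[[str], str]] = {"get_weather": get_weather, "send_message": send_message}


def perceive(raw_input: str) -> str:
    """Perception: normalise the request (speech or images would be converted to text here)."""
    return raw_input.strip().lower()


def brain(request: str) -> list[tuple[str, str]]:
    """Brain: a hard-coded planner standing in for neuro-symbolic planning."""
    if "weather" in request and "tell" in request:
        city = request.split(" in ")[-1].title()
        return [("get_weather", city), ("send_message", "{previous}")]
    return []


def agent(plan: list[tuple[str, str]]) -> list[str]:
    """Agent and action: run each subtask by calling the matching tool."""
    outputs: list[str] = []
    for tool_name, arg in plan:
        arg = arg.replace("{previous}", outputs[-1] if outputs else "")
        outputs.append(TOOLS[tool_name](arg))
    return outputs


print(agent(brain(perceive("  Tell Sam the weather in Paris "))))
# ['Sunny in Paris', 'Sent: Sunny in Paris']
```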

What Can Large Action Models Do?

In principle, Large Action Models (LAMs) can perform almost any task they are trained for. Because they understand human intentions and respond to complex instructions, LAMs can automate both simple and complex tasks and make decisions based on text and visual input. Crucially, LAMs can often build in explainability, which lets us trace their reasoning process.

The Rabbit R1, a handheld device built around a large action model, is one of the most popular examples of this technology and a great showcase of what these models can do. The Rabbit R1 combines:

- Vision tasks

- A web portal for connecting services and applications and for adding new tasks with teach mode

- Teach mode, which lets users instruct and guide the model by performing the task themselves
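The idea behind teaching by demonstration can be sketched as record and replay with parameters. This is a hypothetical illustration and not how Rabbit implements teach mode. The `TeachSession` class, the action names, and the `{query}` placeholder are all assumptions. The user performs a task once, the steps are recorded, and the steps can later be replayed with new inputs.

```python
from dataclasses import dataclass, field


@dataclass
class TeachSession:
    """Hypothetical recorder: stores the steps a user demonstrates so they can be replayed."""
    steps: list[tuple[str, str]] = field(default_factory=list)

    def record(self, action: str, target: str) -> None:
        self.steps.append((action, target))

    def replay(self, **params: str) -> list[str]:
        # Fill placeholders such as {query} so that one demonstration generalises.
        return [f"{action} {target.format(**params)}" for action, target in self.steps]


session = TeachSession()
session.record("open", "https://example.com")
session.record("type", "search_box={query}")
session.record("click", "search_button")

replayed = session.replay(query="running shoes")
print(replayed)
# ['open https://example.com', 'type search_box=running shoes', 'click search_button']
```

A real system must also generalize beyond literal replay, for example by locating the search box even after the page layout changes. That is where the vision and reasoning components come in.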

The term "large action model" already existed and was an active area of research and development, but the Rabbit R1 and its OS popularized it. Open-source alternatives already existed as well. They often use similar principles, combining logic programming with vision and language models to interact with APIs and act on user requests.

Open-Source Models

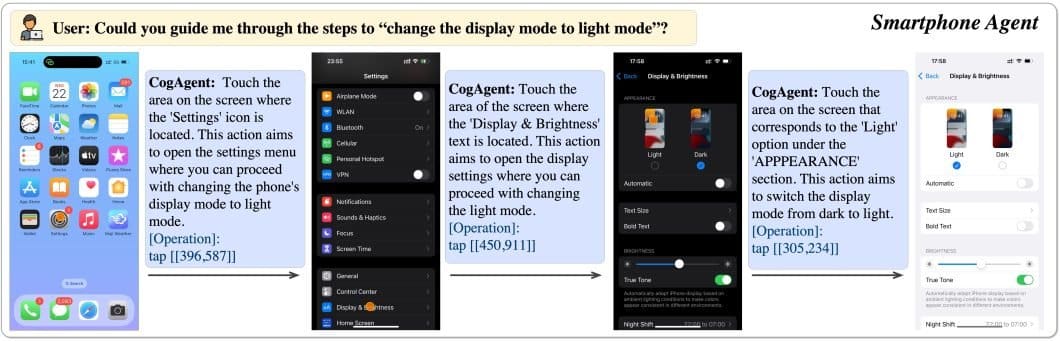

1. CogAgent

CogAgent is an open-source action model based on CogVLM, an open-source vision language model. It works as a visual agent. Given any GUI screenshot, it can generate plans, decide on the next action, and provide precise coordinates for specific operations.
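Before an agent can act on a predicted location, it has to turn that location into a screen click. The sketch below assumes a hypothetical action string of the form `CLICK(box=[[x1,y1,x2,y2]])`, with coordinates normalized to a 0 to 999 grid. It is not CogAgent's documented output format, so check the model card for the real one. The function converts the box into the pixel center to click.

```python
import re


def box_to_click(action: str, width: int, height: int, scale: int = 1000) -> tuple[str, int, int]:
    """Parse a hypothetical 'OP(box=[[x1,y1,x2,y2]])' string and return the box's pixel centre."""
    match = re.match(r"(\w+)\(box=\[\[(\d+),(\d+),(\d+),(\d+)\]\]\)", action)
    if match is None:
        raise ValueError(f"unrecognised action: {action}")
    op = match.group(1)
    x1, y1, x2, y2 = (int(v) for v in match.groups()[1:])
    # Map normalised coordinates (assumed 0..scale) onto the actual screenshot size.
    cx = (x1 + x2) / 2 / scale * width
    cy = (y1 + y2) / 2 / scale * height
    return op, round(cx), round(cy)


print(box_to_click("CLICK(box=[[100,200,300,400]])", width=1920, height=1080))
# ('CLICK', 384, 324)
```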

The model can also perform visual question answering (VQA) and OCR-related tasks on any GUI screenshot.

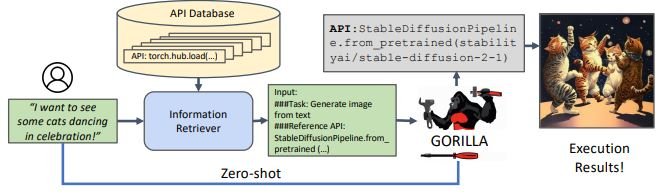

2. Gorilla

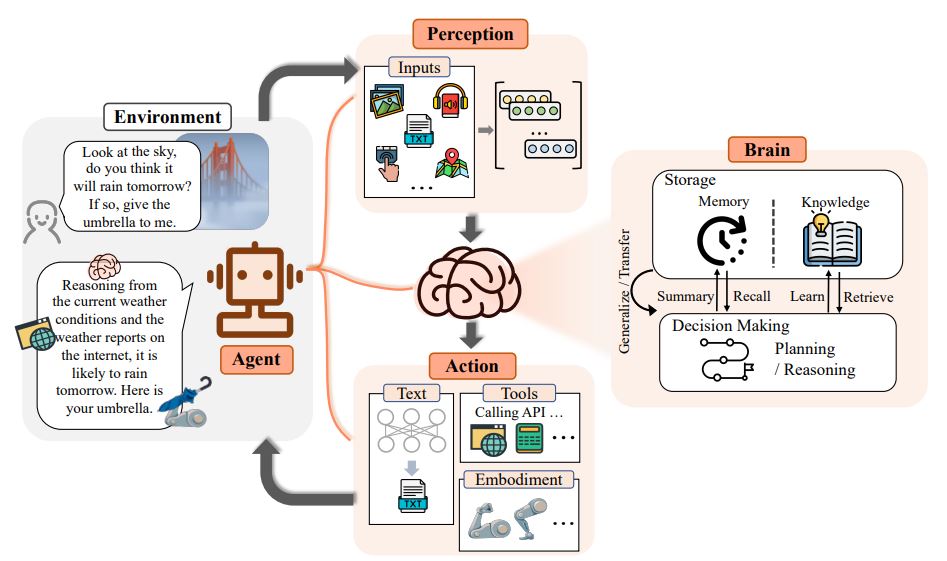

Gorilla is an impressive open-source large action model that lets LLMs use thousands of tools through precise API calls. It works out the required action from a natural language query and then identifies and executes the appropriate API call. This approach has successfully invoked over 1,600 APIs (and counting) with high accuracy while minimizing hallucination.
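To make the core problem concrete, picking the right API for a natural language request, here is a deliberately naive sketch. It is not Gorilla's method: Gorilla uses a fine-tuned LLM, optionally paired with a documentation retriever. Here, the API catalogue is hypothetical, and selection is plain word overlap between the query and each API's documentation.

```python
import re

# Hypothetical API catalogue: signature -> short documentation string.
API_DOCS = {
    "weather.get_forecast(city)": "get the weather forecast for a city",
    "images.generate(prompt)": "generate an image from a text prompt",
    "translate.text(text, target_lang)": "translate text into another language",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def select_api(query: str) -> str:
    """Pick the API whose documentation shares the most words with the query."""
    q = tokens(query)
    return max(API_DOCS, key=lambda api: len(q & tokens(API_DOCS[api])))


print(select_api("Generate an image of a cat wearing a hat"))  # images.generate(prompt)
```

Word overlap breaks down quickly with synonyms and paraphrases. This gap is why approaches like Gorilla train a language model on API documentation instead.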

Gorilla uses its own execution engine, GoEx, as a runtime environment for executing LLM-generated plans, including code execution and API calls.
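A runtime that executes LLM-generated plans needs a way to recover when a step fails partway through. One common safeguard is to pair every action with an undo action. The sketch below shows that general idea only. It does not use GoEx's API, and the calendar and email steps are hypothetical.

```python
from typing import Callable

Step = tuple[str, Callable[[], None], Callable[[], None]]  # (name, do, undo)


def run_plan(steps: list[Step]) -> list[str]:
    """Run steps in order; if one fails, undo the completed ones in reverse order."""
    done: list[Step] = []
    log: list[str] = []
    try:
        for step in steps:
            name, do, _ = step
            do()
            done.append(step)
            log.append(f"did {name}")
    except Exception as exc:
        log.append(f"failed: {exc}")
        for name, _, undo in reversed(done):
            undo()
            log.append(f"undid {name}")
    return log


calendar: list[str] = []


def fail() -> None:
    raise RuntimeError("email API unavailable")


plan: list[Step] = [
    ("create event", lambda: calendar.append("team sync"), lambda: calendar.remove("team sync")),
    ("send invites", fail, lambda: None),
]
log = run_plan(plan)
print(log)       # ['did create event', 'failed: email API unavailable', 'undid create event']
print(calendar)  # []
```

Not every real-world action can be undone (a sent email, for instance). Such steps need other safeguards, such as asking the user for confirmation before running them.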

The visualization above shows a clear example of a large action model at work. The user wants to see a specific image. The model retrieves the required action from its knowledge database and executes the necessary code through an API call, all in a zero-shot manner.

Real-World Applications of Large Action Models

Large Action Models (LAMs) are reaching into many industries and changing how we interact with technology and automate complex tasks. LAMs are showing their worth as a versatile tool.

Let’s look at some areas where large action models can be applied.

- Robotics: Large Action Models could help create more intelligent and autonomous robots that understand instructions and respond to them. This would improve human-robot interaction and open new avenues for automation in manufacturing, healthcare, and even space exploration.

- Customer service and support: Imagine a customer service AI agent that understands a customer’s problem and can take immediate action to solve it. LAMs can make this a reality by streamlining processes such as ticket resolution, refunds, and account updates.

- Finance: In the financial sector, LAMs can analyze complex data guided by expert input and provide personalized recommendations and automation for investments and financial planning.

- Education: Large Action Models could transform education by offering learning experiences tailored to each student’s needs. They can give instant feedback, assess assignments, and generate adaptive educational content.
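The customer service case shows why explicit rules matter when an agent can take real actions. In the sketch below, the 30-day window and the 100.00 auto-refund limit are hypothetical business rules, and the refund is simulated by setting a flag rather than calling a payment API. The agent acts on its own only when every rule is satisfied and escalates to a human otherwise.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Order:
    order_id: str
    amount: float
    purchased: date
    refunded: bool = False


# Hypothetical business rules the agent must satisfy before acting.
MAX_AUTO_REFUND = 100.0
REFUND_WINDOW_DAYS = 30


def handle_refund(order: Order, today: date) -> str:
    if order.refunded:
        return "already refunded"
    if (today - order.purchased).days > REFUND_WINDOW_DAYS:
        return "outside refund window: escalate to a human agent"
    if order.amount > MAX_AUTO_REFUND:
        return "amount too high for automatic refund: escalate to a human agent"
    order.refunded = True  # a real agent would call the payment provider's API here
    return f"refunded {order.amount:.2f} for order {order.order_id}"


order = Order("A123", 49.99, purchased=date(2024, 5, 1))
first = handle_refund(order, today=date(2024, 5, 10))
second = handle_refund(order, today=date(2024, 5, 11))
print(first)   # refunded 49.99 for order A123
print(second)  # already refunded
```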

These examples show just a few of the ways LAMs could transform industries and improve how we interact with technology. Research and development on Large Action Models is still at an early stage, and we can expect it to open up further possibilities.

What’s Next for Large Action Models?

Large Action Models (LAMs) could redefine how we interact with technology and automate tasks across many domains. Their ability to understand instructions, reason logically, make decisions, and execute actions holds immense potential. From better customer service to advances in robotics and education, LAMs offer a glimpse of a future in which AI-powered agents integrate seamlessly into our lives.

As research progresses, we can expect LAMs to become more sophisticated, able to handle even complex high-level tasks and to understand domain-specific instructions. However, with this power comes responsibility. Ensuring that LAMs are used safely, fairly, and ethically is crucial.

Addressing challenges such as bias in training data and potential misuse will be vital as we continue to develop and deploy these powerful models. The future of LAMs looks bright. As they evolve, these models could help shape a more efficient, productive, and human-centered technological landscape.