Even as the world bears witness to the power struggle and mass resignation at OpenAI, Microsoft, the long-time backer of the AI major, is not slowing down its own AI efforts. Today, the research arm of the Satya Nadella-led company released Orca 2, a pair of small language models that either match or outperform language models five to ten times larger, including Meta’s Llama-2 Chat-70B, when tested on complex reasoning tasks in zero-shot settings.

The models come in two sizes, 7 billion and 13 billion parameters, and build on the work done on the original 13B Orca model, which a few months ago demonstrated strong reasoning abilities by imitating the step-by-step reasoning traces of bigger, more capable models.

“With Orca 2, we continue to show that improved training signals and methods can empower smaller language models to achieve enhanced reasoning abilities, which are typically found only in much larger language models,” Microsoft researchers wrote in a joint blog post.

The company has open-sourced both new models for further research on the development and evaluation of smaller models that can perform just as well as bigger ones. This work can give enterprises, particularly those with limited resources, a better option for addressing their targeted use cases without investing too much in computing capacity.

Teaching small models how to reason

While large language models such as GPT-4 have long impressed enterprises and individuals with their ability to reason and answer complex questions with explanations, their smaller counterparts have largely lacked that ability. Microsoft Research decided to tackle this gap by fine-tuning Llama 2 base models on a highly tailored synthetic dataset.

However, instead of training the small models to replicate the behavior of more capable models (a commonly used technique known as imitation learning), the researchers trained the models to employ different solution strategies for different tasks. The idea was that a larger model’s strategy may not always work well for a smaller one. For example, GPT-4 may be able to answer complex questions directly, but a smaller model, without that kind of capacity, might benefit from breaking the same task into a few steps.

“In Orca 2, we teach the model various reasoning techniques (step-by-step, recall then generate, recall-reason-generate, direct answer, etc.). More crucially, we aim to help the model learn to determine the most effective solution strategy for each task,” the researchers wrote in a paper published today. The training data for the project was obtained from a more capable teacher model in such a way that it teaches the student model to handle both aspects: how to use a reasoning strategy and when exactly to use it for a given task.
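To make the teacher-student setup more concrete, here is a minimal Python sketch of how one such training example might be assembled. It is an illustration under stated assumptions, not Microsoft's actual pipeline: the instruction wording, the `build_example` helper, and the `stub_teacher` stand-in are all hypothetical, and the choice to show the student only the bare task (so that it has to learn which strategy fits) is an assumption about how the "when to use it" part could be taught.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical instructions, one per strategy named in the researchers' quote.
# The wording is invented for illustration only.
STRATEGY_INSTRUCTIONS = {
    "step-by-step": "Solve the problem step by step, then state the answer.",
    "recall-then-generate": "Recall the relevant facts first, then write the answer.",
    "recall-reason-generate": "Recall the facts, reason over them, then write the answer.",
    "direct-answer": "Answer directly, without explanation.",
}


@dataclass
class TrainingExample:
    prompt: str    # what the student model is shown
    response: str  # what the student model is trained to produce


def build_example(task: str, strategy: str,
                  teacher: Callable[[str], str]) -> TrainingExample:
    """Ask the teacher to solve `task` with a given strategy.

    Assumption: the strategy instruction is shown to the teacher only.
    The student sees the bare task plus the teacher's response.
    """
    if strategy not in STRATEGY_INSTRUCTIONS:
        raise ValueError(f"unknown strategy: {strategy}")
    teacher_prompt = f"{STRATEGY_INSTRUCTIONS[strategy]}\n\n{task}"
    return TrainingExample(prompt=task, response=teacher(teacher_prompt))


def stub_teacher(prompt: str) -> str:
    """Stand-in for a call to a more capable model (hypothetical)."""
    instruction = prompt.splitlines()[0]
    return f"[teacher response following: {instruction}]"


example = build_example("What is 17 * 6?", "step-by-step", stub_teacher)
print(example.prompt)
print(example.response)
```

In a real setting, `stub_teacher` would be replaced by a call to the teacher model, and the strategy for each task would be chosen by whoever curates the dataset.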

Orca 2 performs better than larger models

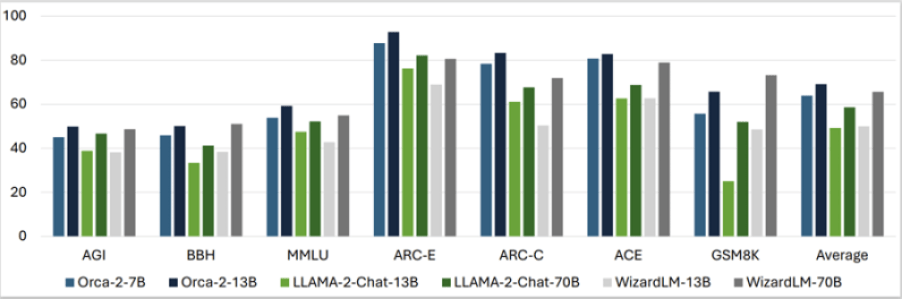

When tested on 15 diverse benchmarks (in zero-shot settings) covering aspects such as language understanding, common-sense reasoning, multi-step reasoning, math problem solving, reading comprehension, summarization and truthfulness, the Orca 2 models largely matched or outperformed models that are five to ten times bigger in size.

The average of all the benchmark results showed that Orca 2 7B and 13B outperformed Llama-2-Chat-13B and 70B and WizardLM-13B and 70B. Only on the GSM8K benchmark, which consists of 8.5K high-quality grade school math problems, did WizardLM-70B do convincingly better than the Orca and Llama models.

While the performance is good news for enterprise teams that may want a small, high-performing model for cost-effective business applications, it is important to note that these models can also inherit limitations common to other language models, as well as those of the base model they were fine-tuned from.

Microsoft added that the technique used to create the Orca models can also be applied to other base models.

“While it has several limitations…, Orca 2’s potential for future advancements is evident, especially in improved reasoning, specialization, control, and safety of smaller models. The use of carefully filtered synthetic data for post-training emerges as a key strategy in these improvements. As larger models continue to excel, our work with Orca 2 marks a significant step in diversifying the applications and deployment options of language models,” the research team wrote.

More small, high-performing models to crop up

With the release of the open-source Orca 2 models and the ongoing research in the space, it is safe to say that more high-performing small language models are likely to crop up in the near future.

Only a few weeks again, China’s lately turned unicorn 01.AI, based by veteran AI professional Kai-Fu Lee, additionally took a serious step on this space with the discharge of a 34-billion parameter mannequin that helps Chinese language and English and outperforms the 70-billion Llama 2 and 180-billion Falcon counterparts. The startup additionally presents a smaller choice that has been skilled with 6 billion parameters and performs respectably on extensively used AI/ML mannequin benchmarks.

Mistral AI, the six-month-old Paris-based startup that made headlines with its unique Word Art logo and a record-setting $118 million seed round, also offers a 7 billion parameter model that outperforms bigger offerings, including Meta’s Llama 2 13B (one of the smaller of Meta’s newer models).