Nvidia announced that the Nvidia GH200 Grace Hopper Superchip is in full production, set to power systems that run complex AI programs.

Also targeted at high-performance computing (HPC) workloads, the GH200-powered systems join more than 400 system configurations based on Nvidia’s latest CPU and GPU architectures, including Nvidia Grace, Nvidia Hopper and Nvidia Ada Lovelace, created to help meet the surging demand for generative AI.

At the Computex trade show in Taiwan, Nvidia CEO Jensen Huang revealed new systems, partners and additional details surrounding the GH200 Grace Hopper Superchip, which brings together the Arm-based Nvidia Grace CPU and Hopper GPU architectures using Nvidia NVLink-C2C interconnect technology.

This delivers up to 900GB/s total bandwidth, or seven times higher bandwidth than the standard PCIe Gen5 lanes found in traditional accelerated systems, providing incredible compute capability to address the most demanding generative AI and HPC applications.
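As a rough sanity check on the 7x figure, the sketch below backs out the PCIe baseline implied by the two numbers in the text and compares it with a typical link. The article does not say which PCIe configuration Nvidia used for the comparison; the x16 Gen5 link at 32 GT/s per lane with 128b/130b encoding, counted in both directions, is an assumption made here for illustration.

```python
# Figures quoted in the article.
NVLINK_C2C_GBPS = 900.0   # total NVLink-C2C bandwidth, GB/s
CLAIMED_SPEEDUP = 7.0     # "seven times higher" than PCIe Gen5

# Assumptions (not from the article): a x16 PCIe Gen5 link,
# 32 GT/s per lane, 128b/130b line encoding, both directions counted.
PCIE_GEN5_GT_PER_LANE = 32.0
PCIE_LANES = 16
ENCODING_EFFICIENCY = 128.0 / 130.0


def pcie_bidirectional_gbps(gt_per_lane: float, lanes: int, efficiency: float) -> float:
    """Approximate usable bandwidth of a PCIe link in GB/s, both directions."""
    per_direction = gt_per_lane * lanes * efficiency / 8.0  # bits to bytes
    return 2.0 * per_direction


# Baseline implied by the article's own numbers.
implied_baseline = NVLINK_C2C_GBPS / CLAIMED_SPEEDUP

# Baseline under the assumed x16 Gen5 link, and the resulting ratio.
assumed_pcie = pcie_bidirectional_gbps(
    PCIE_GEN5_GT_PER_LANE, PCIE_LANES, ENCODING_EFFICIENCY
)
ratio = NVLINK_C2C_GBPS / assumed_pcie

print(f"Implied PCIe baseline: {implied_baseline:.1f} GB/s")
print(f"Assumed x16 Gen5 link: {assumed_pcie:.1f} GB/s")
print(f"Ratio under that assumption: {ratio:.2f}x")
```

Under these assumptions the implied baseline and the assumed link land within a few GB/s of each other, which suggests the 7x claim is measured against a full x16 Gen5 connection.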

“Generative AI is rapidly transforming businesses, unlocking new opportunities and accelerating discovery in healthcare, finance, business services and many more industries,” said Ian Buck, vice president of accelerated computing at Nvidia, in a statement. “With Grace Hopper Superchips in full production, manufacturers worldwide will soon provide the accelerated infrastructure enterprises need to build and deploy generative AI applications that leverage their unique proprietary data.”

Global hyperscalers and supercomputing centers in Europe and the U.S. are among several customers that will have access to GH200-powered systems.

“We’re all experiencing the joy of what giant AI models can do,” Buck said in a press briefing.

Hundreds of accelerated systems and cloud instances

Taiwan manufacturers are among the many system manufacturers worldwide introducing systems powered by the latest Nvidia technology, including Aaeon, Advantech, Aetina, ASRock Rack, Asus, Gigabyte, Ingrasys, Inventec, Pegatron, QCT, Tyan, Wistron and Wiwynn.

Additionally, global server manufacturers Cisco, Dell Technologies, Hewlett Packard Enterprise, Lenovo, Supermicro, and Eviden, an Atos company, offer a broad array of Nvidia-accelerated systems.

Cloud partners for Nvidia H100 include Amazon Web Services (AWS), Cirrascale, CoreWeave, Google Cloud, Lambda, Microsoft Azure, Oracle Cloud Infrastructure, Paperspace and Vultr.

Nvidia AI Enterprise, the software layer of the Nvidia AI platform, offers over 100 frameworks, pretrained models and development tools to streamline development and deployment of production AI, including generative AI, computer vision and speech AI.

Systems with GH200 Superchips are expected to be available beginning later this year.

Nvidia unveils MGX server specification

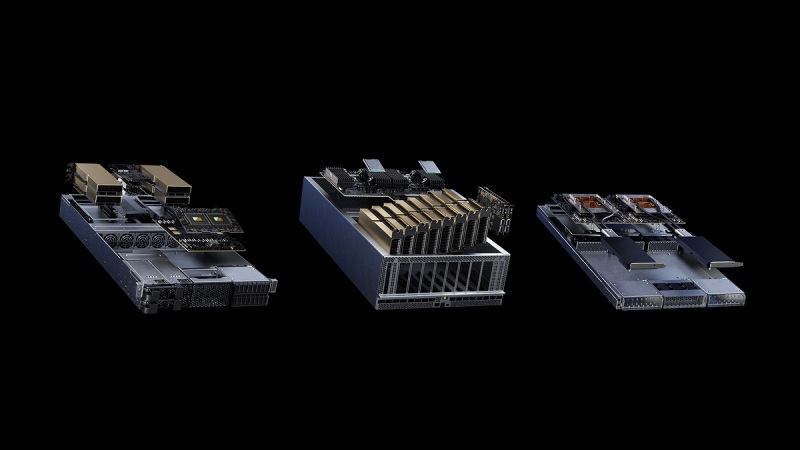

To meet the diverse accelerated computing needs of data centers, Nvidia today unveiled the Nvidia MGX server specification, which provides system manufacturers with a modular reference architecture to quickly and cost-effectively build more than 100 server variations to suit a wide range of AI, high performance computing and Omniverse applications.

ASRock Rack, ASUS, GIGABYTE, Pegatron, QCT and Supermicro will adopt MGX, which can slash development costs by up to three-quarters and reduce development time by two-thirds to just six months.

“Enterprises are seeking more accelerated computing options when architecting data centers that meet their specific business and application needs,” said Kaustubh Sanghani, vice president of GPU products at Nvidia, in a statement. “We created MGX to help organizations bootstrap enterprise AI, while saving them significant amounts of time and money.”

With MGX, manufacturers start with a basic system architecture optimized for accelerated computing for their server chassis, and then select their GPU, DPU and CPU. Design variations can address unique workloads, such as HPC, data science, large language models, edge computing, graphics and video, enterprise AI, and design and simulation.

Multiple tasks like AI training and 5G can be handled on a single machine, while upgrades to future hardware generations can be frictionless. MGX can also be easily integrated into cloud and enterprise data centers, Nvidia said.

QCT and Supermicro will be the first to market, with MGX designs appearing in August. Supermicro’s ARS-221GL-NR system, announced today, will include the Nvidia Grace CPU Superchip, while QCT’s S74G-2U system, also announced today, will use the Nvidia GH200 Grace Hopper Superchip.

Additionally, SoftBank plans to roll out multiple hyperscale data centers across Japan and use MGX to dynamically allocate GPU resources between generative AI and 5G applications.

“As generative AI permeates across business and consumer life, building the right infrastructure for the right cost is one of network operators’ greatest challenges,” said Junichi Miyakawa, CEO at SoftBank, in a statement. “We expect that Nvidia MGX can tackle such challenges and allow for multi-use AI, 5G and more depending on real-time workload requirements.”

MGX differs from Nvidia HGX in that it offers flexible, multi-generational compatibility with Nvidia products to ensure that system builders can reuse existing designs and easily adopt next-generation products without expensive redesigns. In contrast, HGX is based on an NVLink-connected multi-GPU baseboard tailored to scale to create the ultimate in AI and HPC systems.

Nvidia announces DGX GH200 AI supercomputer

Nvidia also announced a new class of large-memory AI supercomputer, an Nvidia DGX supercomputer powered by Nvidia GH200 Grace Hopper Superchips and the Nvidia NVLink Switch System, created to enable the development of giant, next-generation models for generative AI language applications, recommender systems and data analytics workloads.

The Nvidia DGX GH200’s shared memory space uses NVLink interconnect technology with the NVLink Switch System to combine 256 GH200 Superchips, allowing them to perform as a single GPU. This provides 1 exaflop of performance and 144 terabytes of shared memory, nearly 500x more memory than in a single Nvidia DGX A100 system.
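The headline numbers can be broken down per superchip with simple arithmetic. The sketch below is illustrative only: it assumes binary units (1 TB = 1,024 GB) and a DGX A100 baseline of 320GB of GPU memory (eight 40GB GPUs), neither of which the article states, and it does not model the numeric precision behind the exaflop figure.

```python
# Figures quoted in the article.
SUPERCHIPS = 256
SHARED_MEMORY_TB = 144
TOTAL_EXAFLOPS = 1.0

# Assumptions (not from the article): binary units, and a DGX A100
# baseline of 8 GPUs x 40 GB = 320 GB of GPU memory.
GB_PER_TB = 1024
DGX_A100_MEMORY_GB = 8 * 40

total_memory_gb = SHARED_MEMORY_TB * GB_PER_TB

# Memory contributed by each superchip to the shared pool.
per_superchip_gb = total_memory_gb / SUPERCHIPS

# Shared memory relative to the assumed DGX A100 baseline.
memory_ratio = total_memory_gb / DGX_A100_MEMORY_GB

# Compute per superchip, in petaflops (1 exaflop = 1,000 petaflops).
per_superchip_pflops = TOTAL_EXAFLOPS * 1000 / SUPERCHIPS

print(f"Memory per superchip: {per_superchip_gb:.0f} GB")
print(f"Memory vs. DGX A100:  {memory_ratio:.1f}x")
print(f"Compute per superchip: {per_superchip_pflops:.2f} petaflops")
```

Under these assumptions each superchip contributes 576GB, in line with the roughly 600GB building block the article cites, and the memory ratio comes out near 460x, which fits the "nearly 500x" description.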

“Generative AI, large language models and recommender systems are the digital engines of the modern economy,” said Huang. “DGX GH200 AI supercomputers integrate Nvidia’s most advanced accelerated computing and networking technologies to expand the frontier of AI.”

GH200 superchips eliminate the need for a traditional CPU-to-GPU PCIe connection by combining an Arm-based Nvidia Grace CPU with an Nvidia H100 Tensor Core GPU in the same package, using Nvidia NVLink-C2C chip interconnects. This increases the bandwidth between GPU and CPU by 7x compared with the latest PCIe technology, slashes interconnect power consumption by more than 5x, and provides a 600GB Hopper architecture GPU building block for DGX GH200 supercomputers.

DGX GH200 is the first supercomputer to pair Grace Hopper Superchips with the Nvidia NVLink Switch System, a new interconnect that enables all GPUs in a DGX GH200 system to work together as one. The previous generation system only provided for eight GPUs to be combined with NVLink as one GPU without compromising performance.

The DGX GH200 architecture provides 10 times more bandwidth than the previous generation, delivering the power of a massive AI supercomputer with the simplicity of programming a single GPU.

Google Cloud, Meta and Microsoft are among the first expected to gain access to the DGX GH200 to explore its capabilities for generative AI workloads. Nvidia also intends to provide the DGX GH200 design as a blueprint to cloud service providers and other hyperscalers so they can further customize it for their infrastructure.

“Building advanced generative models requires innovative approaches to AI infrastructure,” said Mark Lohmeyer, vice president of Compute at Google Cloud, in a statement. “The new NVLink scale and shared memory of Grace Hopper Superchips address key bottlenecks in large-scale AI and we look forward to exploring its capabilities for Google Cloud and our generative AI initiatives.”

Nvidia DGX GH200 supercomputers are expected to be available by the end of the year.

Finally, Huang announced that a new supercomputer called Nvidia Taipei-1 will bring more accelerated computing resources to Asia to advance the development of AI and industrial metaverse applications.

Taipei-1 will expand the reach of the Nvidia DGX Cloud AI supercomputing service into the region with 64 DGX H100 AI supercomputers. The system will also include 64 Nvidia OVX systems to accelerate local research and development, and Nvidia networking to power efficient accelerated computing at any scale.

Owned and operated by Nvidia, the system is expected to come online later this year.

Leading Taiwan education and research institutes will be among the first to access Taipei-1 to advance healthcare, large language models, climate science, robotics, smart manufacturing and industrial digital twins. National Taiwan University plans to study large language model speech learning as its initial Taipei-1 project.

“National Taiwan University researchers are dedicated to advancing science across a broad range of disciplines, a commitment that increasingly requires accelerated computing,” said Shao-Hua Sun, assistant professor, Electrical Engineering Department at National Taiwan University, in a statement. “The Nvidia Taipei-1 supercomputer will help our researchers, faculty and students leverage AI and digital twins to address complex challenges across many industries.”