Over the past few years, Artificial Intelligence (AI) and Machine Learning (ML) have witnessed a meteoric rise in popularity and applications, not only in industry but also in academia. However, today’s ML and AI models have one major limitation: they require an immense amount of computing and processing power to achieve the desired results and accuracy. This often confines their use to high-capability devices with substantial computing power.

But given the advancements made in embedded system technology, and the substantial growth of the Internet of Things industry, it is desirable to incorporate ML techniques and concepts into resource-constrained embedded systems for ubiquitous intelligence. The desire to bring ML concepts to embedded and IoT systems is the primary motivating factor behind the development of TinyML, an embedded ML technique that allows ML models and applications to run on many resource-constrained, power-constrained, and cheap devices.

However, implementing ML on resource-constrained devices has not been simple, because running ML models on devices with low computing power presents its own challenges in terms of optimization, processing capacity, reliability, maintenance of models, and more.

In this article, we will take a deeper dive into TinyML and learn more about its background, the tools supporting it, and its applications using advanced technologies. So let’s start.

An Introduction to TinyML: Why the World Needs TinyML

Internet of Things (IoT) devices aim to leverage edge computing, a computing paradigm that refers to a range of devices and networks near the user that enable seamless, real-time processing of data from millions of interconnected sensors and devices. One of the major advantages of IoT devices is that they require little computing and processing power because they are deployed at the network edge, and hence they have a low memory footprint.

Furthermore, IoT devices rely heavily on edge platforms to collect and transmit data: the edge devices gather sensory data and then transmit it either to a nearby location or to cloud platforms for processing. Edge computing technology stores the data and performs computation on it, and also provides the necessary infrastructure to support distributed computing.

The implementation of edge computing in IoT devices provides:

- Effective security, privacy, and reliability for end users.

- Lower delay.

- Higher availability and throughput for applications and services.

Furthermore, because edge devices can take a collaborative approach between the sensors and the cloud, data processing can be performed at the network edge instead of on the cloud platform. This can result in effective data management, data persistence, effective delivery, and content caching. Additionally, for IoT applications that deal with H2M (Human-to-Machine) interaction and modern healthcare, edge computing provides a way to improve network services significantly.

Recent research in the field of IoT edge computing has demonstrated the potential to implement Machine Learning techniques in several IoT use cases. However, the major issue is that traditional machine learning models often require strong computing and processing power and high memory capacity, which limits the implementation of ML models in IoT devices and applications.

Furthermore, edge computing technology today lacks high transmission capacity and effective power savings, which leads to heterogeneous systems. This is the main reason behind the need for a harmonious and holistic infrastructure, mainly for updating, training, and deploying ML models. The architecture designed for embedded devices poses another challenge, as these architectures depend on hardware and software requirements that vary from device to device. This is the major reason why it is difficult to build a standard ML architecture for IoT networks.

Also, in the current scenario, the data generated by different devices is sent to cloud platforms for processing because of the computationally intensive nature of network implementations. Furthermore, ML models often depend on Deep Learning, Deep Neural Networks, Application-Specific Integrated Circuits (ASICs), and Graphics Processing Units (GPUs) for processing the data, and they often have higher power and memory requirements. Deploying full-fledged ML models on IoT devices is not a viable solution because of the evident lack of computing and processing power and the limited storage.

The demand to miniaturize low-power embedded devices, coupled with optimizing ML models to make them more power- and memory-efficient, has paved the way for TinyML, which aims to implement ML models and practices on edge IoT devices and frameworks. TinyML enables signal processing on IoT devices and provides embedded intelligence, thus eliminating the need to transfer data to cloud platforms for processing. Successful implementation of TinyML on IoT devices can ultimately result in increased privacy and efficiency while reducing operating costs. Additionally, what makes TinyML more appealing is that in case of inadequate connectivity, it can provide on-premise analytics.

TinyML: Introduction and Overview

TinyML is a machine learning tool that can perform on-device analytics for different sensing modalities like audio, vision, and speech. ML models built with TinyML have low power, memory, and computing requirements, which makes them suitable for embedded networks and devices that operate on battery power. Additionally, TinyML’s low requirements make it an ideal fit for deploying ML models on IoT frameworks.

In the current scenario, cloud-based ML systems face several difficulties, including security and privacy concerns, high power consumption, dependability, and latency problems, which is why models are pre-installed on hardware-software platforms. Sensors gather data that represents the physical world, and this data is then processed using a CPU or MPU (microprocessing unit). The MPU caters to the needs of ML analytic support enabled by edge-aware ML networks and architecture. The edge ML architecture communicates with the ML cloud to transfer data, and the implementation of TinyML can advance the technology significantly.

It would be safe to say that TinyML is an amalgamation of software, hardware, and algorithms that work in sync with each other to deliver the desired performance. Analog or memory computing might be required to provide a better and more effective learning experience for hardware and IoT devices that do not support hardware accelerators. As far as software is concerned, applications built using TinyML can be deployed and implemented on platforms like Linux or embedded Linux, and on cloud-enabled software. Finally, applications and systems built on TinyML must be supported by new algorithms that need small models to avoid high memory consumption.

To sum things up, applications built using TinyML must optimize ML principles and methods and design the software compactly, in the presence of high-quality data. This data must then be flashed through binary files that are generated using models trained on machines with much larger capacity and computing power.
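
As a rough illustration of the flashing step, one common way to get a trained model onto a microcontroller is to embed the serialized model bytes in the firmware as a C array. The sketch below assumes that approach; the function name `bytes_to_c_array`, the array name `model_data`, and the stand-in model bytes are all hypothetical and not taken from any specific toolchain.

```python
def bytes_to_c_array(data: bytes, name: str = "model_data", per_line: int = 12) -> str:
    """Render raw bytes as a C source snippet that can be compiled into firmware."""
    lines = []
    for i in range(0, len(data), per_line):
        chunk = data[i:i + per_line]
        # Each byte becomes a two-digit hex literal such as 0x1f.
        lines.append("  " + ", ".join(f"0x{b:02x}" for b in chunk) + ",")
    body = "\n".join(lines)
    return (
        f"const unsigned char {name}[] = {{\n{body}\n}};\n"
        f"const unsigned int {name}_len = {len(data)};\n"
    )


if __name__ == "__main__":
    # Stand-in for a model that was trained and serialized on a larger machine.
    fake_model = bytes(range(16))
    print(bytes_to_c_array(fake_model))
```

The generated text can be saved as a `.c` file and linked into the device image, so the model is stored alongside the code rather than loaded from a file system.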

Additionally, systems and applications running on TinyML must provide high accuracy when performing under tighter constraints, because compact software is required for the low power consumption that TinyML implies. Furthermore, TinyML applications or modules may depend on battery power to support their operations on edge embedded systems.

With that being said, TinyML applications have two fundamental requirements:

- Ability to scale to billions of cheap embedded systems.

- Storing the code in device RAM with a capacity of under a few KB.

Applications of TinyML Using Advanced Technologies

One of the major reasons why TinyML is a hot topic in the AI and ML industry is its potential applications, including vision- and speech-based applications, health diagnosis, data pattern compression and classification, brain-computer interfaces, edge computing, phenomics, self-driving cars, and more.

Speech-Based Applications

Speech Communications

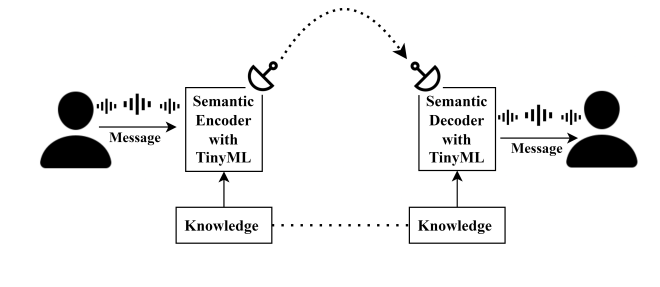

Typically, speech-based applications rely on conventional communication methods in which all the data is important, and all of it is transmitted. However, in recent years, semantic communication has emerged as an alternative to conventional communication, because in semantic communication only the meaning or context of the data is transmitted. Semantic communication can be implemented across speech-based applications using TinyML methodologies.

Some of the most popular applications in the speech communications industry today are speech detection, speech recognition, online learning, online teaching, and goal-oriented communication. These applications typically have higher power consumption, and they also have high data requirements on the host device. To overcome these requirements, a new TinySpeech library has been introduced that allows developers to build a low-computation architecture that uses deep convolutional networks while keeping storage requirements low.

To use TinyML for speech enhancement, developers first addressed the sizing of the speech enhancement model because it was subject to hardware limitations and constraints. To tackle the issue, structured pruning and integer quantization were applied to an RNN (Recurrent Neural Network) speech enhancement model. The results suggested that the size of the model could be reduced by almost 12x and the number of operations by almost 3x. Additionally, it is vital that resources be utilized effectively, especially on resource-constrained devices that execute voice-recognition applications.
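
To make the two techniques concrete, here is a minimal sketch of structured pruning and integer quantization on a small weight matrix. It is not the method used in the work described above: it assumes the simplest variants (dropping whole rows by L2 norm, and symmetric per-tensor int8 quantization), and the helper names `prune_rows`, `quantize_int8`, and `dequantize` are made up for illustration.

```python
def prune_rows(matrix, keep):
    """Structured pruning: keep the `keep` rows with the largest L2 norm."""
    norms = [sum(v * v for v in row) for row in matrix]
    ranked = sorted(range(len(matrix)), key=lambda i: norms[i], reverse=True)[:keep]
    # Preserve the original row order among the survivors.
    return [matrix[i] for i in sorted(ranked)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 values back to approximate float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    layer = [[0.5, -1.0], [0.01, 0.02], [2.0, 0.0]]
    pruned = prune_rows(layer, keep=2)          # removes the near-zero row
    flat = [w for row in pruned for w in row]
    q, scale = quantize_int8(flat)              # 1 byte per weight instead of 4
    print(pruned, q, dequantize(q, scale))
```

Removing whole rows shrinks the layer's shape, which cuts both storage and operations, while quantization stores each remaining weight in one byte at the cost of a rounding error of at most half a quantization step.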

Consequently, to partition the process, a co-design method was proposed for TinyML-based voice and speech recognition applications. The developers used a windowing operation to partition software and hardware in a way that pre-processes the raw voice data. The method appeared to work, as the results indicated a decrease in energy consumption on the hardware. Finally, there is also potential to implement optimized partitioning between software and hardware co-design for better performance in the near future.
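
The text does not spell out the windowing operation, so the following is only a generic sketch of what windowing raw audio usually means: slicing the sample stream into overlapping frames and tapering each frame with a window function. The Hamming window, the frame length, and the hop size are assumptions chosen for illustration, as are the function names.

```python
import math


def frame_signal(samples, frame_len, hop):
    """Split a sample sequence into overlapping frames of `frame_len` samples."""
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frames.append(samples[start:start + frame_len])
    return frames


def hamming(n):
    """Hamming window coefficients for a frame of n samples."""
    if n == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def windowed_frames(samples, frame_len, hop):
    """Apply the window to every frame, ready for feature extraction."""
    w = hamming(frame_len)
    return [[s * c for s, c in zip(frame, w)] for frame in frame_signal(samples, frame_len, hop)]


if __name__ == "__main__":
    raw = [float(i % 8) for i in range(32)]     # stand-in for raw voice samples
    for frame in windowed_frames(raw, frame_len=8, hop=4):
        print([round(v, 2) for v in frame])
```

Because each frame is small and independent, this stage is a natural boundary for deciding which part of the pipeline runs in software and which part is handed to dedicated hardware.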

Furthermore, recent research has proposed the use of a phone-based transducer for speech recognition systems, and the proposal aims to replace LSTM predictors with a Conv1D layer to reduce the computation needs on edge devices. When implemented, the proposal returned positive results, as SVD (Singular Value Decomposition) compressed the model successfully, while the use of WFST (Weighted Finite State Transducer) based decoding resulted in more flexibility in model improvement bias.

A lot of prominent applications of speech recognition, like virtual or voice assistants, live captioning, and voice commands, use ML techniques to work. Popular voice assistants such as Siri and Google Assistant ping the cloud platform every time they receive data, which creates significant concerns related to privacy and data security. TinyML is a viable solution to the issue, as it aims to perform speech recognition on devices and eliminate the need to migrate data to cloud platforms. One of the ways to achieve on-device speech recognition is to use Tiny Transducer, a speech recognition model that uses a DFSMN (Deep Feed-Forward Sequential Memory Block) layer coupled with one Conv1D layer instead of LSTM layers to bring down the computation requirements and the number of network parameters.
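
A quick parameter count shows why swapping an LSTM layer for a Conv1D layer can shrink a model. The formulas below are the standard ones for a single LSTM layer (four gates, one bias vector per gate) and a 1-D convolution with bias; the layer sizes are hypothetical and are not the dimensions of Tiny Transducer.

```python
def lstm_params(input_size: int, hidden_size: int) -> int:
    """Weights and biases of one LSTM layer: 4 gates, each seeing input and state."""
    return 4 * (hidden_size * (input_size + hidden_size) + hidden_size)


def conv1d_params(in_channels: int, out_channels: int, kernel_size: int) -> int:
    """Weights and biases of one 1-D convolution layer."""
    return kernel_size * in_channels * out_channels + out_channels


if __name__ == "__main__":
    # Hypothetical sizes, chosen only to compare like with like.
    lstm = lstm_params(input_size=256, hidden_size=256)
    conv = conv1d_params(in_channels=256, out_channels=256, kernel_size=3)
    print(f"LSTM:   {lstm} parameters")
    print(f"Conv1D: {conv} parameters")
    print(f"ratio:  {lstm / conv:.2f}x")
```

With these example sizes the convolution needs well under half the parameters of the LSTM, and it also avoids the step-by-step recurrence that makes LSTMs costly on small processors.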

Hearing Aids

Hearing loss is a major health concern across the globe. People’s ability to hear sounds generally weakens as they age, and it is a major problem in countries dealing with aging populations, including China, Japan, and South Korea. Hearing aid devices currently work on the simple principle of amplifying all the input sounds from the surroundings, which makes it difficult for the person to distinguish the desired sound, especially in a noisy environment.

TinyML might be a viable solution for this issue, as using a TinyLSTM model that applies a speech recognition algorithm in hearing aid devices can help users distinguish between different sounds.

Vision-Based Applications

TinyML has the potential to play a crucial role in processing computer vision datasets because, for faster outputs, these datasets need to be processed on the edge platform itself. In working toward this, the TinyML model runs into practical challenges when trained using the OpenMV H7 microcontroller board. The developers also proposed an architecture to detect American Sign Language with the help of an ARM Cortex-M7 microcontroller that works with only 496 KB of frame-buffer RAM.

The implementation of TinyML for computer vision applications on edge platforms required developers to overcome the major challenge of a CNN (Convolutional Neural Network) with a high generalization error despite high training and testing accuracy. However, the implementation did not generalize effectively to images from new use cases or to backgrounds with noise. When the developers used the interpolation augmentation method, the model returned an accuracy score of over 98% on test data and about 75% in generalization.

Furthermore, it was observed that when the developers used the interpolation augmentation method, there was a drop in the model’s accuracy during quantization, but at the same time there was also a boost in the model’s inference speed and classification generalization. The developers also proposed a method to further improve generalization accuracy by training the model on data obtained from a variety of different sources, and testing its performance to explore the possibility of deploying it on edge platforms like portable smart watches.

Furthermore, additional studies on CNNs indicated that it is possible to deploy a CNN architecture on devices with limited resources and achieve desirable results. Recently, developers were able to build a framework for detecting medical face masks on an ARM Cortex-M7 microcontroller with limited resources using TensorFlow Lite with a minimal memory footprint. The model size after quantization was about 138 KB, while the inference speed on the target board was about 30 FPS.

Another application of TinyML for computer vision is a gesture recognition device that can be clamped to a cane to help visually impaired people navigate their daily lives more easily. To design it, the developers used a gestures dataset to train the ProtoNN model with a classification algorithm. The results obtained from the setup were accurate, the design was low-cost, and it delivered satisfactory results.

Another significant application of TinyML is in the self-driving and autonomous vehicles industry, because of the scarcity of resources and on-board computation power. To tackle the issue, developers introduced a closed-loop learning method built on the TinyCNN model that proposed an online predictor model that captures images at run time. The major issue developers faced when implementing TinyML for autonomous driving was that a decision model trained on offline data may not work equally well when dealing with online data. To fully maximize the applications of autonomous and self-driving cars, the model should ideally be able to adapt to real-time data.

Data Pattern Classification and Compression

One of the biggest challenges of the current TinyML framework is enabling it to adapt to online training data. To tackle the issue, developers have proposed a method known as TinyOL (TinyML Online Learning) that allows training with incremental online learning on microcontroller units, thus allowing the model to update on IoT edge devices. The implementation was written in C++, and an additional layer was added to the TinyOL architecture.
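
The idea of an extra layer that keeps learning on the device can be sketched as a single linear layer updated one sample at a time, so no training set ever has to be stored. This is an illustrative Python sketch under that assumption, not the authors' C++ implementation; the class name `OnlineLinearLayer` and the learning rate are hypothetical.

```python
class OnlineLinearLayer:
    """A linear layer trained by one stochastic gradient step per incoming sample."""

    def __init__(self, n_inputs: int, lr: float = 0.1):
        self.w = [0.0] * n_inputs
        self.b = 0.0
        self.lr = lr

    def predict(self, x):
        return sum(wi * xi for wi, xi in zip(self.w, x)) + self.b

    def update(self, x, target):
        """Consume one (x, target) pair, adjust the weights, return the squared error."""
        err = self.predict(x) - target
        for i, xi in enumerate(x):
            self.w[i] -= self.lr * err * xi
        self.b -= self.lr * err
        return err * err


if __name__ == "__main__":
    layer = OnlineLinearLayer(n_inputs=1)
    stream = [0.0, 0.25, 0.5, 0.75, 1.0]
    # Simulated sensor stream whose true relation is y = 2x + 1.
    for step in range(2000):
        x = stream[step % len(stream)]
        layer.update([x], 2.0 * x + 1.0)
    print(layer.w, layer.b)
```

Each update touches only the layer's own weights and the current sample, so memory use stays constant no matter how long the stream runs, which is the property that matters on a microcontroller.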

Furthermore, developers also performed auto-encoding on the Arduino Nano 33 BLE sensor board, and the trained model was able to classify new data patterns. Additionally, the development work included designing more efficient and optimized algorithms for the neural networks to support on-device training patterns online.

Research on TinyOL and TinyML has indicated that the number of activation layers is a major issue for IoT edge devices with constrained resources. To tackle the issue, developers introduced the TinyTL (Tiny Transfer Learning) model to make memory use on IoT edge devices much more effective by avoiding the use of intermediate layers for activation purposes. Additionally, developers also introduced a new bias module known as the “lite-residual module” to maximize adaptation capabilities, in turn allowing feature extractors to discover residual feature maps.

Compared to full network fine-tuning, the results favored the TinyTL architecture, showing that TinyTL reduced the memory overhead by about 6.5 times with moderate accuracy loss. When the last layer was fine-tuned, TinyTL improved the accuracy by 34% with moderate accuracy loss.

Furthermore, research on data compression has indicated that data compression algorithms must manage the collected data on a portable device, and to achieve this, the developers proposed TAC (Tiny Anomaly Compressor). TAC outperformed both the SDT (Swing Door Trending) and DCT (Discrete Cosine Transform) algorithms, achieving a maximum compression rate of over 98% and the best peak signal-to-noise ratio of the three algorithms.
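
For readers unfamiliar with the two metrics, the sketch below shows one common way to compute them. The exact definitions used in the TAC evaluation are not given here, so this assumes compression rate means the percentage of samples that did not need to be stored, and that the PSNR peak is the value range of the original signal (which must not be constant). The function names are illustrative.

```python
import math


def compression_rate(original_count: int, kept_count: int) -> float:
    """Percentage of samples removed by the compressor."""
    return 100.0 * (original_count - kept_count) / original_count


def psnr_db(original, reconstructed) -> float:
    """Peak signal-to-noise ratio in dB between a signal and its reconstruction."""
    mse = sum((o - r) ** 2 for o, r in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf                      # perfect reconstruction
    peak = max(original) - min(original)     # assumed peak: range of the original
    return 10.0 * math.log10(peak ** 2 / mse)


if __name__ == "__main__":
    print(compression_rate(original_count=1000, kept_count=20))
    print(psnr_db([0.0, 10.0], [0.0, 9.0]))
```

A higher compression rate means less data to store or transmit, while a higher PSNR means the reconstructed signal stays closer to the original, so a compressor is judged on both together.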

Health Diagnosis

The Covid-19 global pandemic opened new doors of opportunity for the implementation of TinyML, as it is now essential practice to continuously detect respiratory symptoms related to cough and cold. To ensure uninterrupted monitoring, developers have proposed a CNN model, Tiny RespNet, that operates in a multi-model setting. The model is deployed on a Xilinx Artix-7 100t FPGA, which allows the device to process information in parallel with high efficiency and low power consumption. Additionally, the Tiny RespNet model also takes patient speech, audio recordings, and demographic information as input for classification, and the cough-related symptoms of a patient are classified using three distinct datasets.

Furthermore, developers have also proposed a model capable of running deep learning computations on edge devices, a TinyML model named TinyDL. The TinyDL model can be deployed on edge devices like smartwatches and wearables for health diagnosis, and is also capable of carrying out performance analysis to reduce bandwidth, latency, and energy consumption. To deploy TinyDL on handheld devices, an LSTM model was designed and trained specifically for a wearable device, and it was fed collected data as input. The model has an accuracy score of about 75 to 80%, and it was able to work with off-device data as well. These models running on edge devices showed the potential to resolve the current challenges faced by IoT devices.

Finally, developers have also proposed another application to monitor the health of elderly people by estimating and analyzing their body poses. The model uses an agnostic framework on the device that enables validation and rapid fostering to perform adaptations. The model implemented body pose detection algorithms coupled with facial landmarks to detect spatiotemporal body poses in real time.

Edge Computing

One of the major applications of TinyML is in the field of edge computing. With the rise in the use of IoT devices to connect devices across the world, it is essential to set up edge devices, as this will help reduce the load on cloud architectures. These edge devices will feature individual data centers that allow them to carry out high-level computing on the device itself, rather than relying on the cloud architecture. As a result, it will help reduce the dependency on the cloud, reduce latency, enhance user security and privacy, and also reduce bandwidth.

Edge devices using TinyML algorithms will help resolve the current constraints related to power, computing, and memory requirements, as discussed in the image below.

Furthermore, TinyML can also enhance the use and application of Unmanned Aerial Vehicles (UAVs) by addressing the current limitations faced by these machines. The use of TinyML can allow developers to implement an energy-efficient device with low latency and high computing power that can act as a controller for these UAVs.

Brain-Computer Interface or BCI

TinyML has significant applications in the healthcare industry, and it can prove to be highly beneficial in different areas including cancer and tumor detection, health predictions using ECG and EEG signals, and emotional intelligence. The use of TinyML can allow Adaptive Deep Brain Stimulation (aDBS) to adapt successfully to clinical variations. The use of TinyML can also allow aDBS to identify disease-related biomarkers and their symptoms using invasive recordings of brain signals.

Furthermore, the healthcare industry often involves the collection of a large amount of patient data, and this data then needs to be processed to reach specific solutions for treating a patient in the early stages of a disease. As a result, it is vital to build a system that is not only highly effective but also highly secure. When we combine IoT applications with the TinyML model, a new field is born, named H-IoT or Healthcare Internet of Things, and the major applications of H-IoT are diagnosis, monitoring, logistics, spread control, and assistive systems. If we want to develop devices that are capable of detecting and analyzing a patient’s health remotely, it is essential to develop a system that has global accessibility and low latency.

Autonomous Automobiles

Finally, TinyML can have widespread applications in the autonomous vehicles industry, as these vehicles can be utilized in different ways including human tracking, military purposes, and industrial applications. These vehicles have a primary requirement of being able to identify objects efficiently when the object is being searched for.

As of now, autonomous driving is a fairly complex task, especially when developing mini or small-sized vehicles. Recent developments have shown potential to improve autonomous driving for mini vehicles by using a CNN architecture and deploying the model on the GAP8 MCU.

Challenges

TinyML is a relatively new concept in the AI and ML industry, and despite the progress, it is still not as effective as it needs to be for mass deployment on edge and IoT devices.

The biggest challenge currently faced by TinyML devices is their power consumption. Ideally, embedded edge and IoT devices are expected to have a battery life that extends over 10 years. For example, in ideal conditions, an IoT device running on a 2 Ah battery should have a battery life of over 10 years, given that the current draw of the device is about 12 µA. However, in the given state, for an IoT architecture with a temperature sensor, an MCU, and a WiFi module, the current consumption stands at about 176.4 mA, and with this consumption the battery will last for only about 11 hours instead of the required 10 years.
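
The battery figures above follow from dividing capacity by average current. The short sketch below reproduces that arithmetic under an idealized assumption: constant current draw and no self-discharge or capacity derating, which real batteries do not satisfy. The function name is illustrative.

```python
HOURS_PER_YEAR = 24 * 365


def battery_life_hours(capacity_mah: float, current_ma: float) -> float:
    """Idealized runtime: capacity divided by a constant average current."""
    return capacity_mah / current_ma


if __name__ == "__main__":
    capacity_mah = 2000.0                                   # 2 Ah battery

    ideal_hours = battery_life_hours(capacity_mah, 0.012)   # 12 microamps
    actual_hours = battery_life_hours(capacity_mah, 176.4)  # sensor + MCU + WiFi

    print(f"ideal:  {ideal_hours / HOURS_PER_YEAR:.1f} years")
    print(f"actual: {actual_hours:.1f} hours")
```

At 12 µA the idealized lifetime works out to roughly 19 years, comfortably above the 10-year target, while at 176.4 mA it drops to about 11.3 hours, a gap of more than four orders of magnitude in current draw.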

Resource Constraints

To maintain an algorithm’s consistency, it is vital to maintain power availability, and given the current scenario, the limited power available to TinyML devices is a critical challenge. Furthermore, memory limitations are also a significant challenge, as deploying models often requires a large amount of memory to work effectively and accurately.

Hardware Constraints

Hardware constraints make deploying TinyML algorithms at scale difficult because of the heterogeneity of hardware devices. There are thousands of devices, each with its own hardware specifications and requirements, and as a result, a TinyML algorithm currently needs to be tweaked for every individual device, which makes mass deployment a major issue.

Data Set Constraints

One of the major issues with TinyML models is that they do not support existing datasets. This is a challenge for all edge devices, as they collect data using external sensors, and these devices often have power and energy constraints. Therefore, existing datasets cannot be used to train TinyML models effectively.

Final Thoughts

The development of ML techniques has caused a revolution and a shift in perspective in the IoT ecosystem. The integration of ML models in IoT devices will allow these edge devices to make intelligent decisions on their own without any external human input. However, conventionally, ML models often have high power, memory, and computing requirements that make them unfit for deployment on edge devices, which are often resource-constrained.

As a result, a new branch of AI was dedicated to the use of ML for IoT devices, and it was termed TinyML. TinyML is an ML framework that allows even resource-constrained devices to harness the power of AI and ML to ensure higher accuracy, intelligence, and efficiency.

In this article, we have talked about the implementation of TinyML models on resource-constrained IoT devices. This implementation requires training the models, deploying the models on the hardware, and performing quantization techniques. However, given the current scope, ML models ready to be deployed on IoT and edge devices have several complexities and restraints, including hardware and framework compatibility issues.