Language models and generative AI, renowned for their capabilities, are a hot topic in the AI industry. Researchers worldwide are working to improve their efficacy and capability. These systems, typically deep learning models, are pre-trained on extensive text data and incorporate neural networks with self-attention. They use various layers (feedforward, recurrent, embedding, and attention) to process input text and produce relevant outputs.

In most large language models, the feedforward layers hold the majority of the parameters. Studies show that these models use only a fraction of the available neurons to compute the output during inference.

This article introduces UltraFastBERT, a BERT-based framework that matches the efficacy of leading BERT models while using just 0.3% of its neurons during inference, specifically 12 out of 4095 in each layer. We’ll explore UltraFastBERT’s architecture, functionality, and results. Let’s begin.

Traditionally, a language model relies on several components to generate content, including feedforward layers, recurrent layers, embedding layers, and attention layers. These components learn to recognize patterns during training and ultimately generate accurate output from the input text. Each component has its own parameters, and in language models the bulk of these parameters is held by the feedforward layers. However, the feedforward layers do not use 100% of their available neurons to generate the output for every input at inference time. This wastes resources and increases complexity, computation time, and computational cost.

At its core, the UltraFastBERT framework is a variant of the BERT framework that builds on this observation and replaces the feedforward layers in its architecture with fast feedforward networks. As a result, UltraFastBERT uses only 0.3% of the available neurons while delivering results comparable to BERT models of similar size and training procedure, especially on downstream tasks. Because of this design, the intermediate layers in the UltraFastBERT framework are exponentially faster.

Consider a fast feedforward (FFF) network and a feedforward (FF) network, each with n neurons. The time complexity of a forward pass is O(n) for the feedforward network but O(log₂ n) for the fast feedforward network. The difference arises because the neurons of a fast feedforward network are organized into a balanced binary tree, and for a given input the network conditionally executes only one branch of that tree. Furthermore, inference on a fast feedforward network amounts to CMM, or Conditional Matrix Multiplication, in which each input row is dotted with the weight columns one at a time, and the output of the previous dot product determines which weight column to proceed with. As a result, every neuron is used by only some of the inputs, and no input needs more than a handful of neurons to be handled by the network. CMM contrasts with DMM, or Dense Matrix Multiplication, which computes the dot product of every input with every weight column.
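To make the contrast between the two multiplication styles concrete, here is a minimal pure-Python sketch. It is not the authors' implementation: the helper names, the heap-style column layout, and the rule that a positive dot product selects the right child are all assumptions made for illustration.

```python
def dot(a, b):
    """Dot product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def dense_dots(x, columns):
    """DMM-style: the input row is dotted with every weight column."""
    return [dot(x, col) for col in columns]


def conditional_dots(x, columns, depth):
    """CMM-style: follow a single root-to-leaf path of the tree.

    Assumes the 2**(depth + 1) - 1 columns are stored in heap order, so the
    children of node i are 2*i + 1 and 2*i + 2, and that a positive dot
    product selects the right child (both are illustrative choices).
    """
    node, visited = 0, []
    for _ in range(depth + 1):
        value = dot(x, columns[node])
        visited.append((node, value))
        node = 2 * node + 1 + int(value > 0)
    return visited


# A toy tree of depth 2 has 7 neurons (weight columns).
columns = [[1, 0], [0, 1], [-1, 0], [1, 1], [0, 0], [2, 0], [0, -3]]
x = [1, -1]
print(len(dense_dots(x, columns)))           # dense: 7 dot products
print(len(conditional_dots(x, columns, 2)))  # conditional: 3 dot products
```

The dense version touches all 7 columns, while the conditional version touches one column per tree level, which is where the logarithmic cost comes from.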

To sum up, UltraFastBERT is a BERT-based framework that delivers results comparable to state-of-the-art BERT language models and that:

- Uses only 0.3% of the available neurons during inference, engaging just 12 of the 4095 neurons in each layer.

- Delivers strong performance comparable to state-of-the-art BERT models when fine-tuned on downstream tasks.

- Provides a native implementation of CMM, or Conditional Matrix Multiplication, which forms the basis of the fast feedforward network and ultimately leads to a 78x speedup over native optimized DMM, or Dense Matrix Multiplication.

Feedforward Neural Networks

A feedforward neural network is one of the most straightforward artificial neural networks: it moves information in only the forward direction, from the input nodes to the output nodes via the hidden nodes. A key feature of a feedforward neural network is that it contains no loops or cycles, which makes it simpler to construct than an RNN (Recurrent Neural Network) or a CNN (Convolutional Neural Network). The architecture comprises three components, namely input layers, hidden layers, and output layers. Every layer consists of units called neurons, and the layers are connected to one another by weights.

The neurons in the input layer receive the inputs and forward them to the next layer. The number of neurons in the input layer is determined by the dimension of the input data. Next come the hidden layers, which are exposed to neither the input nor the output and perform the required computations. The neurons in each hidden layer take the weighted sum of the outputs of the previous layer, apply an activation function, and pass the result to the next layer; the process then repeats layer by layer. Finally, the output layer produces the output for the given inputs. Every neuron in each layer of a feedforward network is connected to every neuron in the next layer, which makes feedforward neural networks fully connected. Weights represent the strength of the connections between neurons, and the network learns patterns by updating these weights on the basis of the error in its output.

Moving forward, there are two key stages in the working of a feedforward neural network: the feedforward phase and the backpropagation phase.

Feedforward Phase

In the feedforward phase, the input is fed to the network and propagates forward. The hidden layers compute the weighted sum of their inputs and introduce non-linearity into the model by passing that sum through an activation function such as ReLU, Sigmoid, or Tanh. The process repeats until the signal reaches the output layer and the model makes a prediction.
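The feedforward phase can be sketched in a few lines of plain Python. This is an illustrative toy, not code from any framework: the function names, the tiny layer sizes, and the weight values are all made up for the example.

```python
import math


def relu(z):
    """Rectified linear unit."""
    return max(0.0, z)


def sigmoid(z):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases, activation):
    """Weighted sum of the inputs per neuron, followed by the activation."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Propagate the input through each (weights, biases, activation) layer."""
    out = inputs
    for weights, biases, activation in layers:
        out = dense_layer(out, weights, biases, activation)
    return out


# Hypothetical 2-2-1 network: one ReLU hidden layer, one sigmoid output neuron.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0], relu),
    ([[1.0, 1.0]], [0.0], sigmoid),
]
prediction = forward([2.0, 1.0], layers)
print(prediction)
```

Here the hidden layer produces the activations 1.0 and 1.5, and the output neuron applies the sigmoid to their sum.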

Backpropagation Phase

Once the model makes a prediction, it computes the error between the generated output and the expected output. The error is then propagated backward through the network, and the network uses a gradient descent optimization algorithm to adjust the weights in an attempt to minimize the error.
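As a rough illustration of the error-driven weight update, the sketch below trains a single linear neuron with per-sample gradient descent on a squared-error loss. With only one neuron, the backward pass reduces to a single chain-rule step, so this is a deliberately simplified stand-in for full backpropagation. The function name, learning rate, and toy data are assumptions for the example.

```python
def train_linear_neuron(samples, lr=0.1, epochs=500):
    """Fit pred = w * x + b by gradient descent on 0.5 * (pred - target) ** 2."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, target in samples:
            pred = w * x + b        # forward pass
            error = pred - target   # derivative of the loss w.r.t. pred
            w -= lr * error * x     # chain rule: d(pred)/dw = x
            b -= lr * error         # chain rule: d(pred)/db = 1
    return w, b


# Toy data generated from target = 2 * x + 1.
samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
w, b = train_linear_neuron(samples)
print(w, b)  # should approach w = 2 and b = 1
```

In a multi-layer network the same idea applies, except that the error term is passed backward through each layer's weights and activation derivatives before the update.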

UltraFastBERT: Model Architecture and Working

The UltraFastBERT framework is built on the crammedBERT architecture and uses all of its components except for the nature of the intermediate layers. Specifically, the UltraFastBERT framework replaces the feedforward networks contained in the intermediate layers of the crammedBERT transformer encoder with fast feedforward networks. It also makes the following changes to the original fast feedforward networks.

- The framework eliminates the difference between leaf and non-leaf nodes by using the GeLU activation function across all nodes, equipping all nodes with output weights, and removing output biases entirely. It then fixes the leaf size to 1.

- Lastly, the framework allows multiple fast feedforward network trees in parallel by jointly computing the intermediate layer output. It does so by summing the outputs of the individual trees and presenting that sum as the intermediate layer output.
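The changes listed above can be sketched as follows. This is a minimal pure-Python reading of the description, not the authors' code: the function names, the heap-order node layout, and the "positive logit goes right" rule are assumptions. Every visited node applies GeLU, has its own output weights, carries no output bias, and the outputs of parallel trees are summed.

```python
import math


def gelu(z):
    """Exact GeLU: z * Phi(z), with Phi the standard normal CDF."""
    return 0.5 * z * (1.0 + math.erf(z / math.sqrt(2.0)))


def fff_tree_forward(x, w_in, w_out, depth):
    """Evaluate one tree: only the depth + 1 nodes on the chosen path contribute.

    w_in[node] holds a node's input weights, w_out[node] its output weights.
    Nodes are assumed to be stored in heap order (children 2*i + 1, 2*i + 2).
    """
    out = [0.0] * len(w_out[0])
    node = 0
    for _ in range(depth + 1):
        logit = sum(a * b for a, b in zip(x, w_in[node]))
        activation = gelu(logit)
        for j, weight in enumerate(w_out[node]):
            out[j] += activation * weight   # no output bias is added
        node = 2 * node + 1 + int(logit > 0)
    return out


def fff_layer_forward(x, trees, depth):
    """Sum the outputs of several parallel trees, each a (w_in, w_out) pair."""
    total = [0.0] * len(trees[0][1][0])
    for w_in, w_out in trees:
        for j, value in enumerate(fff_tree_forward(x, w_in, w_out, depth)):
            total[j] += value
    return total


# Hypothetical depth-1 tree (3 nodes) with 1-dimensional input and output.
w_in = [[1.0], [1.0], [-1.0]]
w_out = [[1.0], [10.0], [100.0]]
print(fff_tree_forward([1.0], w_in, w_out, 1))
print(fff_layer_forward([1.0], [(w_in, w_out), (w_in, w_out)], 1))
```

For the input above, the path visits the root and then the right child, so the left child's output weights never enter the result.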

Moving on to training, the UltraFastBERT framework follows the training procedure of the crammedBERT framework, which includes disabling dropout in pretraining and using the 1-cycle triangular learning rate schedule. The model is then fine-tuned for a total of 5 epochs to maximize its performance on a wide range of tasks, mainly from the GLUE benchmark.
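For readers unfamiliar with a 1-cycle triangular schedule, the sketch below shows its general shape: the learning rate rises linearly to a peak and then falls linearly back to zero. The function name, the default peak position at the midpoint, and the example values are assumptions; the source does not state the exact settings used.

```python
def triangular_one_cycle_lr(step, total_steps, peak_lr, peak_fraction=0.5):
    """Linear warm-up to peak_lr, then linear decay to zero (one triangle)."""
    if not 0 <= step <= total_steps:
        raise ValueError("step must lie in [0, total_steps]")
    if not 0.0 < peak_fraction < 1.0:
        raise ValueError("peak_fraction must lie strictly between 0 and 1")
    peak_step = peak_fraction * total_steps
    if step <= peak_step:
        return peak_lr * step / peak_step
    return peak_lr * (total_steps - step) / (total_steps - peak_step)


# Hypothetical run of 100 steps with a peak learning rate of 1e-3.
schedule = [triangular_one_cycle_lr(s, 100, 1e-3) for s in (0, 25, 50, 75, 100)]
print(schedule)
```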

Inference

Inference is a critical part of a fast feedforward network. Feedforward networks make up a major chunk of large language models, and replacing them with fast feedforward networks offers exceptional acceleration potential. To understand this potential, take one of the most advanced language models, GPT-3, in which the feedforward network in every transformer layer contains over 49,100 neurons. If trainable, a fast feedforward network with a maximum depth of 15 could replace the original feedforward network. The resulting network would have over 65,000 neurons but would use only 16 of them for inference, which amounts to roughly 0.03% of the neurons in GPT-3’s feedforward network.
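The arithmetic behind these figures can be checked with a short sketch. It assumes a complete binary tree in which a tree of depth d has 2^(d+1) - 1 neurons and one neuron per level is visited. The function name is hypothetical, and the value 49,152 is an assumed exact width for the GPT-3 feedforward layer, which the text above gives only as "over 49,100".

```python
def fff_neuron_counts(depth):
    """Return (total neurons, neurons used per inference) for one tree."""
    total = 2 ** (depth + 1) - 1   # complete binary tree with depth + 1 levels
    used = depth + 1               # one neuron visited per level
    return total, used


# UltraFastBERT: 12 of 4095 neurons per layer.
total, used = fff_neuron_counts(11)
print(total, used, round(100 * used / total, 1))        # 4095 12 0.3

# GPT-3 example: a depth-15 tree uses 16 neurons.
GPT3_FF_NEURONS = 49152  # assumed exact value, not stated in the text
total, used = fff_neuron_counts(15)
print(total, used, round(100 * used / GPT3_FF_NEURONS, 2))  # 65535 16 0.03
```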

Algorithm and Compatibility

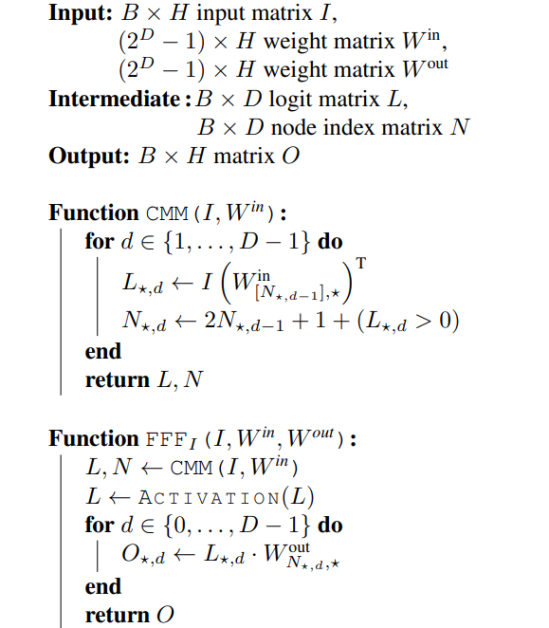

The UltraFastBERT framework uses a recursive algorithm for fast feedforward inference, which is given as pseudocode in the image above.

Here, B represents the batch size, H represents the width of the input layers, and M represents the columns. A major concern with the Conditional Matrix Multiplication approach is whether it makes fast feedforward networks incompatible with the processes already in use for Dense Matrix Multiplication and with existing deep learning frameworks. Fortunately, the use of CMM neither affects performance nor introduces incompatibility, although it does increase caching complexity.

It is important to note that, as part of feedforward inference, single-threaded Dense Matrix Multiplication relies on executing MAC (Multiplication and Accumulation) instructions. As a result, replacing DMM with CMM benefits CPUs, because fewer MAC instructions are needed to compute the layer output per element. Moreover, despite relying on conditionality, which is usually associated with branching, the “neural branching” manifests only as an addition to the memory offset of the relevant pointers. Consequently, instruction branch prediction is never fully engaged to facilitate the conditionality of CMM, and the framework loads only the relevant columns of the weight matrix, one at a time. Furthermore, since the framework performs row-column dot products, SIMD (single instruction, multiple data) vector parallel processing remains a good option for speeding up inference implementations on specific devices.
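A back-of-the-envelope count shows why fewer MAC instructions are needed. The sketch assumes one MAC per multiply-add in each row-column dot product, and a complete binary tree of the given depth. The function name and the input width of 768 are hypothetical choices for illustration, not values taken from the text.

```python
def mac_counts(batch_size, input_width, depth):
    """Return (dense MACs, conditional MACs) for one layer.

    Dense: every input row is dotted with all 2**(depth + 1) - 1 columns.
    Conditional: every input row is dotted with only depth + 1 columns.
    """
    neurons = 2 ** (depth + 1) - 1
    dense = batch_size * input_width * neurons
    conditional = batch_size * input_width * (depth + 1)
    return dense, conditional


# One input row of assumed width 768 against a depth-11 tree (4095 neurons).
dense, conditional = mac_counts(1, 768, 11)
print(dense, conditional, dense / conditional)
```

The ratio depends only on the tree depth, not on the batch size or input width, and it is an upper bound on the achievable speedup rather than a measured result.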

UltraFastBERT: Performance and Results

We’ll now look at the performance of the UltraFastBERT framework on fine-tuning as well as inference tasks to analyze how it fares against state-of-the-art language models.

Fine-Tuning Results

The following figure shows the performance of various models on the GLUE-dev test datasets. Here, N is the number of neurons available to the frameworks for training, and “Avg” is the average score across all tasks.

As can be clearly seen, the UltraFastBERT framework, trained on an A6000 GPU for over 24 hours, retains almost 96% of the predictive performance of the original BERT framework on GLUE downstream tasks. Performance also declines as the depth of the fast feedforward networks increases, although most of the degradation occurs only on the CoLA task. If the CoLA task is set aside, the UltraFastBERT framework retains about 98.6% of the predictive performance.

Inference Results

In this section, we’ll compare the performance of several feedforward and fast feedforward networks across inference implementations, which are spread over three levels.

- In Level 1, the implementation is built using BLAS Level 1 routines, namely scalar-vector products and vector-vector dot products.

- In Level 2, the implementations use BLAS Level 2 routines, namely batched scalar-vector products and batched matrix-vector dot products.

- In Level 3, the implementations use the non-batched BLAS Level 3 matrix-matrix multiplication. Although this is the fastest implementation available for feedforward networks, no such implementation is available for fast feedforward networks, because the library does not support the vector-level sparsity of Conditional Matrix Multiplication.

Additionally, the UltraFastBERT framework deploys GPU implementations using either custom CUDA kernels or PyTorch kernels.

The above table compares the performance of the UltraFastBERT framework with its predecessors, the BERT-based frameworks, in terms of feedforward and fast feedforward layers. Every column contains the relative inference speedup of the fast feedforward implementation over the feedforward implementation when both use the same linear-algebraic routine primitives.

However, it is worth noting that the speedups reported in the above table are meant as “fair comparisons”, i.e. both the fast feedforward and feedforward implementations use identical linear-algebraic routine primitives. Furthermore, on Level 1 and Level 2, the fast feedforward implementations perform inference 48x and 78x faster, respectively, than the fastest feedforward implementation.

Final Thoughts

In this article, we have talked about UltraFastBERT, a variant of the BERT framework. It builds on the observation that feedforward layers do not use 100% of their available neurons to generate the output for every input at inference time, which wastes resources and increases complexity, computation time, and computational cost. UltraFastBERT therefore replaces the feedforward layers in its architecture with fast feedforward networks. As a result, it uses only 0.3% of the available neurons while delivering results comparable to BERT models of similar size and training procedure, especially on downstream tasks.

Because of this design, the intermediate layers in the UltraFastBERT framework are exponentially faster. Moreover, the strong performance of the UltraFastBERT framework is evidence that LLMs can perform well while engaging only a fraction of their parameters for individual inferences: UltraFastBERT uses only 0.3% of the available neurons during inference and still achieves a 78x speedup in inference time.